In last night’s #lrnchat, the topic was virtual worlds (VWs). This was largely because several of the organizers had recently attended one or another of the SRI/ADL meetings on the topic, but also because one of the organizers (@KoreenOlbrish) is majorly active in the business of virtual worlds for learning through her company Tandem Learning. It was a lively session, as always.

The first question to be addressed was whether virtual worlds had been over- or underhyped. It isn’t one or the other, of course. Some felt they were underhyped, as there’s great potential. Others thought they’d been overhyped, as there’s lots of noise but few real examples. Both are true. Everyone pretty much derided the presentation of PowerPoints in Second Life, however (and rightly so!).

The second question explored when and where virtual worlds make sense. Others echoed my prevailing view that VWs are best for inherently 3D and social environments. Some interesting nuances came in exploring the thought that 3D doesn’t have to be at our scale: we can do micro or macro 3D explorations as well, and vary not just distance but also time. Imagine exploring a slowed-down, expanded version of a chemical reaction with an expert chemist! Another good idea was contextualized role plays. Have to agree with that one.

Barriers were explored, and of course value propositions and technical issues ruled the day. Making the case is one problem (a Forrester report was cited that says enterprises do not yet get VWs), and the technical (and cognitive) overhead is another. I wasn’t the only one who mentioned standards.

Another interesting challenge was the lack of experience in designing learning in such environments. It’s still early days, I’ll suggest, and a lot of what’s being done reproduces other activities in the new environment (the classic problem: initial uses of new technology mirror old technology). I suggested that we’ve principles (what good learning is and what VW affordances are) that should guide us to new applications without having to have that ‘reproduction’ stage.

I should note that having principles does not preclude new opportunities coming from experimentation, and I laud such initiatives. I’ve opined before that it’s an extension of the principles from Engaging Learning combined with social learning, both areas I’ve experience in, so I’m hoping to find a chance to really get into it, too.

The third question explored what lessons can be learned from social media to enhance appropriate adoption of VWs. Comments included that they needed to be more accessible and reliable, that they’ll take nurturing, and that they’ll have to be affordable.

As always, the lrnchat was lively, fun, and informative. If you haven’t tried one, I encourage you to at least take it for a trial run. It’s not for everyone, but some admitted to it being an addiction! ;) You can find out more at the #lrnchat site.

For those who are interested in more about VWs, I want to mention that there will be a virtual world event here in Northern California September 23-24, the 3D Training, Learning, & Collaboration conference. In addition to Koreen, people like Eilif Trondsen & Tony O’Driscoll (who has a forthcoming book with Karl Kapp on VW learning) will be speaking, and companies like IBM and ThinkBalm are represented, so it should be a good thing. I hope to go (and pointing to it may make that happen, full disclosure :). If you go, let me know!

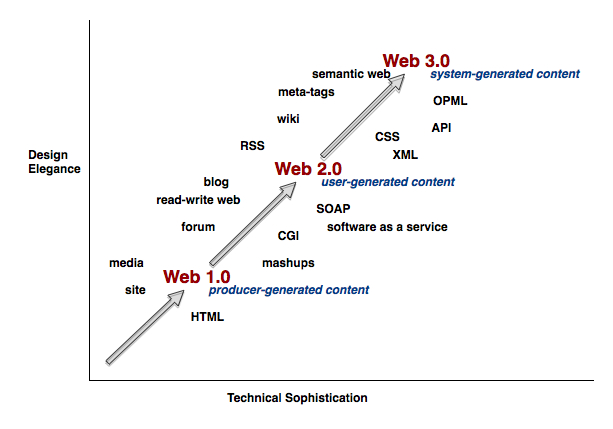

However, if we think about web 2.0 as user-generated content, we can think about 1.0 as producer-generated content. The original web was what people savvy enough (whether tech or biz) could get up on the web. The new web is where it’s easy for anyone to get content up, through blogs, photo-, video-, and slide-sharing sites, and more.

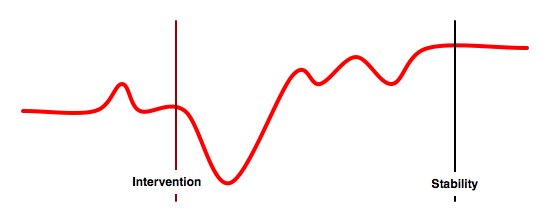

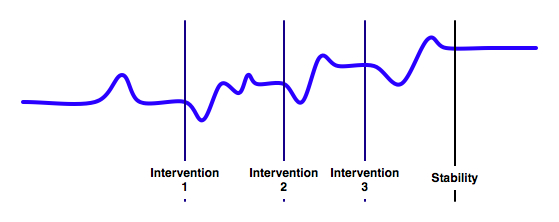

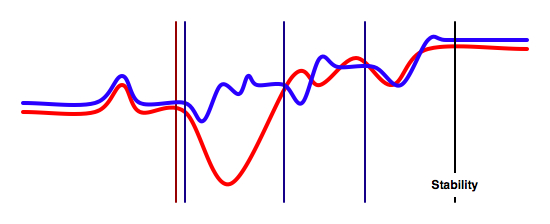

By having smaller introductions that break up the intervention, you decrease the negative effects. The point is to take small steps that make improvements instead of a monolithic change.

The goal is to maximize improvements while minimizing disruption, and doing so in ways that capitalize on previous efforts and existing infrastructure. To do this really requires understanding how the different components relate: how content models support mobile, how performance support articulates with formal learning and social media, and more. And, of course, understanding the nuances of the underpinning elements and how they are optimized.