A couple of weeks ago, I posited what I thought might make the basis for a sustainable degree program: one that prepares students for a world of increasing change. In it I talked about the domains that I thought would provide a solid basis, but I did not talk about something else important. It’s also about the learning-to-learn and work skills that accompany the foundational knowledge. It’s about ‘meta’ skills.

Meta-skills, like learning to learn and learning to work well (21C skills), can’t be developed on their own. They need to be layered on top of other things. We teach them across other domains, so they’re abstracted and can be reapplied to new problems and situations. Thus, challenges that exercise these skills must reappear across the curriculum.

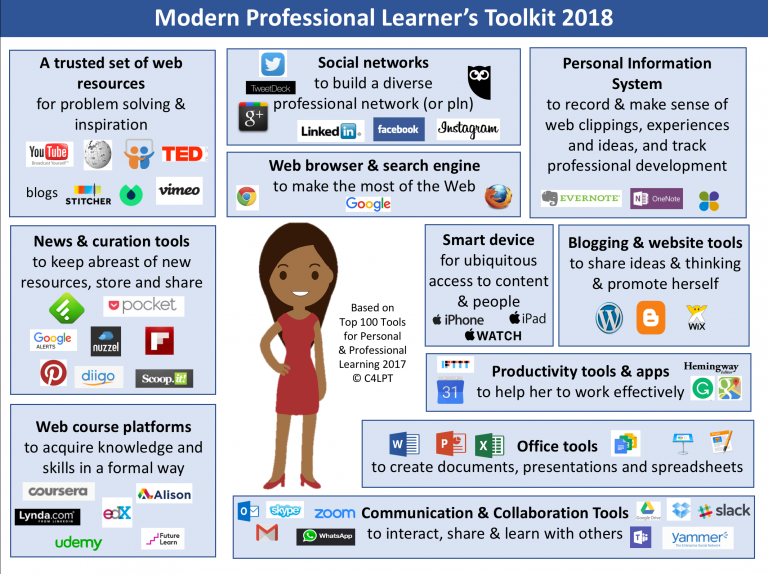

What skills? I think things like the ability to research questions, design and run experiments, communicate results, collaborate, ask and answer questions in ways that work, and more. This includes using technology for these tasks, as well as working with others. Thus, creating spreadsheets, diagrams, and presentations is as much a part as is participating in and leading projects, commenting constructively, and coaching and mentoring.

So, using an application-based pedagogy, there is a series of activities that require application, but they vary in type (research, design, problem-solve, interact) and in output. Then we evaluate those cross-discipline skills as well as the domain knowledge and skills. How was your research process on this interface design project? How well did you communicate your learnings from that experiment on recursion in learning programs?

The curriculum and the pedagogy can both be refined, and in fact are interleaved. Then we use technology both as a tool for learners to construct (make) outcomes and as a way to track their progress. We need to go meta in both!

And this isn’t true just for formal education; it can and should play a role in organizational learning as well. We shouldn’t take our learners’ learning skills for granted, and we can and should track and develop them too. This isn’t currently supported, but perhaps it can be in existing tools, or we may need a new platform. But we should, I suggest. Your thoughts?