My background is in learning technology design, leveraging a deep grounding (read: Ph.D.) in cognition and long experience with technology. I have worked as a learning game designer/developer, researcher and academic, project leader on advanced applications, program manager, and more. More recently, I’ve been working with many different types of organizations, including not-for-profits, Fortune 500 companies, small-to-medium enterprises, government, and education, through workshops, project deliverables, strategic consulting, and writing.

This crosses formal learning, mobile learning, serious games, performance support, content systems, social and informal learning, and more. I reckon there’s a benefit to 30+ years of being fortunate enough to be at the cutting edge, and I work hard to maintain currency with developments in learning, technology, and organizational needs. I like to think I’m pretty good at it, and I am for hire. I’ve worked in most of the obvious ways: fixed-fee deliverables when we can define a scope, hourly/daily rates when it’s uncertain, and on a retainer basis to keep my expertise ‘on tap’.

What I have not done is work on a commission basis. That is, I don’t push someone’s solution on you for a cut of the action. I cut a few such deals in the early days, particularly for long-term clients/partners, but to no avail. And I’m fine with that. In fact, that’s now my stance.

There are reasons for this, both principled and pragmatic. On principle, I want to remain able to say Solution X is the best when I truly believe it to be, and not be swayed because Solution Y would offer me some financial reward. I believe my independence is in my clients’ best interests. This holds true for systems, vendors, individuals, whatever. I want you to be able to trust what I say, and know that it’s coming from my expertise, not some other influence. When you get my expert opinion, it addresses your needs alone. And, pragmatically, I’m not a salesperson; it’s not in my nature.

I also don’t design solutions and outsource development. I have trusted partners I can work with, so I don’t need solicitations to show me your skills. I’m sure your team is awesome too, but I don’t want to take the time to vet your abilities, and I certainly wouldn’t represent them without scrutiny. When I have needs, I’ll reach out.

So I welcome hearing from you when you want some guidance on reviewing your processes, assessing or designing your strategy, ramping up your capabilities, considering markets, looking for collateral, and more. This is as true for vendors as for other organizations. But don’t expect me to learn about your solutions (particularly for free) and flog them to others. Fair enough? Am I missing something?

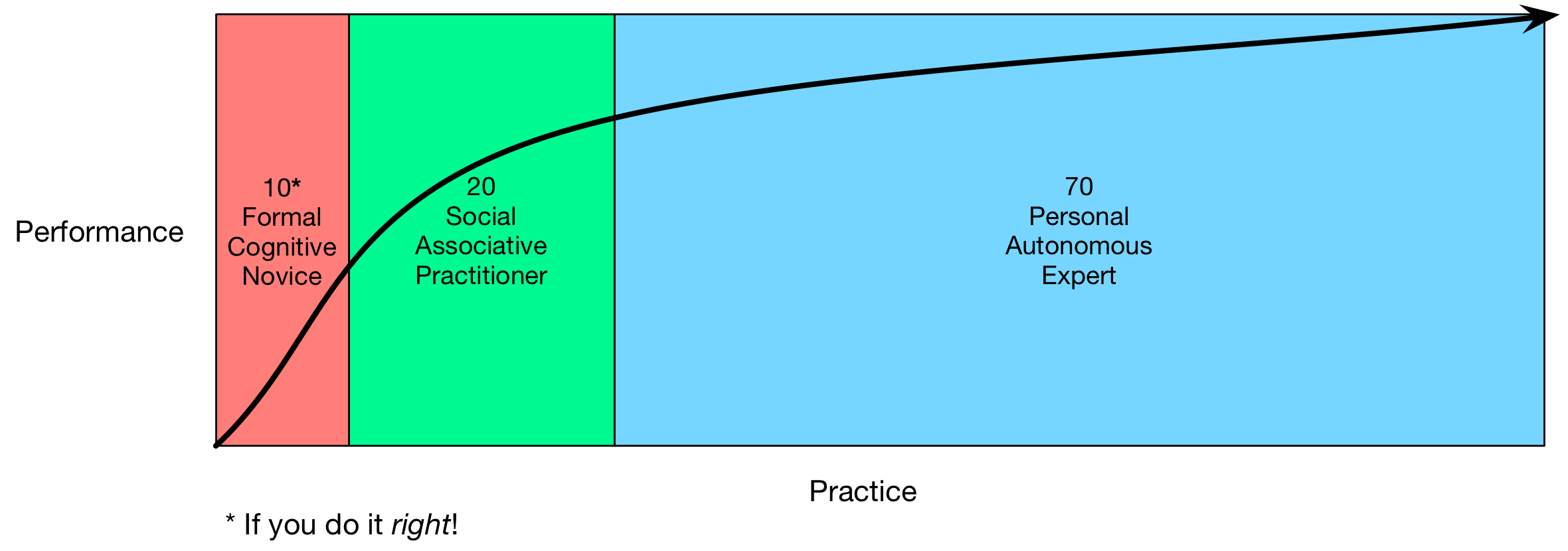

This, to me, maps more closely to 70:20:10, because you can see the formal (10) playing a role to kick off the semantic part of the learning, then coaching and mentoring (the 20) support the integration or association of the skills, and then the 70 (practice, reflection, and
