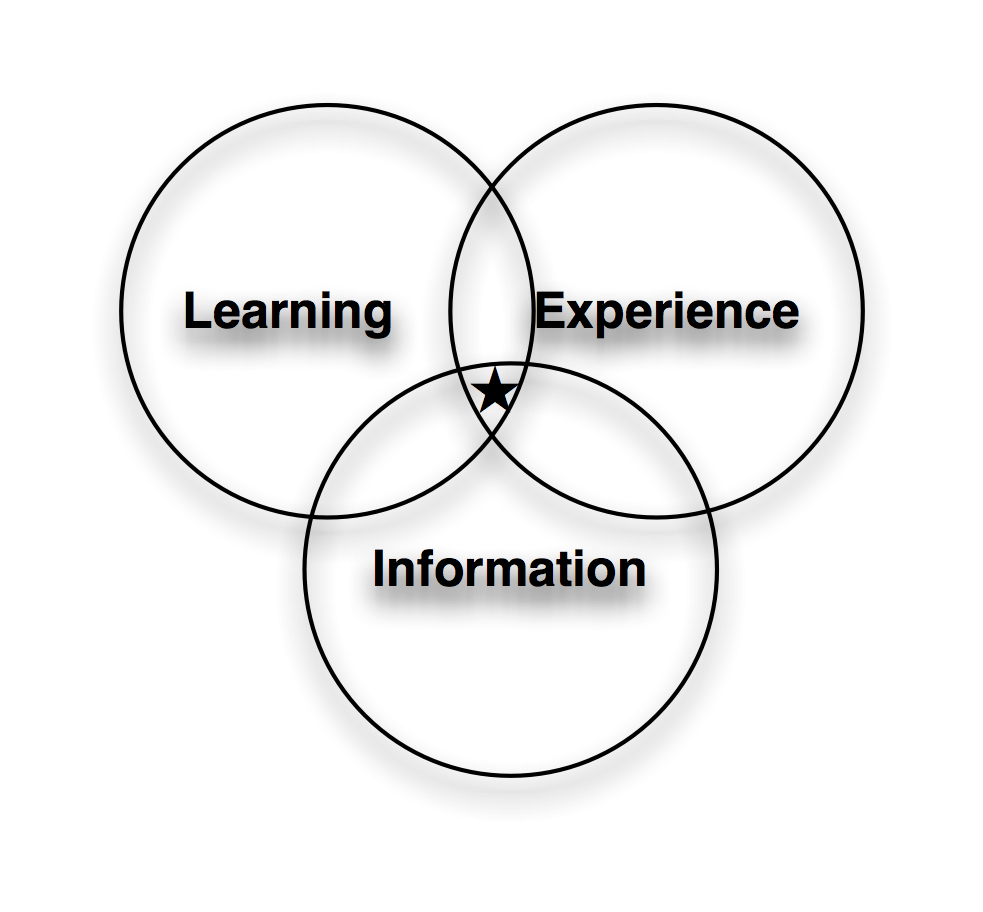

Another way to think about what I was talking about yesterday, revisiting the training department, is to take a broader view. I was thinking about it as Learning Design: a view that incorporates instructional design, information design, and experience design.

I’m leery of the term instructional design, as that label has been tarnished by too many cookie-cutter examples and rote approaches for me to feel comfortable with it (see my Broken ID series). However, real instructional design theory (particularly when it’s cognitive-, social-, and constructivist-aware) is great stuff (e.g. Merrill, Reigeluth, Keller, et al.); it’s just that most of it has been neutered in interpretation. The point is that really understanding how people learn is critical. And that includes Cross’ informal learning. We need to go beyond just the formal courses and provide ways for people to self-help and group-help.

However, it’s not enough. There’s also understanding information design. Now, instructional designers who really know what they’re doing will say, yes, we take a step back and look at the larger picture, and sometimes it’s job aids, not courses. But I mean more here. I’m talking about what goes into sites, job aids, and more: the information architecture, information mapping, visual design, and so on, to really communicate and to support the need to navigate. I see reasonable instructional design undone by bad interface design (and, of course, vice versa).

Now, how much would you pay for that? But wait, there’s more! A third component is the experience design. That is, viewing it not from a skill-transfer perspective, but instead from the emotional view. Is the learner engaged, motivated, challenged, and left feeling fulfilled? I reckon that’s largely ignored, yet myriad evidence is pointing us to the realization that the emotional connection matters.

We want to integrate the above. Putting a different spin on it, it’s about the intersection of the cognitive, affective, conative, and social components of facilitating organizational performance. We want to do the least we can to achieve that, and we want to support working alone and together.

There’s both a top-down and a bottom-up component to this. At the bottom, we’re analyzing how to meet learner needs, whether it’s fully wrapped with motivation, or just the necessary information, or providing the opportunity to work with others to answer the question. It’s about infusing our design approaches with a richer picture, respecting our learners’ time, interests, and needs.

At the top, however, it’s looking at an organizational structure that supports people and leverages technology to optimize the ability of the individuals and groups to execute against the vision and mission. From this perspective, it’s about learning/performance, technology, and business.

And it’s likely not something you can, or should, do on your own. It’s too hard to be objective when you’re in the middle of it, and the breadth of knowledge to be brought to bear is far-reaching. As I said yesterday, what I reckon is needed is a major revisit of the organizational approach to learning. With partners we’ve been seeing it, and doing it, but we reckon there’s more that needs to be done. Are you ready to step up to the plate and redesign your learning?