I was recently pinged about a new virtual world, a ‘metaverse’-inspired place for L&D. It looked like a lot of previous efforts! I admit I was underwhelmed, and I think sharing why might be worthwhile. So here are some meta-reflections.

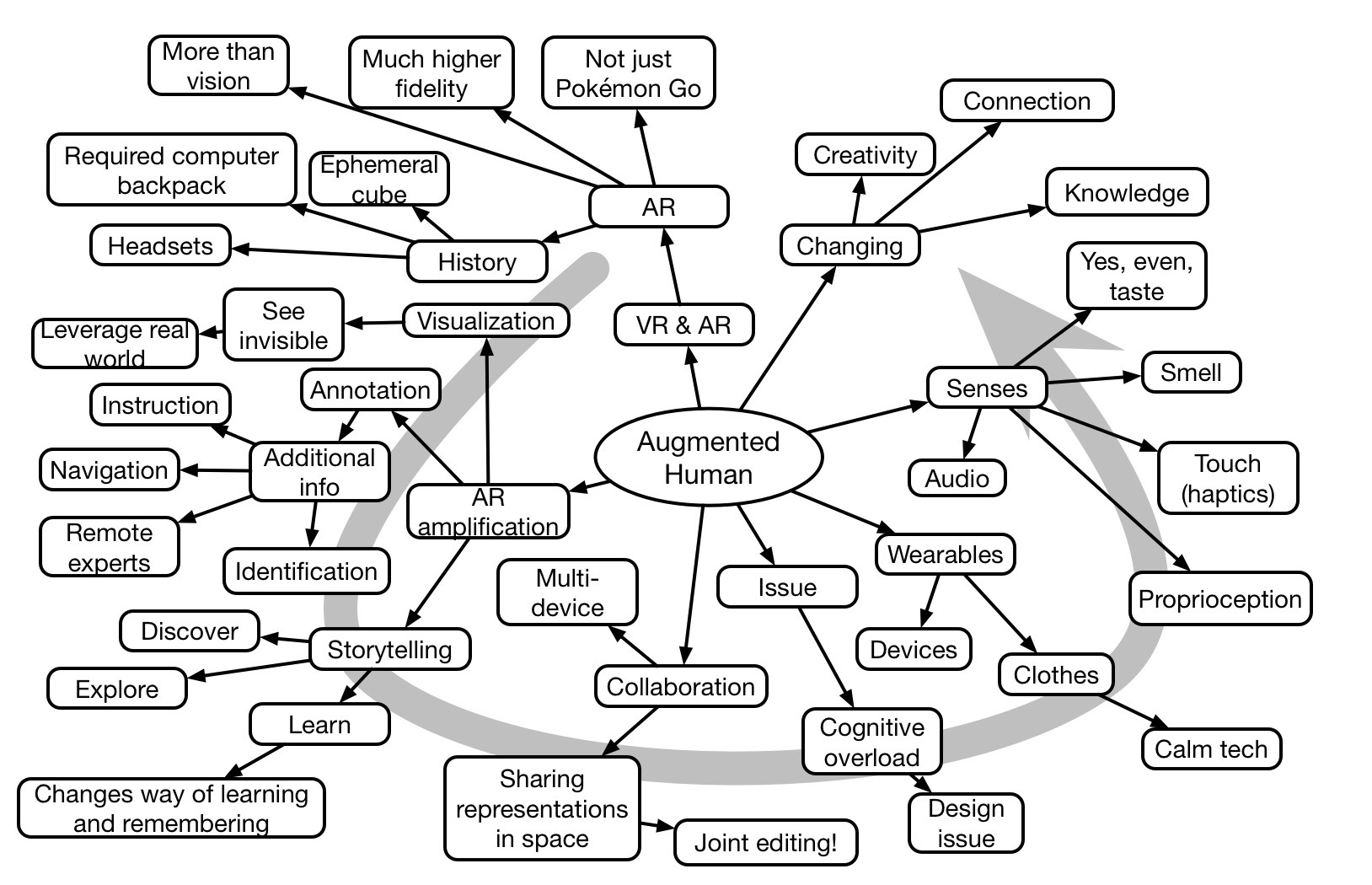

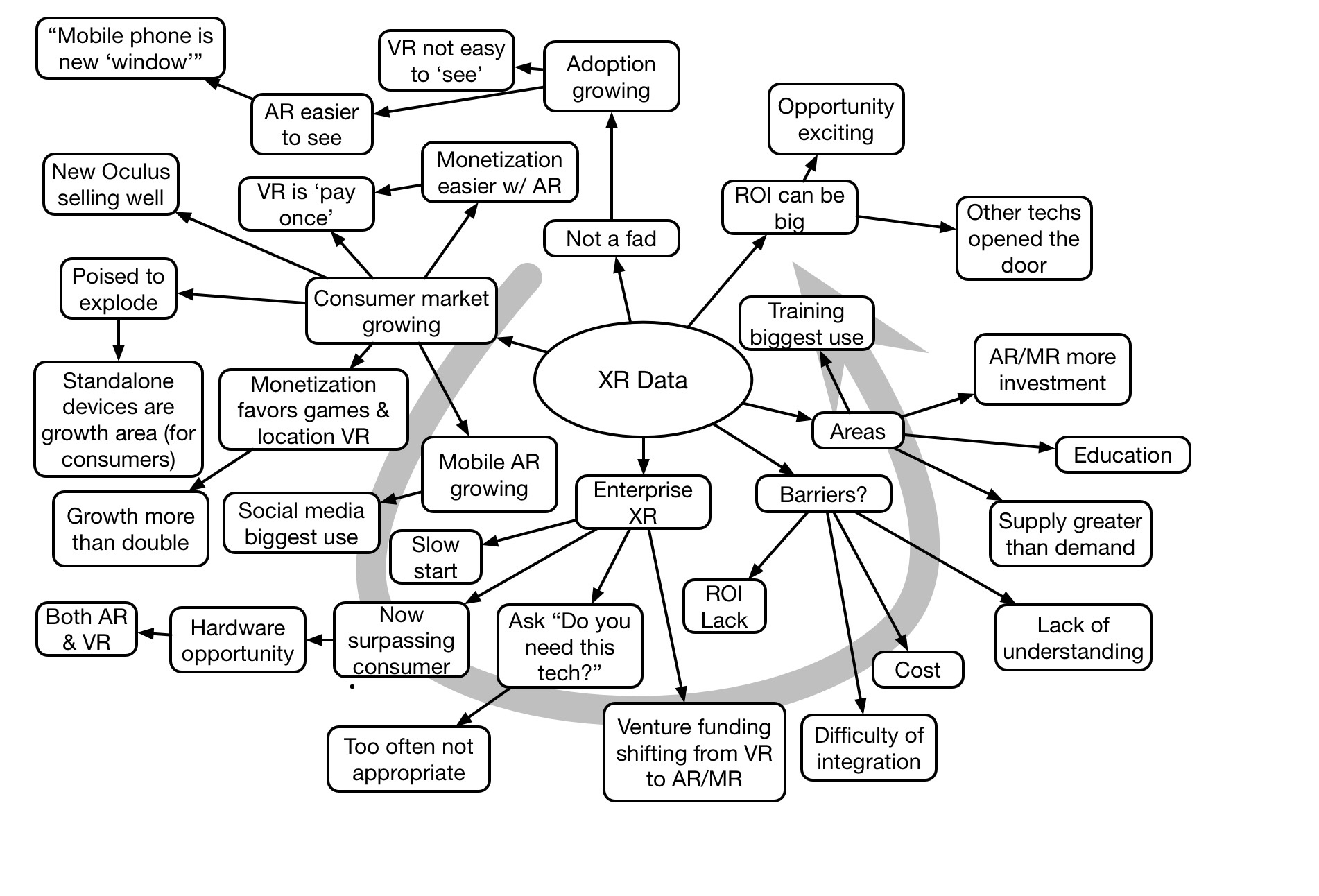

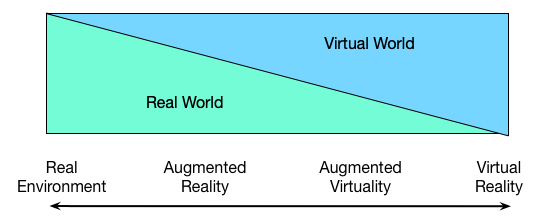

I’ve written before on virtual worlds. In short, I think that when you need to be social and 3D, they make sense. At other times, they carry a lot of overhead before they’re useful, and the same needs can be met in other ways. Further, to me, the metaverse really is just another virtual world. Your mileage may vary, of course.

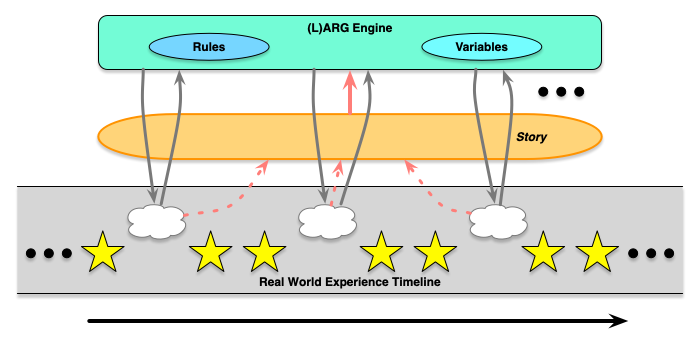

This new virtual world had, like many others, the means to navigate in 3D and to place information around the space. The demo they had was a virtual museum, which, I presume, is a nice alternative to trying to get to a particular location. On the other hand, if it’s all digital, is this the best way to do it? Why navigate around in 3D? Why not treat it as an infographic, and work in 2D, leading people through the story? What did 3D add? Not much, that I could see.

My take has been, and continues to be, as they say, “horses for courses”. That is, use the right tool for the job. I complained about watching a PowerPoint presentation in Second Life (rightly so). Sure, I get that we tend to use new technologies in old ways first, until we get on top of the new capabilities. However, I also argue that we can short-circuit this process if we look at core affordances.

The follow-up message was that this was the future of L&D, and we’d get away from slide decks and Zoom calls, and do it all in this virtual world. I deeply desire this not to be true! My take is that slide decks, Zoom, virtual worlds, and more all have a place. It’s a further instance of the principle: get the design right first, then figure out how to implement it. I want an ecosystem of resources.

Sure, I get that such a metaverse could be an integrating environment. However, do you really want to do all your work in a virtual world? Some things you can’t, I reckon: machining materials, for instance. Moreover, we get benefits from being out in the world. There are other issues as well. You might be better able to deal with diversity, etc., in a virtual world, but it’ll disadvantage some folks. Better, maybe, to address the structural problems rather than try to cover them over?

As always, my takeaway is: use technology to implement better approaches; don’t meld your approaches to your tech. Those are, at least, my meta-reflections. What are yours?