In working on something, I’m looking at the likely steps people take. Of course, I’m listing them from easiest to most useful (with the hope that folks understand they should take the latter). However, it’s making me think that, too often, people are looking for the easy answer, not the most accurate one, because they really don’t know the problem. When does the easy answer make sense? Are we letting ourselves off the hook too much?

So, for instance, in learning we really should do analysis when someone asks for something. “We need a course on X.” “Ok, what tells you that you need this, and how will we know when it’s worked?” In a quick family convo, we established that this sort of un-analytical request is made all the time:

- “Why isn’t my plant blooming?” (It’s not the season.)

- “Fix this code.” (The input’s broken, not the code.)

- …

Yet, people actually don’t do this up-front analysis. Why? It’s harder, it takes more time, it slows things down, it costs more. Besides, we know what the problem is.

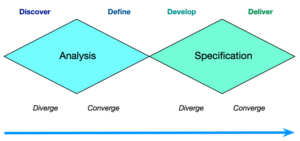

Except, we don’t know what the problem is. Too often, the question or request is making some assumptions about the state of the world that may not be true. It may be the right answer, but it may not. Ensuring that you’ve identified the problem correctly is the first part of the design process, and you should diverge on exploration before you converge on a solution. That’s the double diamond, where you first explore the problem, before you explore a solution.

Perhaps counter-intuitively, this is more efficient. Why? Because you’re not expending resources solving the wrong problem. Are you sure you’ve gotten it right? How do you know when to take the easier path? If you know the answer you need, you’re better equipped to choose the level of solution you need. If you don’t know the question, however, and make assumptions about the root cause, you can go off the rails and end up spending effort you didn’t need to.

Look, I live in the real world. I have to take shortcuts (heck, I’m lazy ;). And I do. However, I like to do that when I know the answer, and know that the outcome is good enough to meet the need. I’ll go for the easy answer, if I know it’ll solve the problem well enough. But I can’t if I don’t know the question or problem, and just assume. And we know what happens when we ass-u-me.

In our field of learning design (aka instructional design), it’s too frequently the case that folks don’t actually know the underlying learning
