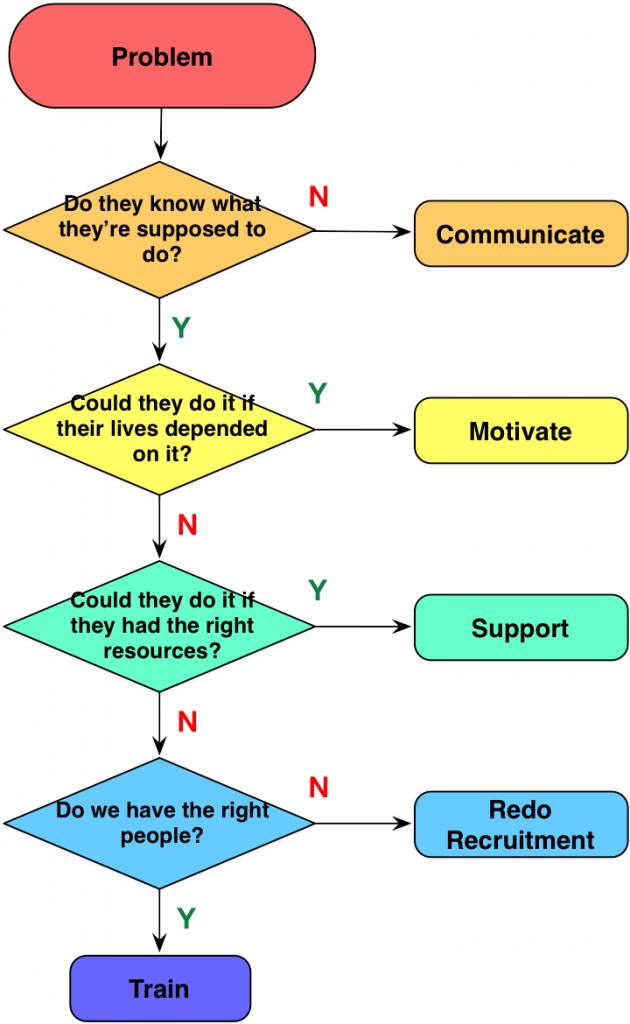

It appears that, too often, people are building courses when they don’t need to (or, more importantly, shouldn’t). I realize that there are pressures to make a course when one is requested, including expectations and familiarity, but really, you should be doing some initial thinking about what makes sense. So here’s a rough guide about the thinking you should do before you course.

You begin with a performance problem. Something’s not right: calls take too long, the sales success rate is too low, there are too many errors in manufacturing. So it must need training, right? Er, no. There’s this thing called ‘performance consulting’ that’s about identifying the gaps that could be preventing the desired outcomes, and not all of them are gaps that training addresses. So we need to triage, and see what’s broken and what’s the priority.

To start, people can simply not know what they’re supposed to do. That may seem obvious, but it can in fact be the case. Thus, there’s a need to communicate. Note that this, like all of these steps, is more complex than just ‘communicate’. There are issues about who needs to communicate, and when, and to whom, etc. But it’s not (at least initially) a training problem.

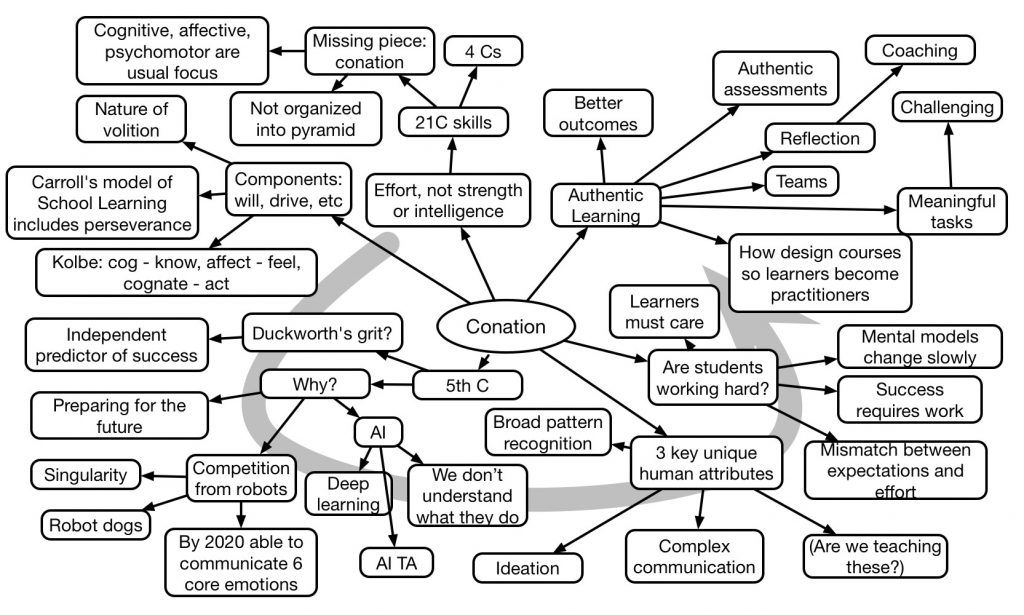

If they do know, and could do it but aren’t, the problem isn’t going to be solved by training. As someone once put it, if “they could do it if their life depended on it”, then there’s something else going on. If they’re not following safety procedures because the procedures are too onerous, a course on them isn’t going to fix it. You need to address their motivation.

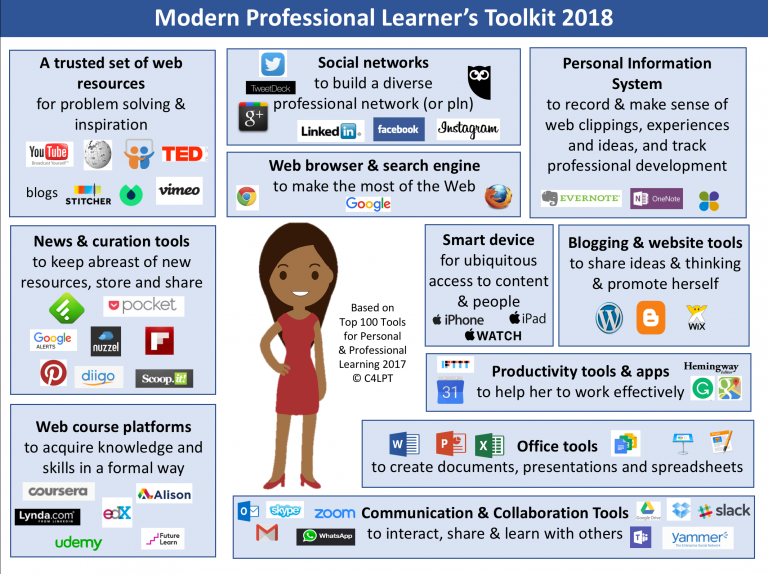

Now, if they can’t do it, then could they do it if they had the right tools, or more people, or more time? In other words, is it a resource problem? And, in one way I like to think about it: can we put the solution in the world, instead of in the head? Will lookup tables, checklists, step-by-step guides or videos solve the problem? Or even connections to other folks! (There are times when it doesn’t make sense to course or even job-aid; e.g. if it’s changing too fast, or too unique, or…)

And, of course, if you don’t have the right people, training still may not work. If they need to meet certain criteria, but don’t, training won’t solve it. Training can’t fix color-blindness or lack of height, for instance.

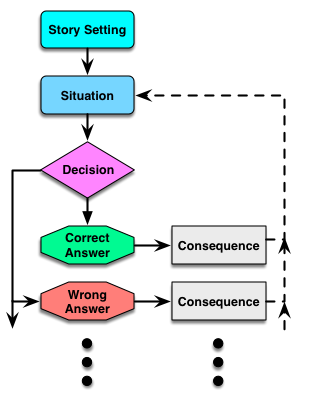

Finally, if the prior solutions won’t solve it, and there’s a serious skill gap, then it’s time for training. And not just knowledge dump, of course, but models and examples and meaningful (and spaced) practice.

Again, these are all abbreviated, and this is oversimplified. There’s more depth to be unpacked, so this is just a surface-level way to show that a course isn’t always the solution. But before you course, consider the other solutions. Please.