One of the things I’ve been doing lately is talking to folks who’ve got content and are thinking about the opportunities beyond books. This is a good thing, but I think it’s time to think even further, because, frankly, the ebook formats are still too limited.

It’s no longer about the content, it’s about the experience. Just putting your content onto the web or digital devices isn’t a learning solution, it’s an information solution. So I’m suggesting going beyond simply putting your content online, and starting to think about how to leverage what technology can do. It started with companion sites, with the digital images, video, audio, and interactives that accompany textbooks, but the opportunities go further.

We can now embed digital media within ebooks. Why ebooks, and not the web? I think it’s primarily about the ergonomics. I just find it challenging to read on screen. I want to curl up with a book and get comfortable.

However, we can’t quite do what I want with ebooks. Yes, we can put in richer images, digital audio, and video. The interactives are still a barrier: the ebook standards don’t yet support them, though they could. Apple’s expanded the ePub format with the ability to do quick knowledge checks (e.g. true/false or multiple choice questions). There’s nothing wrong with this, as far as it goes, but I want to go further.

I know of a few organizations, and I’m sure there are more, that are experimenting with a new specification for ePub that supports richer interaction: pretty much anything you can do with HTML5. This is cool, and potentially really important.

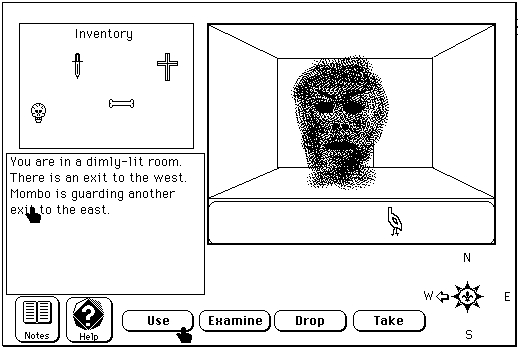

Let me give you a mental vision of what could be on tap. There’s an app for iOS and Android called Imaginary Range. It’s an interesting hybrid between a graphic novel and a game. You read through several pages of story, and then there’s an embedded game you play that’s tied to, and advances, the story.

Imagine putting that into play for learning: you read a graphic novel that’s about something interesting and/or important, and then there’s an embedded simulation game where you have to practice the skills. While there’s still the problem of a limited interpretation of what’s presented (à la the non-connectionist MOOCs), in well-defined domains these could be rich. Wrapping a dialog capability around the ebook, which is another interesting opportunity, only adds to the learning opportunity.

I’ll admit that I think this is not really mobile in the sense of running on a pocketable device; instead, it’s a tablet proposition. Still, I think there’s real value to be found.