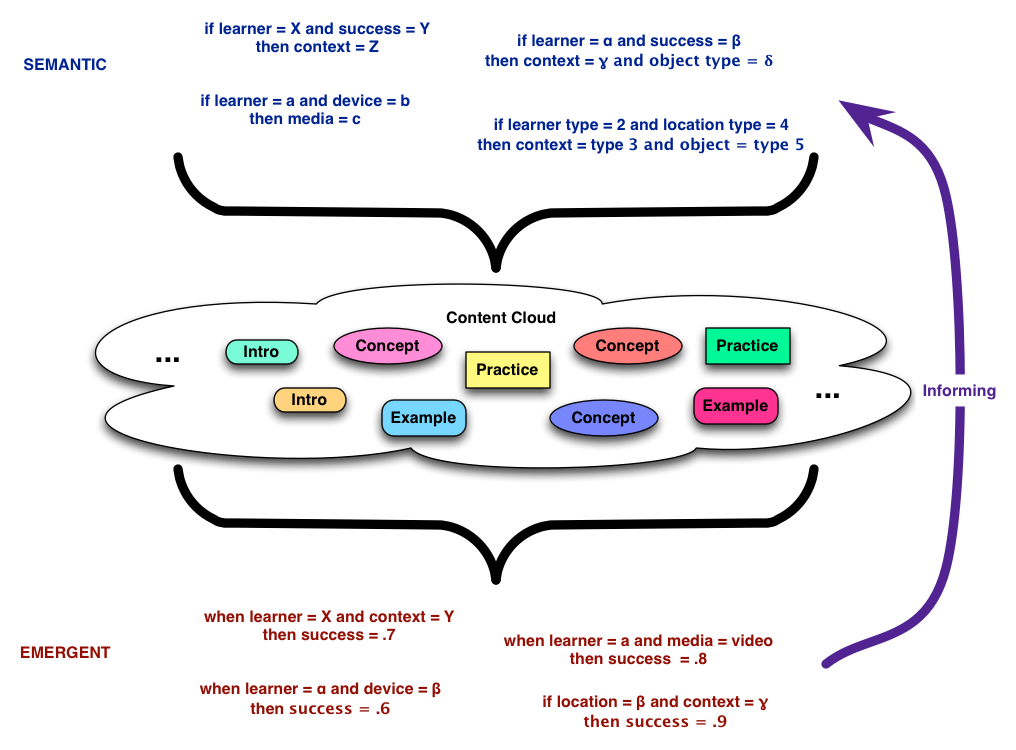

Last week I was on a panel at #DevLearn about the API previously known as Tin Can (now the Experience API, or xAPI), and some thoughts crystallized. Touted as the successor to SCORM, it’s ridiculously simple: statements of the form Subject Verb Object, essentially “I did this”. For example, ‘John Doe read Engaging Learning’, but also ‘Jane Doe took this picture’. And this has interesting implications.
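To make the Subject Verb Object idea concrete, here is a minimal sketch of the two example statements as plain Python dictionaries. The field names (`actor`, `verb`, `object`, `display`, `definition`) loosely follow the xAPI statement format, but the `make_statement` and `describe` helpers and every `example.com` IRI are hypothetical placeholders, not official verb or activity identifiers.

```python
import json


def make_statement(name, email, verb, obj_name):
    """Build a minimal actor-verb-object statement as a plain dict.

    Field names loosely follow the xAPI statement shape; the example.com
    IRIs are hypothetical placeholders, not registered identifiers.
    """
    slug = obj_name.lower().replace(" ", "-")
    return {
        "actor": {"objectType": "Agent", "name": name, "mbox": f"mailto:{email}"},
        "verb": {
            "id": f"http://example.com/verbs/{verb}",
            "display": {"en-US": verb},
        },
        "object": {
            "objectType": "Activity",
            "id": f"http://example.com/activities/{slug}",
            "definition": {"name": {"en-US": obj_name}},
        },
    }


def describe(statement):
    """Render a statement back as a readable 'Subject Verb Object' sentence."""
    return " ".join([
        statement["actor"]["name"],
        statement["verb"]["display"]["en-US"],
        statement["object"]["definition"]["name"]["en-US"],
    ])


read = make_statement("John Doe", "john@example.com", "read", "Engaging Learning")
photo = make_statement("Jane Doe", "jane@example.com", "took", "this picture")
print(describe(read))   # John Doe read Engaging Learning
print(describe(photo))  # Jane Doe took this picture
print(json.dumps(read, indent=2))
```

The same three-part shape covers both a learning event and an everyday action, which is exactly why the format is so general.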

First, the API itself is very simple, and while it can be useful on its own, it’ll be really useful once there are tools around it. It’s just a foundation to build on. There’ll need to be places to record these actions (Learning Record Stores, in xAPI terms), tools to pull together sequences of recommendations for learning paths, and more. You’ll want to build portfolios of what you’ve done (not just what content you’ve touched).
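As a rough illustration of a place to record actions and build a portfolio from them, here is a toy in-memory store. `RecordStore`, `Statement`, and `portfolio` are hypothetical names for this sketch only; a real Learning Record Store exposes its own HTTP interface, which this does not attempt to model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Statement:
    actor: str
    verb: str
    obj: str


class RecordStore:
    """A toy, in-memory stand-in for a statement store (hypothetical)."""

    def __init__(self):
        self._statements = []

    def record(self, statement):
        """Append one actor-verb-object statement."""
        self._statements.append(statement)

    def portfolio(self, actor):
        """Group everything one actor did by verb, not just content touched."""
        by_verb = defaultdict(list)
        for s in self._statements:
            if s.actor == actor:
                by_verb[s.verb].append(s.obj)
        return dict(by_verb)


store = RecordStore()
store.record(Statement("Jane Doe", "read", "Engaging Learning"))
store.record(Statement("Jane Doe", "took", "this picture"))
store.record(Statement("Jane Doe", "edited", "the project plan"))
store.record(Statement("John Doe", "read", "Engaging Learning"))
print(store.portfolio("Jane Doe"))
```

Grouping by verb is one simple design choice: it shows what someone *did* (took, edited) alongside what they consumed (read).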

But it’s about more than learning. These statements can also capture access to performance support resources, actions in social media systems, and more. This person touched that resource. That person edited this file. This other person commented.

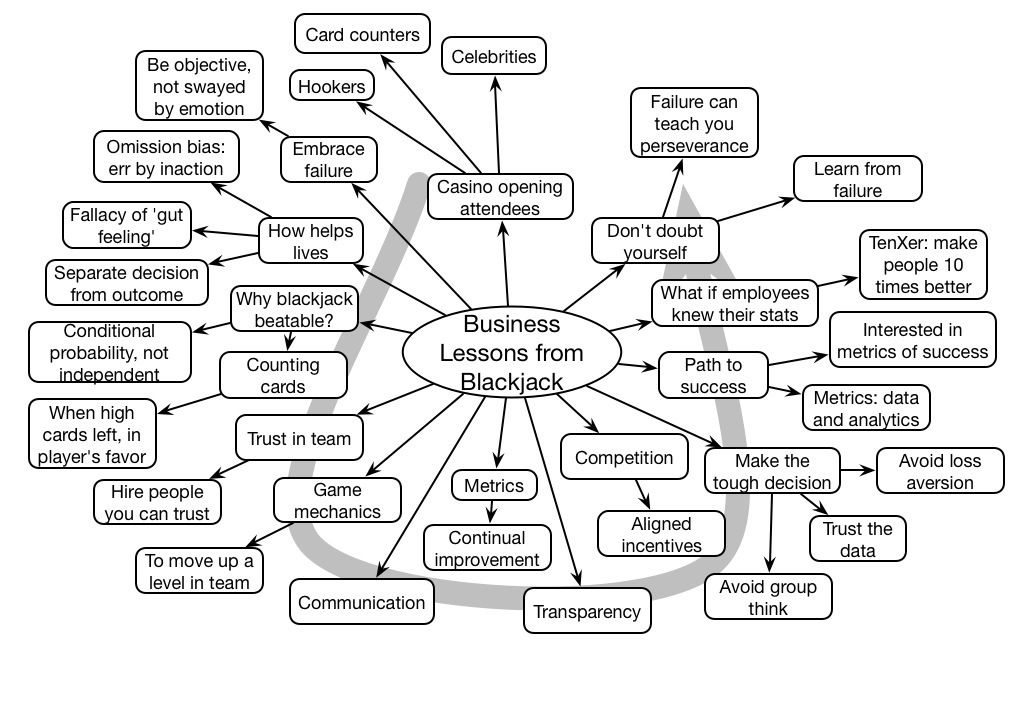

One big, interesting opportunity is to start mining these statements. We can look at evidence of what folks did and link it to good and bad outcomes. It’s a consistent basis for big data and analytics. It’s also a basis for customization: if the people who touched this resource were better able to solve problem X, other people with that problem perhaps should also touch it. If they’ve already tried X and Y, we can next recommend Z. Personalization/customization.
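One simple way to sketch the “people who touched this resource solved the problem” idea is a co-occurrence heuristic: for each resource, compute the share of people who touched it and went on to solve the problem, then suggest untried resources with a high share. The function names, the `min_rate` threshold, and the data below are hypothetical, and this measures association only, not whether a resource actually caused the success.

```python
from collections import defaultdict


def success_rates(touches, solved):
    """For each resource, the share of people who touched it and solved the problem.

    touches: iterable of (person, resource) pairs
    solved:  set of people who solved the problem
    """
    people_by_resource = defaultdict(set)
    for person, resource in touches:
        people_by_resource[resource].add(person)
    return {
        resource: len(people & solved) / len(people)
        for resource, people in people_by_resource.items()
    }


def recommend(person, touches, solved, min_rate=0.5):
    """Suggest untried resources whose past users mostly solved the problem."""
    rates = success_rates(touches, solved)
    tried = {r for p, r in touches if p == person}
    candidates = [
        (rate, r) for r, rate in rates.items()
        if r not in tried and rate >= min_rate
    ]
    # Highest success rate first; ties broken alphabetically for stable output.
    return [r for _, r in sorted(candidates, key=lambda c: (-c[0], c[1]))]


touches = [
    ("ann", "guide X"), ("ann", "video Y"), ("ann", "job aid Z"),
    ("bob", "guide X"), ("bob", "job aid Z"),
    ("cat", "guide X"), ("cat", "video Y"),
    ("dan", "guide X"), ("dan", "video Y"),
]
solved = {"ann", "bob"}
print(recommend("dan", touches, solved))  # ['job aid Z']
```

Here “dan” has already tried X and Y, and everyone who used Z solved the problem, so Z is what comes next.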

An audience member asked what they should take back to their org, and who needed to know what. My short recommendations:

Developers need to start thinking about instrumenting everything. Everything people touch should report out on their activity: mobile, systems, any technology touch. And then start aggregating this data. People can self-report, but the more it’s automated, the better.
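A minimal sketch of automated instrumentation, assuming each user-facing action is a function that takes the user and the resource as its first two arguments: a decorator emits an actor-verb-object record every time the action completes. The `instrumented` decorator, the `EMITTED` list (standing in for sending to a record store), and the two example actions are all hypothetical.

```python
import functools
from datetime import datetime, timezone

EMITTED = []  # stand-in for sending statements to a record store (hypothetical)


def instrumented(verb):
    """Decorator: record 'user <verb> resource' each time the action succeeds."""
    def decorate(action):
        @functools.wraps(action)
        def wrapper(user, resource, *args, **kwargs):
            result = action(user, resource, *args, **kwargs)
            # Only reached if the action did not raise, so failed actions
            # are not reported as completed.
            EMITTED.append({
                "actor": user,
                "verb": verb,
                "object": resource,
                "timestamp": datetime.now(timezone.utc).isoformat(),
            })
            return result
        return wrapper
    return decorate


@instrumented("opened")
def open_job_aid(user, resource):
    return f"showing {resource} to {user}"


@instrumented("edited")
def edit_file(user, resource, text):
    return len(text)


open_job_aid("Jane Doe", "troubleshooting checklist")
edit_file("John Doe", "project plan", "new draft")
for s in EMITTED:
    print(s["actor"], s["verb"], s["object"])
```

Because the reporting lives in the decorator, the person never has to self-report; the touch itself generates the record.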

Managers need to recognize that they’re going to have very interesting opportunities to start tracking and mining information as a basis for understanding what’s happening. Coupled with other rich models, such as of content (hence the need for a content strategy), tasks, and learners, we can start doing more things by rules.
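To show what “doing things by rules” over these models might look like, here is a small sketch combining a content model (which tasks each item supports), a task model (which tasks each role performs), and a learner model (role plus what their activity statements say they’ve touched). The classes, the `TASKS_BY_ROLE` table, the single rule in `suggest`, and all example data are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Content:
    title: str
    tasks: set  # content model: tasks this item supports


@dataclass
class Learner:
    name: str
    role: str
    touched: set = field(default_factory=set)  # titles from activity statements


# Task model: which tasks each role performs (hypothetical example data).
TASKS_BY_ROLE = {
    "field technician": {"diagnose fault", "file report"},
    "support agent": {"triage ticket"},
}


def suggest(learner, catalog):
    """Rule: suggest untouched content that supports a task in the learner's role."""
    needed = TASKS_BY_ROLE.get(learner.role, set())
    return [
        c.title for c in catalog
        if c.tasks & needed and c.title not in learner.touched
    ]


catalog = [
    Content("Fault diagnosis job aid", {"diagnose fault"}),
    Content("Report template walkthrough", {"file report"}),
    Content("Ticket triage video", {"triage ticket"}),
]
jane = Learner("Jane Doe", "field technician", touched={"Fault diagnosis job aid"})
print(suggest(jane, catalog))  # ['Report template walkthrough']
```

None of this works without the models: the activity data says what Jane touched, but only tagged content and a task model let a rule decide what she should touch next.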

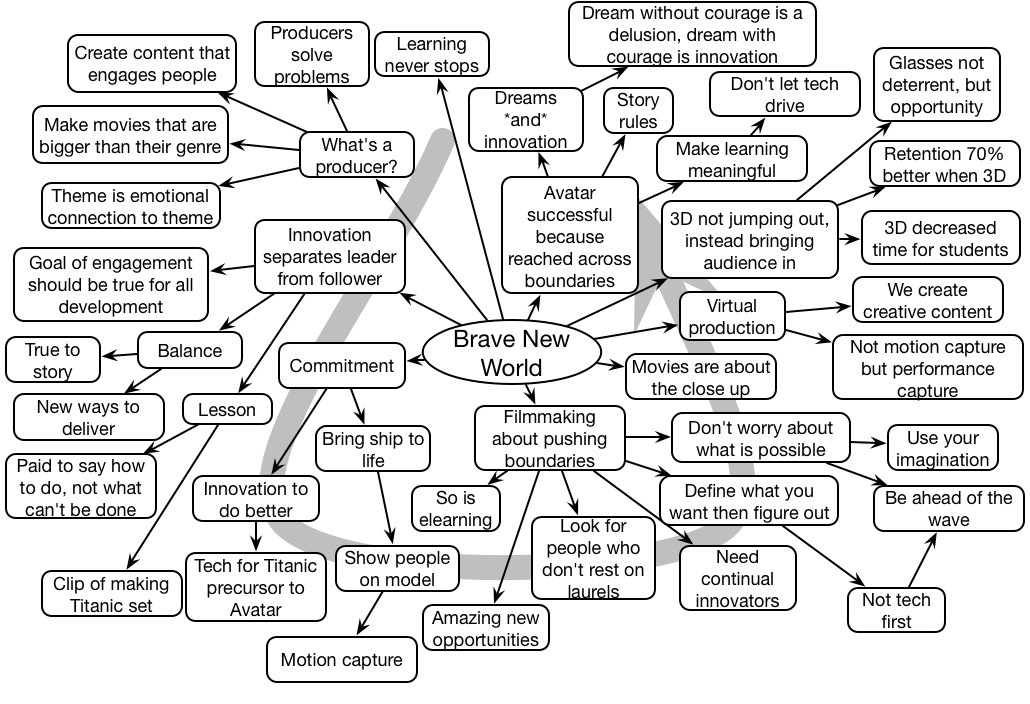

And designers need to realize, and then take advantage of, a richer suite of options for learning experiences. Have folks take a photo of an example of X. You can ask them to discuss Y. Have them collaborate to develop a Z. You could even send your learners out to do a flash mob ;).

Learning is not about content, it’s about experience, and now we have ways to talk about it and track it. It’s just a foundation, just a standard, just plumbing, just a start, but valuable for all that.