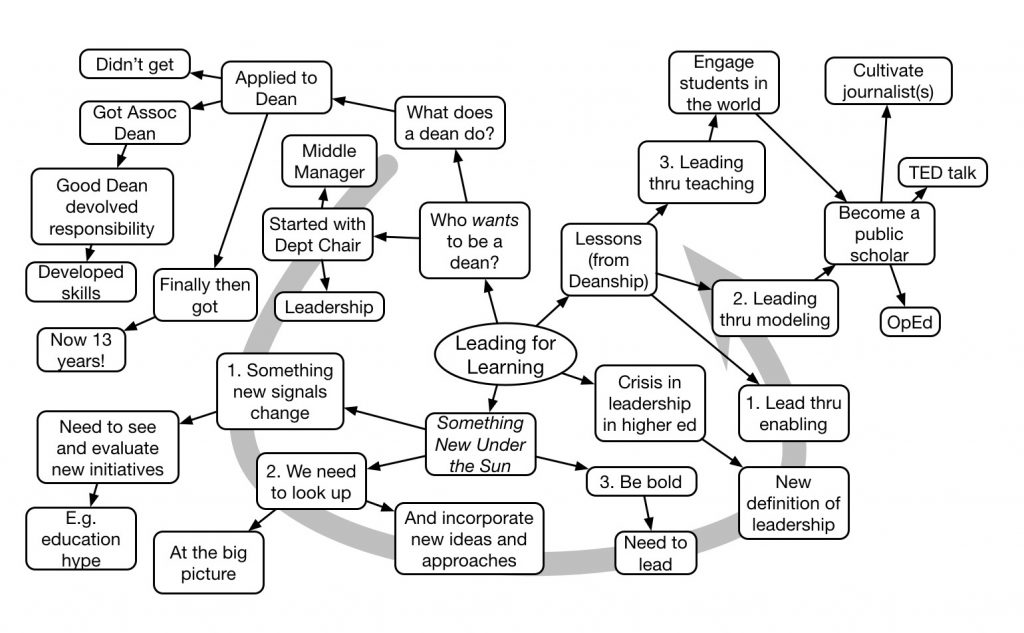

Marcy Driscoll kicked off the Association for Educational Communications and Technology’s annual conference with a thoughtful keynote on leadership. She drew on her experience as a dean to explore what leadership could and should mean.

Revisiting 70:20:10

Last week, the Debunker Club (led by Will Thalheimer) held a Twitter debate on 70:20:10 (the tweet stream can be downloaded if you’re curious). In ‘attendance’ were two of the major proponents of 70:20:10, Charles Jennings and Jos Arets. I joined Will as a moderator, but he did the heavy lifting of organizing the event and queueing up questions. The conversations yielded some insights, and prompted some reflections of my own.

To start, 70:20:10 is a framework: it’s not a specific ratio, but a guide to thinking about the whole picture of developing organizational solutions to performance problems. In their book on the topic, written with their colleague Vivian Heijnen, Jos & Charles lay out a whole methodology that encompasses 5 roles and 28 steps. The approach goes from a problem to a solution that incorporates tools, formal learning, coaching, and more.

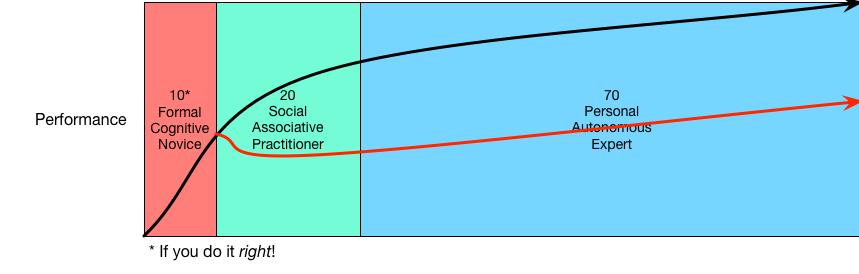

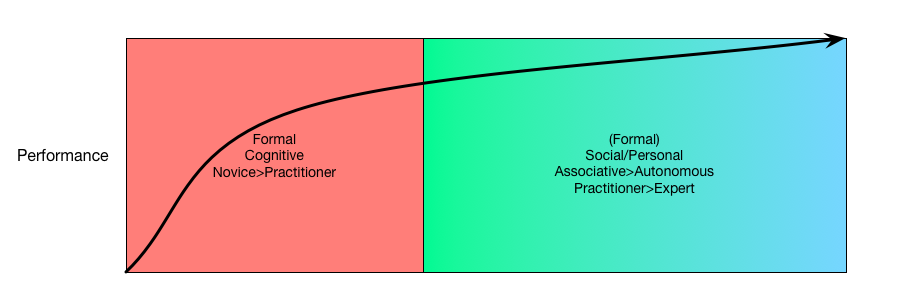

The numbers come from a study of leaders, who felt that 10% of what they learned to do their jobs came from formal learning, 20% came from working with others and coaching, and 70% came from trying things and reflecting on the outcomes. The framework’s role is to help people recognize this, and not leave the 70 and 20 to chance. The goal is to help people along the learning curve, rather than abandoning them after the ‘event’.

First, my impression was that a lot of people like that the 70:20:10 framework provides a push beyond the event model of ‘the course’. Also, a number of people struggle with the numbers as a brand, because they feel that the numbers are misleading. And some folks clearly believe that good instructional design should include the social and the activity, so the framework is a distraction. A colleague felt that some also believe formal learning is a waste of time, but I don’t think that many truly ignore the 10; they just want it in the proper perspective (and I could be wrong).

Now, there are times when the ratio changes. In roles where the consequences of failure are drastic (read: aerospace, medical, military), you tend to have a lot more formal learning, and it can take people quite a way up the learning curve. Ideally, we’d do this for every situation, but in real life we have to strike a balance. If we do the 10 right, and then similarly ensure good practices around the 20 and the 70, we’ll get people up the curve.

Another point, for me, is that 70:20:10 not only provides a push towards thinking of the whole picture, but, like Kirkpatrick (and perhaps better), it also serves as a design tool. You should start from what the situation looks like at the end, figure out what can be in the world and what has to be in the head, and then work backwards. You then design your tools, then your training, and 70:20:10 suggests including coaching, etc. But starting with the 70 is one of the key messages.

So, I like the realization of 70:20:10 (though, to avoid typing all those redundant zeros and colons, I often refer to it as 721 ;): the focus on designing the full solution, including tools and coaching and more. I don’t see 70:20:10 as the full solution, as the elements of continual innovation and a learning culture are separate, but it’s a good solution for the performance part of the picture, and for the specific parts of development.

Rules for AI

After my presentation in Shanghai on AI for L&D, a number of conversations ensued, and they led to some reflections. I’m boiling them down here to a few rules that seem to make sense going forward.

1. Don’t worry about AI overlords. At least, not yet ;). Rodney Brooks wrote a really nice article about why we might be fearing AI, and why we shouldn’t. In it, he cited Amara’s Law: we tend to overestimate the effect of a technology in the short term, and underestimate its impact in the long term. I think we’re in the short term of AI. And while it’s easy (and sensible, for humans) to extrapolate from smart behavior in a limited domain to similar behavior in another, it turns out to be hard to get computers to do so.

2. Do be concerned about how AI is being used. AI can be used for ill or good, and we should be concerned about the human impact. I realize that a focus on short-term returns might suggest replacing people when possible. And anything rote enough possibly should be replaced, since it’s a sad use of human ability. Still, there are strong reasons to consider the impact on the people being affected, not least humanitarian, but also practical. Which leads to:

3. Don’t have AI without human oversight (at least in most cases). As stated above in 1, AI doesn’t generalize well. While it can be trained to work within the scope you describe, it will struggle at the boundary conditions, and with any ambiguous or unique situations. It may well make a better judgment in those cases, but it also may not. In most cases, it will be best to have an external review process for all decisions being made, or at least the ones at the periphery. Because:

4. Your AI is only as good as its data set and/or its algorithms. Much of machine learning essentially runs on historical datasets, and historical datasets can have historical biases in them. For instance, if you were to build a career counselor based upon many examples of what’s been done across schools, you might find that women were being steered away from math-intensive careers. Similarly, if you’re using a mismatched algorithm (as often happens in statistics, for example), you could be biasing your results.

5. Design as if AI means Augmented Intelligence, not Artificial Intelligence (perhaps an extension of 3). There are things humans do well, and things that computers do well. AI is an attempt to address the intersection, but if our goal is (as it should be) to get the best outcome, it’s likely to be a hybrid of the two. Yes, automate what can and should be automated, but first consider what the best total solution would be, and then, if it’s ok to just use the AI, do so. But don’t assume so.

6. AI on top of a bad system is a bad system. This is, perhaps, a corollary to 4, but it goes further. For instance, if you create a really intriguing simulated avatar for practicing soft skills, but you’re still not providing a good model to guide performance, or good examples, you’re either requiring considerably more practice or risking an inappropriate emergent model. AI is not a panacea, but instead a tool in designing solutions (see 5). If the rest of the system has flaws, so will the resulting solution.

This is by no means a full set, nor a completely independent one. But it does reflect some principles that emerged from my interactions around some applications and discussions with people. I welcome your extensions, amendments, or even contrary views!

Addressing Changes

Yesterday, I listed some of the major changes that L&D needs to acknowledge. What we need now is to look at the top steps that need to be taken. As serious practitioners in a potentially valuable field, we need to adapt to the changing environment as much as we need to assist our charges to do so. So what’s involved?

We need to get a grasp on technology affordances. We don’t just need to know that the latest technology exists, whether AI, AR, or VR. Instead, we have to understand what they mean in the context of our brains. What key capabilities do they bring? Can VR go beyond entertainment to help us learn better? How can AI partner with us? If we can make practical use of AR, what would we do with it?

In conjunction, we need to understand the realities about us. We need to take ownership and have a suitable background in how people really think, work, and learn. Further, we need to recognize that they’re all tied together, not separate things. So, for instance, we learn as we work, we think as we learn, etc.

For example, we need to understand situated and distributed cognition. That is, we need to grasp that we’re not formal logical thinkers, but instead very context dependent, and that our thinking is distributed across our tools. As a consequence, we need to design solutions that recognize our individual situations and leverage technology as an augment. So we want to design human/computer system solutions to problems, not just human or system solutions.

We also need to understand cultural elements. We work better when we are given meaningful work, the freedom to pursue its goals, and the support necessary to succeed. This isn’t micromanagement; instead, it’s leadership and coaching. We also need an environment where it’s safe, even expected, to experiment and to make mistakes.

We also need to understand that we work better (read: produce better results) when we work together in particular ways. That means allowing individual thought first, and then pooling those ideas. And we need to show our work and the underlying thinking. Moreover, again, it has to be safe to do so!

And, these are all tied together into a systemic approach! It can’t be piecemeal, because working together and out loud can’t be divorced from the technology used to enable these capabilities. And giving people meaningful work and not letting them work together, or vice-versa, just won’t achieve the necessary critical mass.

Finally, we also need to do this in alignment with the business. And, let’s be clear, in ways that can be measured! We need to understand the critical performance needs of the organization, and demonstrate that we’re impacting them in the ways above.

This can be done, and it will be the hallmark of successful organizations. We’re already seeing a wide variety of converging evidence that these changes lead to success. The question is, are you going to lead your organization forward into the future, or keep your head down and do what you’ve always done?

Acknowledging Changes

A serious number of changes are affecting organizations. We’re seeing changes in the information flow, in technology, and in what we know about ourselves. Importantly, these are things that L&D needs to acknowledge and respond to. What are these changes?

It’s old news that things are happening faster. We’re being overwhelmed with information, and that rate is accelerating. On the other hand, our tools to manage the information flow are also advancing.

Which is the second topic. We’re getting more powerful technology. We can create systems that do tasks that used to be limited to humans. They can also partner with us, providing information based upon who we are, what we’re doing, and what else is going on.

And there are increasing demands for accountability (and transparency). Your actions should be justified. What are you doing, why, and what effect is it having? If you can’t answer these questions, you’re going to be looking for a job.

Most importantly, we’ve learned quite a bit about ourselves that is contrary to many pre-existing beliefs. Specifically ones that influence organizational approaches. Our myths about how we think, work, and learn are holding us back from achieving optimal outcomes.

For one, there’s a persistent belief that our thinking is in our heads. Yet research shows that our thinking is distributed across our tools. We use external representations to capture at least part of our thinking, and access information that we can’t keep in our heads effectively. Yet we seem to depend on courses to put it in the head instead of tools to put it in the world.

Our thinking is also distributed across others. “You’re no longer what you know, but who you know” is a new mantra. So is “the room is smarter than the smartest person in the room” (with the caveat: if you manage the process right ;). Informal and social learning is the work. Yet we still act as if we believe that people should solve problems independently.

And we also act as if how we learn is by information dump. Add a quiz, so we know they can recognize the right answer if they see it, and they’ve learned! Er, no. Science tells us that this is perhaps the worst thing we could do to facilitate learning.

In short, our practices are out of date. We’re using patch-it (or ignore-it) solutions to systemic issues. We address things in isolation, as if they’re not all connected. It’s time to get on top of what’s known, and then act accordingly. Are you ready to join the 21st century?

Stay Curious

One of my ongoing recommendations to people grew out of a toss-off line, playing off an advertisement. Someone asked about a strategy for continuing to learn (if memory serves), and I quipped “stay curious, my friends”. However, as I ponder it, I think more and more that such an approach is key.

I was thinking of this trait the other day as “intellectual restlessness”. What I’m talking about is being intrigued by things you don’t understand, whether they’ve persisted or recently crossed your awareness, and pursuing them. It’s not just saying “how interesting”, but recognizing connections, and pondering how they could change what you do. Even to the point of actually changing!

It would also include pointing out interesting things to other people who would benefit. This doesn’t always have to happen, but in the spirit of cooperation (in the Jarche sense), we could and should contribute and curate when we can. And, ideally, it includes leaving trails of your explorations that others can benefit from. Writings, diagrams, videos, what have you: they help you as well as others.

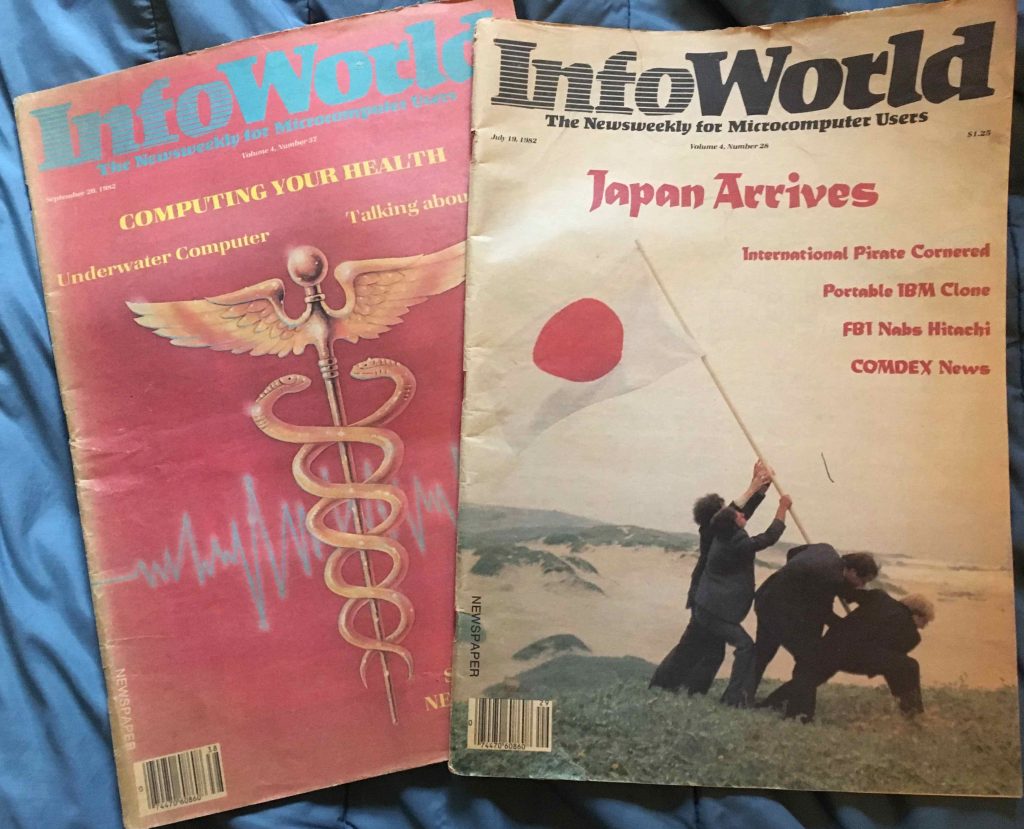

I was reminiscing that more than 30 years ago, on top of my job designing educational computer games, I was already curious. I still have copies of the magazines containing reviews I did (one hardware, one software), as well as a journal article based upon undergraduate research I was fortunate to participate in.

And that persistence in curiosity has led to a trail of artefacts. You may have come across the books, book chapters, articles, presentations, etc. And, of course, this blog for the past decade and more. (May it continue!) However, I’m not here to tout my wares, but instead to point to the benefit of being curious.

As things change faster, a continuing interest is what provides an ongoing ability to adapt. All the news about the ongoing changes in jobs and work isn’t likely to lessen. Staying curious benefits you, your colleagues and friends, and I reckon society in general. You want to look at many sources of information, track tangential fields, and be open to new ideas.

This isn’t just your choice, of course; ideally, your organization is supportive. These lateral inputs are a component of innovation, as is time to allow for serendipity and incubation. Orgs that want to be agile will need these capabilities as well. I suppose organizations need to stay curious, too!

Radical Coherency

Tied to my last post about insufficient approaches, I was thinking again about the Coherent Organization. Coherency is powerful, but it could be a limiting metaphor. So I want to explore it a bit further.

First, coherency is powerful. Lasers, for example, are just light, the same as comes from your lightbulbs, except that the waves are aligned and focused. When they’re at the same frequency, in the same direction, suddenly you can cut steel!

However, an easy interpretation is that once you get this right, it’s sufficient. But that’s no longer the case in organizations. As things change, you need coherency and agility. How do you get both?

I’m suggesting that coherency has to be on many dimensions. So you have coherency with the organization’s purpose, but also people coherent with each other, with the customers, and with best principles. And that latter is important, as best practices won’t transfer unless they’re abstracted and recontextualized.

So what I’m arguing for is a more radical coherency, a coherency that’s in synchrony from an ecosystem perspective. Where people are communicating and collaborating in ways that apply best principles, integrating them into an aligned whole that’s greater than the sum of the parts.

This is a learning organization, but one that’s integrating many disparate elements. That, I think, is a desirable and achievable goal, but it’s more than one program. It’s a campaign that needs an initial focus, and a plan to successfully integrate it into practice first, and then to scale it to both shift practice and culture. It’s non-trivial, but I think it’s more than worthwhile: it’s necessary. What do you think?

Simple Insufficiency

As things get more complex, organizations are looking to get more agile. And they’re looking at a wide variety of approaches in different areas: agile, digital transformation, design thinking, and more. And, by and large, these are all good things. And all of them are quite simply insufficient. Why do I suggest this insufficiency? Because the solution is complex.

Organizations are complex organisms. If you try to address them with simple solutions, you will perturb them, but the results will not be as expected. Whether or not you believe the 70% failure rate of org change initiatives, the fact is that many or most organizational change initiatives don’t achieve the desired outcomes. As we explore this more, we understand that it requires a ‘ground war, not an air war’, as Sutton & Rao put it in Scaling Up Excellence. And I’ll posit that there’s more.

This isn’t unknown; regardless of label, the folks who are responsible for such initiatives typically argue that it’s a process. Yet orgs still look for the simple packaged program that will turn things around. And while that’s understandable, it’s decreasingly likely to work. It takes a systems approach.

And what I haven’t seen (and I’m willing to hear of one) is a comprehensive program that addresses, together, the full suite of skills and culture that constitute a coherent organization. And that’s a non-trivial compendium of elements. There are the cultural elements, and skills, and tools, and more. PKM, WoL (SyW), 70:20:10, teaming, collaborating and communicating, etc., are all elements, but they need to be tied together.

My point, I guess, is that there needs to be an entry point, but also a plan to develop the full suite of skills and move the culture. And, like most meta-learning, it needs to be done around something. So you need a concrete focus to start: some problem you’re working on that you’ll tackle in the new way, practicing the processes and developing the competencies and culture as you go. For the org, it should be a necessary new extension to the organization’s competencies. For L&D, it should first be applied to some L&D project.

In both cases it needs a plan and support for acquisition, including a realistic time frame for starting, and then spreading. It’s not simple, but it’s necessary. Anything else, I fear, is truly insufficient.

Mundanities

This post is late, as my life has been a little less reflective, and a little more filled with some mundane issues. There are some changes here around the Quinnstitute, and they take bandwidth. Here’s a small update on these mundanities, with some lessons:

First, I moved office from the side of the house back to the front. My son had occupied it, but he’s settled into an apartment for college, and I prefer the view out to the street (to keep an eye on the neighborhood). Of course, this entailed some changes:

My ergonomic chair stopped working, and it took several days to a) find someone who’d repair it, and b) get it there, wait for it to be fixed, and get it back. It’s worth it (a lot less than replacing it), and ergonomics is important.

Speaking of which, I could also now get a standup desk, or in my case one of those convertible desks that lets you raise and lower your workspace. I’ve been wanting one since the research came out on the problems with sitting. We’d previously constructed a custom desktop (with legs from Ikea!) for the odd-shaped room, so it was desirable to just put it on top. So far, so good. Strongly recommended.

Also bought a used bookshelf (rather than move the one from the old office). Real wood, real heavy. Used those ‘forearm forklift’ straps to get it in. They work! And, this being earthquake country, had to strap it to the wall. Still to come: filling with books.

At the same time, fed up with all the companies that provide internet and cable television, we decided to change providers. (We changed mobile providers back in January.) As I noted previously, companies use policies to their advantage. One approach is that they sell you a two-year package, but then there’s no notification when the time’s up and the rate jumps. And you can’t find a provider that simply offers a low, steady rate (I don’t even mind if it’s higher than the bonus deal). Everyone uses this practice. Sigh.

As I said, I can’t find anyone better, but just decided to change. That involved conversations, and research, and installation time, and turning off the old systems. At least we’re getting a) a lower rate, b) nicer DVR, and c) faster internet. For the time being. While the new provider promised to ping me before the plan runs out, the old provider says they can’t. See what I mean? Regardless, I’ve got a trigger before it expires to sign up anew. Or change again. That’s the lesson on this one.

And of course there are some conversations about upcoming presentations. I was away last week presenting, and have one coming up next month (ATD China Summit; if you’re near Shanghai, say hello) and several in November at AECT in Jacksonville. You’ve seen some of the AI reflections; more are likely to come on the new topics.

And there’s been some background work. Reading a couple of books, and working on two projects. Stay tuned for a couple of new things early next year.

The lesson, of course, is that finding time to reflect while you’re executing on mundanities is more challenging, but still a valuable investment. I fight to make time, and I hope you do too!

Organizational terms

Listening to a talk last week led me to ponder the different terms for what it is I lobby for. The goal is to make organizations accomplish their goals, and to continue to be able to do so. In the course of my inquiry, I explored and uncovered several different ‘organizational’ terms. I thought I should lay them out here for my (and your) thoughts.

For one, it seemed to be about organizational effectiveness. That is, the goal is to make organizations not just efficient, but capable of optimal levels of performance. When you look at the Wikipedia definition, you find that it’s about “achieving the outcomes the organization intends to produce”. This is done through alignment, increasing tradeoffs, and facilitating capacity building. The definition also discusses improvements in decision making, learning, group work, and tapping into the structures of self-organizing and adaptive systems, all of which sound right.

Interestingly, most of the discussion seems to focus on not-for-profit organizations. While I agree on their importance, and have done considerable work with such organizations, I guess I’d like to see a broader focus. Also, and this is purely my subjective opinion, the newer thoughts seem grafted on, and the core still seems to be about producing good numbers. Any time you use the phrase ‘human capital’, I am leery.

Organizational engineering is a phrase that popped to mind (similar to learning engineering). Here, Wikipedia defines it as an offshoot of org development, with a focus on information processing. And, coming from cognitive psychology, that sounds good, with a caveat: the reality is, we’re far from ideal thinkers. The definition also talks about ‘styles’, which are a problem all on their own. Overall, this appears to be more a proprietary suite of approaches under a label. While it uses nice-sounding terms, the reality (again, my inferences here) is that it may be designed for an audience that doesn’t exist.

The final candidate is organizational development. Here the definition touts “implementing effective change”. The field is defined as interdisciplinary and drawing on psych, sociology, and more. In addition to systems thinking and decision-making, there’s an emphasis on organizational learning and on coaching, so it appears more human-focused. The core values also talk about human beings being valued for themselves, not as resources, and looking at the complex picture. Overall this approach resonates with me more, not just philosophically, but pragmatically.

As I look at what’s emerging from the scientific study of people and organizations, as summed up in a variety of books I’ve touted here, there are some very clear lessons. For one, people respond when you treat them as meaningful parts of a worthwhile endeavor. When you value people’s input and trust them to apply their talents to the goals, things get done. Caring enough to develop them in ways that are supportive, not punitive, and that serve not just your goals but theirs too, retains their interest and commitment. And when you provide them with an environment to succeed and improve, you get the best organizational outcomes.

There’s more about how to get started. Small steps, such as working in a small group (*cough* L&D? *cough* ;), and developing the practices and the infrastructure, then spreading, has been shown to be better than a top-down initiative. Experimenting and reviewing the outcomes, and continually tweaking likewise. Ensuring that it’s coaching, not ‘managing’ (managers are the primary reason people leave companies). Etc.

All this shouldn’t be a surprise, but it’s not trivial to do; it takes persistence. And it flies in the face of much of management and HR practice. I don’t really care what we label it; I just want to find a way to talk about things that makes it easy for people to know what I’m talking about. There are goals to achieve, so my main question is how do we get there? Anyone want to get started?