Workflow learning is one of the new buzzphrases. The notion is that you deliver learning to the point of need, instead of taking people away from the workflow. And I’m a fan. But it’s not as easy as it sounds! Context is a critical issue in making this work, and that’s non-trivial.

When we create learning experiences, typically we do (or should) create an artificial context for learners to practice in. And this makes sense when the performance has high consequences. However, if people are in the workflow, there is a context already. Could we leverage that context for learning instead of creating one? When would this make sense?

I’d suggest that there are two times workflow learning makes sense. For one, if the performers aren’t novices, this becomes an opportunity to provide learning at the point of need to elaborate and extend what they know. Say, refining knowledge on sales, marketing, or product when touching one of them. For another, it would make sense if the consequences aren’t high and repair is easy. Say, sending on a workpiece that will get checked anyways.

Of course, we could just do performance support, and not worry about the learning, but we can do that and support learning as well. So, having an additional bit of learning content at the right time, whether alone or in conjunction with performance support, is a ‘good thing’. The difficulties come when we get down to specifics.

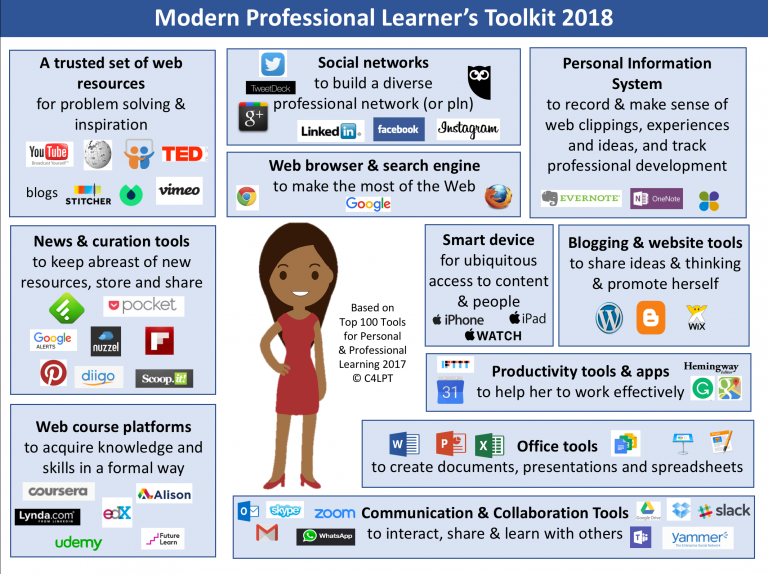

Specifically, how do we match the right content with the task? There are several ways. For one, it can just be pull. Here the individual asks for some additional help and/or learning. This isn’t completely trivial either, because you have to have a search mechanism that makes it easy for the performer to get the right stuff. This means federated search, vocabulary control, and more. Nothing you shouldn’t already be worrying about for pull learning anyways, but for the record.

Second, you could do push. Here it gets more dicey. One way is to have content tied to specific instances. This can be done by hand, as some tools have made possible. That is, you instrument content with help where you find, or think, it could be needed. The other way is to be smart about the context.

And this is where it gets complicated. For such workflow learning to work, you really want to leverage the context, so you need to be able to identify the context. How do you know what they’re doing? Then you need to map that context to content. You could use some signal (e.g., xAPI) that tells you when someone touches something. Then you could write rules that map that touch to the associated content. The mapping might even be by description, not hardwired, so the system’s flexible. For instance, it might change the content depending on how many times and how recently this person has done this task. This is all just good learning engineering, but the details matter.
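To make that mapping concrete, here's a minimal sketch, and only a sketch. It assumes a simplified, xAPI-like record of who touched what and when (not the actual xAPI statement format), and the `Touch`, `Rule`, and `select_content` names, the rule fields, and the content ids are all hypothetical. Each rule describes a context (which task, how many prior touches, how long since the last one) rather than hardwiring a single link, and the first rule that fits wins.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Touch:
    """Simplified, xAPI-like record: who touched which thing, and when."""
    actor: str
    activity: str
    timestamp: datetime


@dataclass
class Rule:
    """Describes a context and the content to offer in it (all hypothetical)."""
    activity: str
    content_id: str
    max_prior_uses: Optional[int] = None       # None: any number of prior uses
    min_days_since_last: Optional[int] = None  # None: recency is ignored


def select_content(touch: Touch, history: List[Touch],
                   rules: List[Rule]) -> Optional[str]:
    """Return the content id of the first rule matching this touch, else None."""
    # Earlier touches of the same task by the same person.
    prior = [t for t in history
             if t.actor == touch.actor
             and t.activity == touch.activity
             and t.timestamp < touch.timestamp]
    count = len(prior)
    last = max((t.timestamp for t in prior), default=None)
    days_since = (touch.timestamp - last).days if last is not None else None

    for rule in rules:
        if rule.activity != touch.activity:
            continue
        if rule.max_prior_uses is not None and count > rule.max_prior_uses:
            continue
        if rule.min_days_since_last is not None:
            # A recency rule only applies when there is a previous touch.
            if days_since is None or days_since < rule.min_days_since_last:
                continue
        return rule.content_id
    return None


# Ordered from most to least specific; the first match wins.
RULES = [
    Rule("quote-tool", "quote-intro", max_prior_uses=0),
    Rule("quote-tool", "quote-refresher", min_days_since_last=90),
    Rule("quote-tool", "quote-tip", max_prior_uses=5),
]
```

With these rules, a first-timer would get the intro, someone returning after three months would get the refresher, someone still fairly new would get a tip, and a practiced regular would get nothing pushed at all. Because the rules are data, you can change what gets offered without touching the matching logic.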

Making workflow learning work is a move towards a more powerful performance ecosystem and workforce, but it requires some backend effort. Not surprising, but worth being clear on.