As luck would have it, I found out about an event on Storytelling Across Media being run in the city, and attended a couple of the panels: half of one on interactive design and Telltale Games, one on story and games, and one on story and VR. There were interesting quotes from each about story, games, and VR that prompted reflection, and I thought I’d share my thoughts with you.

As luck would have it, I found out about an event on Storytelling Across Media being run in the city, and attended a couple of the panels: half of one on interactive design and Telltale Games, one on story and games, and one on story and VR. There were interesting quotes from each about story, games, and VR that prompted reflection, and I thought I’d share my thoughts with you.

Story and Games

The first quote that struck home was “nonlinear storytelling strikes a balance between narrative and choice”. This is the challenge that I and I think all game designers struggle with. So, I subsequently asked “How do you integrate storytelling with experience design?” The panelists acknowledged that this was the ongoing challenge. Another comment was that “stories are created in your imagination”. That’s key, I think, to create experiences that the player will end up writing as a story they can tell.

I found myself thinking about story machines versus experience engines. It appears to me that, ala Sid Maier’s “a good game is a series of interesting decisions”, that it’s all about the decisions you make. It’s easier to tell a good story when you put a game ‘on rails’; it’s harder when you want to have an open world and still ramp up the tension across the board. Having rules and timers give you the opportunity. For serious games, however, not commercial ones, I reckon it’s more ok for the story to be somewhat linear.

Another interesting comment was about how things are going transmedia. An issue that emerged was the business of transmedia, how you might start with a comic to build interest and revenue to fund adding in a game, or a movie. Telling stories across media is an interesting challenge, and could have real opportunity for learning. I have been a fan of Andrea Phillips Transmedia Storytelling and Koreen Pagano’s Immersive Learning, which I think give good clues about how this might go. I’m also thinking about the movie The Game (Michael Douglas & Sean Penn), and how it’s a great example of an alternate reality game. I’d love to do something like that, but serious. We did a demo once about sales that captured some of the opportunity, but…

Also, I’ve looked at many instances of experience design: movies, theatre, amusement parks, games, etc. And I’ve advocated that those interested in making experience engaging, particularly learning, should similarly explore this. It’s hard work, you know ;). However, one of the panelists commented on ‘circus design’. That’s something I had never thought to explore, so it’s now on my ‘todo’ list!

There were also several mentions of a theatre experience in New York called Sleep No More. It involves two intersecting stories: Macbeth and a lady looking for someone. There’s no dialog, and it plays out across several venues. The interesting thing is that you, as an audience member, choose where to go, who to follow, and what to watch. Now I need to find a way to experience this! (Wish I’d heard about it before my keynote there in June.)

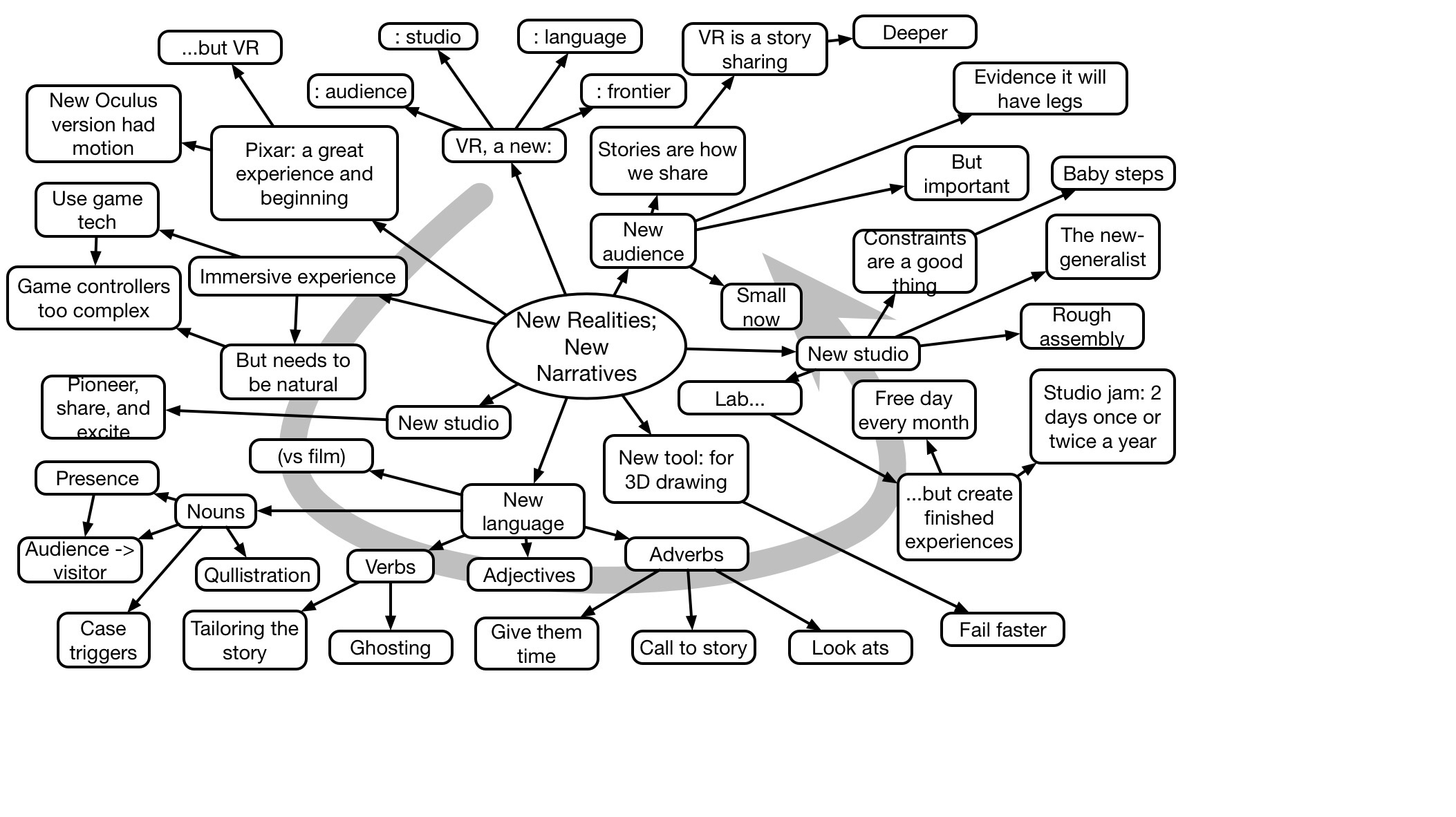

VR

The other theme was VR, and there were some very interesting comments made. It was repeatedly made clear by the practitioners that this was a field still very much in development. The tools and technologies had become good and cheap enough to allow tinkering and exploration, but the business models and viable experiences were still being explored.

One quote that was interesting was a response to the issue of what the ‘frame is’. In computer games, the frame is the screen. But in VR there’s no ‘screen’, you’re surrounded. A response to this was “the player is the ‘frame’ in VR”. That’s an interesting perspective. I might reframe it as “the player’s attention is the ‘frame’ in the game”, and manipulating that may be the key. To ponder.

Another interesting comment was “proximity breeds empathy”. I was reminded of the phrase “familiarity breeds contempt”, but I can see that an experiential approach may help generate sympathy and comprehension. Can you actually share someone else’s experience? Certainly, immersion has yielded concrete learning improvements, and successful behavioral interventions.

Which brings up a response on the question of where the future of VR is (that seemed to be reflected by the other panelists) is that shared VR is the future. Clearly, social has big benefits for learning, and can be the basis of strong emotions (sometimes negative!)

There are clearly times when VR has unique and valuable advantages for learning, though I continue to think that AR may provide the greater overall opportunity, when it’s done right. It might be like the difference between courses and mentoring. That is, VR to make a step change, and the AR for continual development. Where do ARGs fit in? Perhaps more for developing the ability to deal with the unexpected?

One of the panelists mentioned Magic Leap, and I was reminded that that type of experience will be where we can really get opportunities for transformative experiences. I think that’s where Google Glass was going, and they’re right to hold off and get it right, but when we can really start annotating the world, combining it with ARGs, there will be real potential. We can start designing now, but it’ll definitely be some time before tools and technologies hit the ‘experimentation’ phase VR has reached.

Lots of fodder for thinking!