In a document shared with me recently, there was this statement: “The assumption that there will always be a managed learning function.” I find that interesting to contemplate. If we ever get better at developing self-learning skills in school or university (ideally the former), could we eliminate the need for organizational courses? That is, will we still need L&D?

The notion is that once folks are better at self-learning, the reason for organized courses could fade. If schools start developing learn-to-learn skills, wouldn’t everyone be able to take responsibility for their own learning? Or, at the least, could the role of L&D ramp down?

David Geary has proposed a distinction between evolutionarily different types of learning. As I understand it, the idea is that we’ve evolved to learn certain types of things. The flip side is that those don’t include human-made constructs like mathematics, economics, and such. Thus, learning to learn first has to develop abilities in these newer domains. But that could happen.

And then there’s the notion of bootstrapping in a new domain. We all start as novices in new domains, and those may involve proprietary organizational material. The domain’s likely built upon some predecessor concepts that may be familiar, but can a motivated and effective self-learner get up to speed in a reasonable amount of time, or will they benefit from a learning experience?

If, of course, we extend L&D to support informal learning (and I suggest we should), there’s another opportunity. Until schools also develop effective communication and collaboration skills, L&D would be useful. There’s the further issue of creating a learning culture, too, where people share and cooperate. The predisposition could and should again be developed in schools, but until then…

And one final opportunity is facilitating communities of practice to take responsibility for development paths, resource curation and creation, and documenting and developing ongoing domain expertise. L&D has a facilitation role here until that time, but it could become part and parcel of community practice.

So, conceivably, there’s a future without L&D: one where individuals, teams, and communities are effective self-learners. That day, I fear, is a long way off. Moving in that direction isn’t a bad move for L&D, because worries about working ourselves out of existence are premature. Schools have been slow to take up learning science, and pressures have reduced the curricula to a limited (and misguided) core. Until that changes, asking “will we still need L&D” is a far-fetched question.

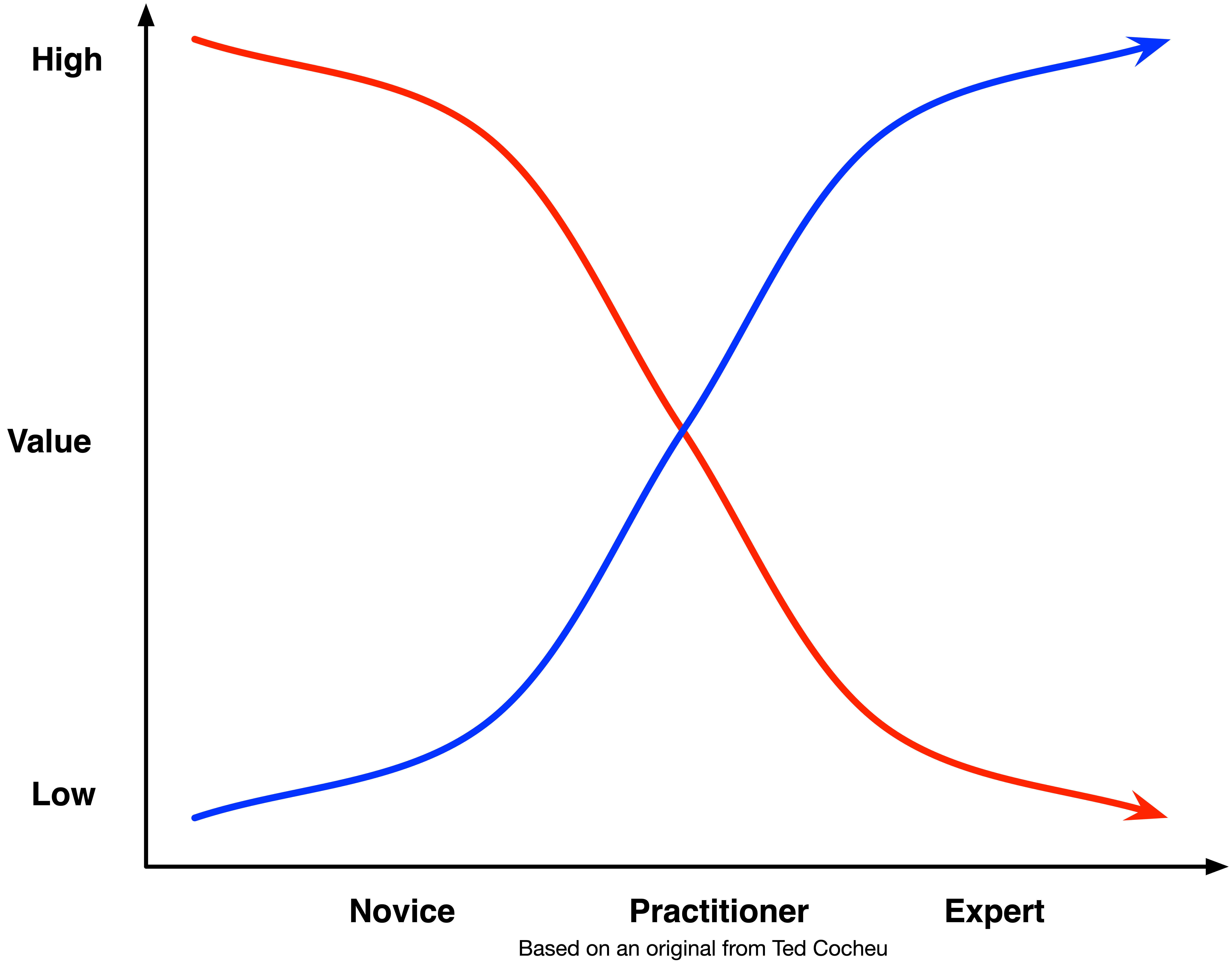

So I think the demise of L&D is up to L&D. What I mean is that L&D can be just about (ineffective) courses, or it can move into a position of more value to the organization. And, if we’re clever, we’ll have found our own continuing value proposition to the org before our existing role fades away.

Ultimately, I believe that a unit in the organization responsible for maintaining alignment with how we think, work, and learn will always have a role. We just have to put ourselves in that position. Viva la revolution!