Of late, I’ve been reading quite a lot, and I’m finding some very interesting books. Not all have immediate take-homes, but I want to introduce a few to you with some notes. Not all will be relevant, but all are interesting and even important. I’ll also update my list of recommended readings. So here are my new recommended readings. (With Amazon Associates links: support your friendly neighborhood consultants.)

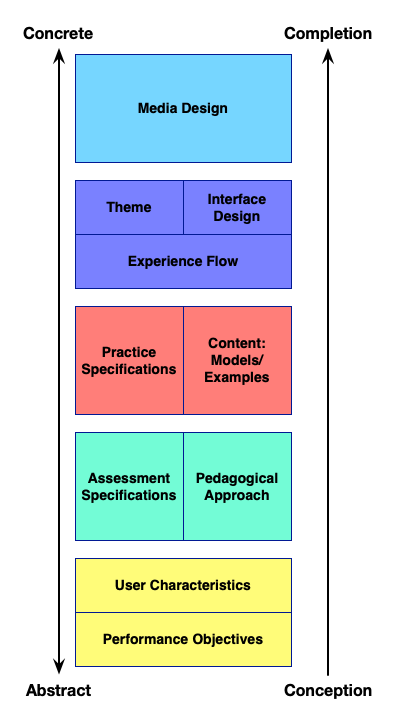

First, of course, I have to point out my own Learning Science for Instructional Designers. A self-serving pitch confounded with an overload of self-importance? Let me explain. I am perhaps overly confident that it does what it says, but others have said nice things. I really did design it to be the absolute minimum reading that you need to have a scrutable foundation for your choices. Whether it succeeds is an open question, so check out some of what others are saying. As to self-serving, unless you write an absolute mass best-seller, the money you make off books is trivial. In my experience, you make more money giving it away to potential clients as a better business card than you do on sales. The typically few hundred dollars I get a year for each book aren’t going to solve my financial woes! Instead, it’s just part of my campaign to improve our practices.

So, the first book I want to recommend is Annie Murphy Paul’s The Extended Mind. She writes about new facets of cognition that open up a whole area for our understanding. Written by a journalist, it is compelling reading. Backed by science, it’s valuable as well. In the areas I know and have talked about, e.g. emergent and distributed cognition, she gets it right, which leads me to believe the rest is similarly spot on. (Her previous track record helps, too; I mind-mapped her talk on learning myths at a Learning Solutions conference.) In a book well-illustrated with examples and research, she covers embodied cognition, situated cognition, and socially distributed cognition, all important. Moreover, there are solid implications for the redesign of instruction. I’ll be writing a full review later, but here’s an initial recommendation on an important and interesting read.

I’ll also alert you to Tania Luna and LeeAnn Renninger’s Surprise. This is an interesting and fun book that, instead of focusing on learning effectiveness, looks at the engagement side. As their subtitle suggests, it’s about how to Embrace the Unpredictable and Engineer the Unexpected. While the first bit of that is useful personally, it’s the latter that provides lots of guidance about how to take our learning from events to experiences. Using solid research on what makes experiences memorable (hint: surprise!) and illustrative anecdotes, they point out systematic steps that can be used to improve outcomes. It’s going to affect my Make It Meaningful work!

Then, without too many direct implications but intrinsically interesting, there is Lisa Feldman Barrett’s How Emotions Are Made. Recommended to me, this book is more for the cog sci groupie, but it does a couple of interesting things. First, it creates a more detailed yet still accessible explanation of the implications of Karl Friston’s Free Energy Principle. Barrett talks about how the brain’s predictions are working constantly and at many levels in a way that provides some insights. Second, she then uses that framework to debunk the existing models of emotions. The experiments with people recognizing facial expressions of emotion get explained in a way that makes clear that emotions are not the fundamental elements we think they are. Instead, emotions are social constructs! Which undermines, BTW, all the facial recognition of emotion work.

I was also pointed to Tim Harford’s The Data Detective, and I do think it’s a well-done work about how to interpret statistical claims. It didn’t grip me quite as viscerally as the aforementioned books, but I think that’s because I (over-)trust my background in data and statistics. It is a really well-done read about some simple but useful rules for how to be a more careful reviewer of statistical claims. While focused on parsing the broader picture of societal claims (and social media hype), it is relevant to evaluating learning science as well.

I hope you find my new recommended readings of interest and value. Now, what are you recommending to me? (He says, with great trepidation. ;)