Changing behavior is hard. The brain is arguably the most complex thing in the known universe. Simplistic approaches aren’t likely to work. To rewire it, one approach is to try surgery. This is problematic for several reasons: it’s dangerous, it’s messy, and we really don’t understand enough about it. What’s a person to do?

Well, we do know that the brain can rewire itself, if we do it right. This is called learning. And if we design learning, e.g. instruction, we can potentially change the brain without surgery. However (and yes, this is my point), treating it as anything less than brain surgery (or rocket science) isn’t doing justice to what’s known and what’s to be done.

The list of ways to get it wrong is long. Information dump instead of skills practice. Massed practice instead of spaced. Rote knowledge assessment. Lack of emotional engagement. The list goes on. (Cue the Serious eLearning Manifesto.) In short, if you don’t know what you’re doing, you’re likely doing it wrong and are not going to have an effect. Sure, you’re not likely to kill anyone (unless you’re doing this where it matters), but you’ll waste money and time. Scandalous.

Again, the brain is complex, and consequently so is learning design. So why, in the name of sense and money, do we treat it as trivial? Why would anyone buy a story that we can achieve anything meaningful by taking content and adding a quiz (read: rapid eLearning)? As if a quiz is somehow going to make people do better. Who would believe that just anyone can present material and learning will occur? (Do you know the circumstances when that will work?) And really, throwing fuzzy objects around the room and running ice-breakers will somehow make a difference? Please. If you can afford to throw money down the drain (OK, if you insist, throw it here ;), and don’t care if any meaningful change happens, I pity you, but I can’t condone it.

Let’s get real. Let’s be honest. There are a lot (a lot) of things being done in the name of learning that are just nonsensical. I could laugh, if I didn’t care so much. But I care about learning. And we know what leads to learning. It’s not easy. It’s not even cheap. But it will work. It requires good analysis, and some creativity, and attention to detail, and even some testing and refinement, but we know how to do this.

So let’s stop pretending. Let’s stop paying lip-service. Let’s treat learning design as the true blend of art and science that it is. It’s not the last refuge of the untalented; it’s one of the most challenging, and rewarding, things a person can do. When it’s done right. So let’s do it right! We’re performing brain surgery, non-invasively, and we should be willing to do the hard yards to actually achieve success, and then reap the accolades.

OK, that’s my rant, trying to stop what’s being perpetrated and provide frameworks that might help change the game. What’s your take?

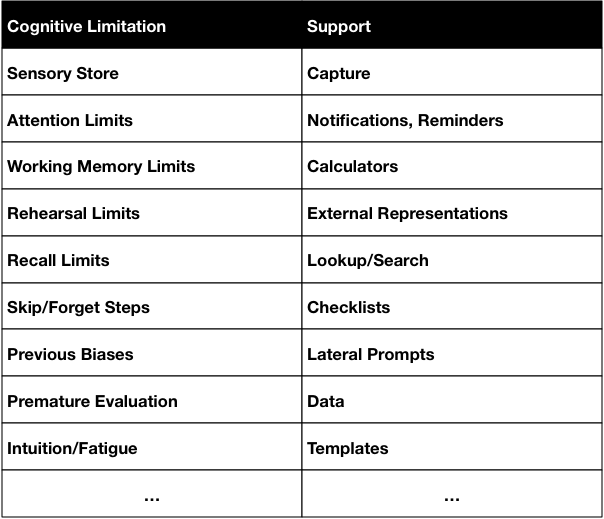

So, for instance, our senses capture incoming signals in a sensory store, which has the interesting property of almost unlimited capacity, but only for a very short time. There is no way all of it can get into our working memory, so what we attend to is what we have access to. As a result, we can’t accurately recall what we perceive. However, technology (camera, microphone, sensors) can capture and recall it all perfectly. So making capture capabilities available is a powerful support.

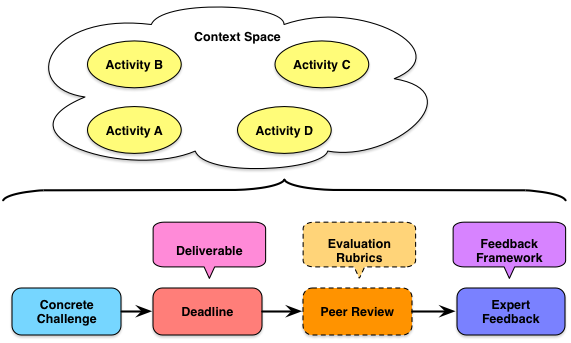

The empirical evidence is that we learn better when our practice is contextualized. And if we want transfer, we should practice across a spread of contexts that facilitates abstraction and application to all appropriate settings, not just the ones seen in the learning experience. If the spread of contexts in which we apply our learning is too narrow, so too will our transfer be. So our
