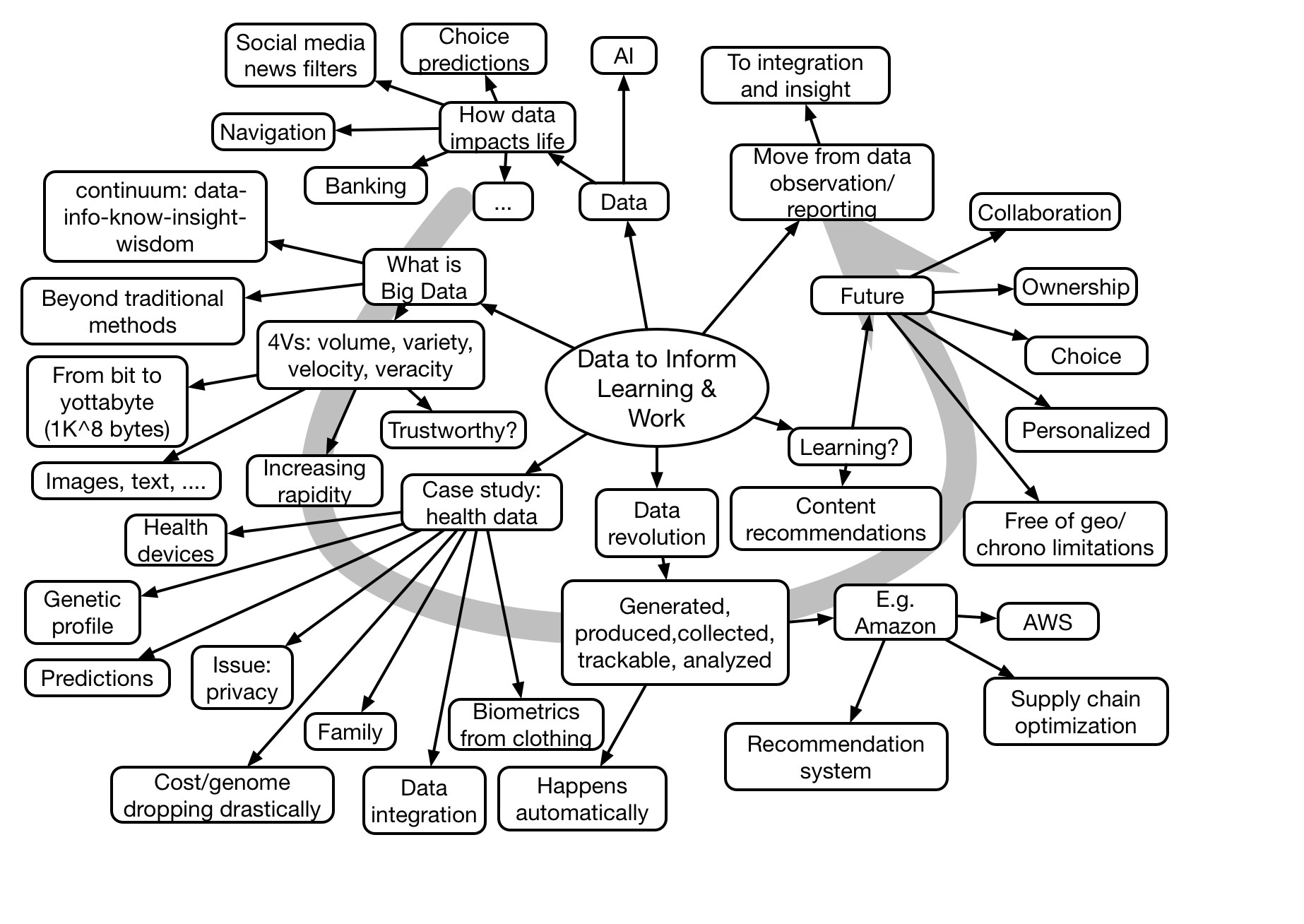

Talithia Williams presented the afternoon keynote on the opening day of DevLearn. She gave an overview of the possibilities of data, and the basics of data science. She then drew some inferences for learning.

Clark Quinn’s Learnings about Learning

I was recently asked to endorse two totally separate things. And it made me reflect on just what my principles for such an action might be. So here’s a gentrified version of my first thoughts on my principles for endorsements:

First, my reputation is based on rigor in thought, and integrity in action. Thus, anything I’d endorse has to be scrutable both in quality of design and in effectiveness in execution.

So, to establish those, I need to do several things.

For one, I have to investigate the product. Not just the top-level concept, but the lower-level details. And this means not only exploring, but devising and performing certain tests.

And that also means investigating the talent behind the design. Who’s responsible for things like the science behind it and the ultimate design?

In addition, I expect to see rigor in implementation. What’s the development process? What platform and what approach to development is being used? How is quality maintained? Maintainability? Reliability? I’d want to talk to the appropriate person.

And I’d want to know about customer service. What’s the customer experience? What’s the commitment?

There’ve been a couple of orgs that I worked with over a number of years, and I got to know these things about them (and I largely played the learning science role ;), so I could recommend them (tho’ they didn’t ask for public endorsements) and help sell them in engagements. And I was honest about the limitations as well.

I have a reputation to maintain, and that means I won’t endorse ‘average’. I will endorse, but it’s got to be scrutable at all levels and exceptional in some way, so that I feel I’m showing something that is unique and will also play out favorably over time. If I recommend it, I need people to be glad they took my advice. And then there’s got to be some recompense for my contribution to success.

One thing I hadn’t thought of on the call was a possibility of limited or levels of endorsement. E.g. “This product offers a seemingly unique solution that is valuable in concept”, but not saying “I can happily recommend this approach”. Though the value of that is questionable, I reckon.

Am I overreaching in what I expect for endorsements, or does this make sense?

I was talking with my better half, who’s now working at a nursery. Over time, she has related stories of folks coming to ask for assistance. And the variety is both interesting and instructive. There’s a vast difference of how people can be working with you.

So, for one, she likes to tell stories of people who come in saying “you know, I want something ‘green’”. Or, worse, “I want a big tree that doesn’t require any watering at all”. (Er, doesn’t exist.) The one she told me today was this lady who came in wanting “you know, it’s white and grows like <hand gesture showing curving over like a willow>”. So m’lady showed her a plant fitting the description. But “no, it’s not got white flowers”. It ended up being a milkweed, which isn’t white and stands straight up!

What prompted this reflection was the situation she cited of this other customer. He comes in with a video of the particular section he wants to work on this time, with measurements, and a brief idea of what he’s thinking. Now this is a customer that’s easy to help; you can see the amount of shade, know the size, and have an idea of what the goal is.

I related this (of course ;), to L&D. What you’d like is the person who comes and says “I have this problem: performance should be <desired measurement> but instead it’s only <current measurement>. What steps can we take to see if you can help?” Of course, that’s rare. Instead you get “I need a course on X.” At least, until you start changing the game.

JD Dillon tweeted “…But in real life they can’t just say NO to the people who run the organization. ‘Yes, and …’ is a better way to get people to start thinking differently.” And that’s apt. If you’ve always said “yes”, it’s really not acceptable to suddenly start saying “no”. Saying “Yes and…” is a nice way to respond. Something like “Sure, so what’s the problem you’re hoping this course will solve?”

And, of course, you should be this person too. “Let me tell you why I’d like to buy a VR headset,” and go on to explain how this critical performance piece is spatial and visceral and you want to experiment to address it. Or whatever. Come at it from their perspective, and you have a better chance, I reckon.

You won’t always get the nice customers, but if you take time and work them through the necessary steps at first, maybe you can change them to be working with you. That’s better than working for them, or fighting with them, no?

In the continuing process of resolving what I want to do when I grow up (rest assured, not happening), I’ve been toying with a concept. And I’ve come up with the phrase: Learning Experience Design (LXD) Strategist. Which of course, begs the question of just what LXD strategy is. So here’s my thinking.

To me, LXD is about the successful integration of learning science and engagement. Yes, cognitive science studies both learning and engagement, but in my experience the two aren’t integrated particularly well. You either get something flashy but empty, or something worthwhile but drearily dull. I remember a particular company that produced rigorous learning that you’d rather tear your eyes out than actually consume. And, similarly, I remember seeing an award-winning product that was flashy, but underneath was just drill and kill. For something that shouldn’t be.

Learning experiences should emotionally hook you (e.g. ensuring you know that you need it, and that you don’t know it). Then they should take the necessary steps, such as sufficient spaced, meaningful practice resourced with appropriate models and examples and, specifically, feedback. Ultimately, they should transform the learner. Learners go from not having a clue to having a basic ability to do, and knowing how to continue to develop.

What is LXD strategy? Here I’m thinking about helping orgs restructure their design processes, and their org structure, to support delivering learning experience designs. This includes ensuring up front that this really does deserve learning instead of some other intervention, such as performance support. Then it includes how you work with SMEs, how you discern key decisions, wrap practice into contexts, etc. It’s also about using the tools – media and technology – to create a well-integrated experience. Note that the integration can include classrooms, ambient content and interactivity, and more. It’s about getting the design right, then implementing.

LXD strategy is about ensuring that resources and practices are aligned to create experiences that meet real org needs under pragmatic constraints. That’s what I’ve been doing in much of my work, and where my interests lead me as well. And it’s still a part of the performance ecosystem. Understanding that relationship is critical, when you start thinking about moving individuals from novices, through practitioners, to expertise. And the numbers of areas that will need this are going to increase.

In my mind, the way we should be thinking about ID now is as LXD. And we need to not only think about what it is, and how to do it, but also how we organize to get it done. That, I think, is an important and worthwhile endeavor. So, what’s your thinking?

Instructional design, as is well documented, has its roots in meeting the needs for training in WWII. User experience (UX) came from the Human Computer Interaction (HCI) revolution towards User Centered Design. With a vibrant cross-fertilization of ideas, it’s natural that evolutions in one can influence the other (or not). It’s worth thinking about the trajectories and the intersections that are the roots of LXD, Learning eXperience Design.

I came from a background of computer science and education. In a job doing computer support for the office that ran the tutoring I had also engaged in, I saw the possibilities of the intersection. Eager to continue my education, I avidly explored learning and instruction, technology (particularly AI), and design. And the relationships, as well.

Starting with HCI (aka Usability), the lab I was in for grad school was leading the charge. The book User-Centered System Design was being pulled together as a collection of articles from the visitors who came and gave seminars, and an emergent view was coming. The approaches pulled from a variety of disciplines such as architecture and theater, and focused on elements including participatory design, situated design, and iterative design. All items that now are incorporated in design thinking.

At that time, instructional design was going through some transitions. Charles Reigeluth was pulling together theories in the infamous ‘green book’ Instructional Design Theories and Models. David Merrill was switching from Component Display Theory to ID2. And there was a transition from behavioral to cognitive ID.

This was a dynamic time, though there wasn’t as much cross-talk as would’ve made sense. Frankly, I did a lot of my presentations at EdTech conferences on implications from HCI for ID approaches. HCI was going broad in exploring a variety of fields to tap into popular media (a lot was sparked by the excitement around Pinball Construction Set), and not necessarily finding anything unique in instructional design. And EdTech was playing with trying to map ID approaches to technology environments that were in rapid flux.

These days, LXD has emerged. As an outgrowth of the HCI field, UX emerged with a separate society being created. The principles of UX, as cited above, became of interest to the learning design community. Explorations of efforts from related fields – agile, design thinking, etc. – made the notion of going beyond instructional design appealing.

Thus, thinking about the roots of LXD, it has a place, and is a useful label. It moves thinking away from ‘instruction’ (which I fear makes it all too easy to focus on content presentation). And it brings in the emotional side. Further, I think it also enables thinking about the extended experience, not just ‘the course’. So I’m still a fan of Learning Experience Design (and now think of myself as an LXD strategist, considering platforms and policies to enable desirable outcomes).

—

As a side note, Customer Experience is a similarly new phenomenon that apparently arose on its own. And it’s been growing, from a start in post-purchase experience, through Net Promoter Scores and Customer Relationship Management. And it’s a good thing, now including everything from the initial contact to post-purchase satisfaction and everything in between. Further, people are recognizing that a good Employee Experience is a valuable contributor to the ability to deliver Customer Experience. I’m all for that.

Every once in a while, I have had enough of some things, and want to point them out. I do so not just to complain, but to talk about good principles that have implications beyond just the particular situation. So, here I go with a little whinging.

Of late, when I call in for assistance, the phone system automatically asks me to verify some information. It can be an account number, or just to confirm some data like my house number. This is all good up until the point when I get connected to a live person, and they then ask me for that same data. Many times, as it’s escalated (“yes, it’s plugged in” and “yes, I’ve already tried rebooting it”), I get passed on to another person. And get asked for the same data again.

When pushed, the answer is “it’s our systems”. And that’s not good enough. What’s the lesson? You need your systems synched together. The employees need a performance ecosystem that’s integrated, if you’re going to be able to deliver a good customer experience. I’m reminded of the fact that Domino’s is spending more money fighting to not have to be accessible than the estimate to actually make their system accessible!?!

This plays out in another way. So I’m having internet troubles. It’s intermittent (admittedly, that makes it hard to diagnose), and it’s not disconnecting, it’s just slowing way down, and then going back to blazing fast. But it’s creating hiccups for my conference calls and webinars. I’m paying a pretty penny for this.

So, they do some remote stuff to the modem and say call back if it’s not better. And it’s not. So they send a tech. Who says it’s in the network, not the local connections and other techs will work on it, and I don’t have to be present, and they work 24/7 and it should be fixed in a couple of days. And then, I get a call which I return and am told it’ll be fixed by late this morning. And then it’s not. So I call again, and first, the person doesn’t seem to have access to the previous notes (which I’d made a point of), and asks me a bunch of questions. Which I’ve already answered previously in the same call. Then, they arrange to send a tech out! Isn’t that the definition of insanity, trying the same thing and expecting a different outcome?

The problem here is the lack of coordination between the different elements. The latest phone person said that they had the notes from the previous tech, and that this one has different skills, but the previous person had told a different story. It’s that that concerns me; the lack of consistency shatters my already-fragile confidence in them. They should have a good linked record (the ecosystem again), but be able to address obvious mismatches elegantly.

Ok, so this one’s less obvious, but it’s relevant. Here’s my claim: I want products that aren’t just dishwasher-safe, I want them dishwasher-smart! What am I talking about? Look at these two glasses. It may be hard to see, but the one on the left has a three-lobed groove in the bottom. While there’s sufficient surface to stand steadily, it also drains. The one on the right, however, has a concavity in the bottom. So, when it goes in the dishwasher (or the dish drainer for that matter), water pools and it doesn’t dry efficiently. WHY?

Look, you should be designing products so the affordances (yeah, I said the ‘a’ word ;) work for consumers. I like my backup battery (thanks Nick and SealWorks) because it has a built-in cable! You don’t have to carry a separate one. This goes for learning experiences as well; make the desired behaviors obvious. Leave the challenges to the deliberate ones discriminating appropriate decisions from misconceived ones. And authoring tools should make it easy to do good pedagogy and difficult to do info dump and knowledge test! Ahem.

At core it’s about aligning product and service design with how we think, work, and learn. It should be in the products we purchase, and in the products we use. Heck, I can help if you want assistance in figuring this out, and baking it into your workflows. (I used to teach interface design, having had a Ph.D. advisor who is a guru thereof.) Do read Don Norman’s The Design of Everyday Things if you’re curious about any of this. It’s one of those rare books that will truly change the way you look at the world. For the better.

Design, whether instructional or industrial or interface or anything else that touches people, needs to understand those people. Please ensure you do, and then use your powers for good.

There’s a lot of call for evidence-based methods (as mentioned yesterday): L&D, learning design, and more. And this is a good thing. But…do you want to be basing your steps on a particular empirical study, or the framework within which that study emerged? Let me make the case for one approach. My answer to theory or research is theory. Here’s why.

Most research experiments are done in the context of a theoretical framework. For instance, the work on worked examples comes from John Sweller’s Cognitive Load theory. Ann Brown & Annemarie Palincsar’s experiments on reading were framed within Reciprocal Teaching, etc. Theory generates experiments which refine theory.

The individual experiments illuminate aspects of the broader perspective. Researchers tend to run experiments driven by a theory. The theory leads to a hypothesis, and then that hypothesis is testable. There are some exploratory studies done, but typically a theoretical explanation is generated to explain the results. That explanation is then subject to further testing.

Some theories are even meta-theories! Collins & Brown’s Cognitive Apprenticeship (a favorite) is based upon integrating several different theories, including Reciprocal Teaching, Alan Schoenfeld’s work on examples in math, and the work of Scardamalia & Bereiter on scaffolding writing. And, of course, most theories have to account for others’ results from other frameworks if they’re empirically sound.

The approach I discuss in things like my Learning Experience Design workshops is a synthesis of theories as well. It’s an eclectic mix including the above mentioned, Cognitive Flexibility, Elaboration, ARCS, and more. If I were in a research setting, I’d be conducting experiments on engagement (pushing beyond ARCS) to test my own theories of what makes experiences both engaging and effective. Which, not coincidentally, was the research I was doing when I was an academic (and led to Engaging Learning). (As well as integration of systems for a ubiquitous coaching environment, which generates many related topics.)

While individual results, such as the benefits of relearning, are valuable and easy to point to, it’s the extended body of work on topics that provides for longevity and applicability. Any one study may or may not be directly applicable to your work, but the theoretical implications give you a basis to make decisions even in situations that don’t directly map. There’s the possibility to extend too far, but it’s better than having no guidance at all.

Having theories to hand that complement each other is a principled way to design individual solutions and design processes. The same holds for strategic work: Revolutionize L&D is a similar integration of diverse elements to make a coherent whole. Knowing, and mastering, the valid and useful theories is a good basis for making organizational learning decisions. And avoiding myths! Being able to apply them, of course, is also critical ;).

So, while they’re complementary, in the choice between theory or research I’ll point to one having more utility. Here’s to theories and those who develop and advance them!

As one of the things I talk about, I was exploring the dimensions of difficulty for performance that guide the solutions we should offer. What determines when we should use performance support, automate approaches, offer formal training, or a blend, or…? It’s important to have criteria so that we can make a sensible determination. So, I started trying to map it out. And, not surprisingly, it’s not complete, but I thought I’d share some of the thinking.

So one of the dimensions is clearly complexity. How difficult is this task to comprehend? How does it vary? Connecting and operating a simple device isn’t very complex. Addressing complex product complaints can be much more complex. Certainly we need more support if it’s more complex. That could be trying to put information into the world if possible. It also would suggest more training if it has to be in the head.

A second dimension is frequency of use. If it’s something you’ll likely do frequently, getting you up to speed is more important than maintaining your capability. On the other hand, if it only happens infrequently, it’s hard to try to keep it in the head, and you’re more likely to want to try to keep it in the world.

And a third obvious dimension is importance. If the consequences aren’t too onerous if there are mistakes, you can be more slack. On the other hand, say if lives are on the line, the consequences of failure raise the game. You’d like to automate it if you could (machines don’t fatigue), but of course the situation has to be well defined. Otherwise, you’re going to want a lot of training.

And it’s the interactions that matter. For instance, flight errors are hopefully rare (the systems are robust), typically involve complex situations (the interactions between the systems mean engines affect flight controls), and have big consequences! That’s why there is a huge effort in pilot preparation.

It’s hard to map this out. For one, is it just low/high, or does it differentiate in a more granular sense: e.g. low/medium/high? And for three dimensions it’s hard to represent in a visually compelling way. Do you use two (or three) two dimensional tables?

Yet you’d like to capture some of the implications: the flight-error example above explains the heavy investment in preparation. Low consequences obviously suggest low investment. Complexity and infrequency suggest more spacing of practice.
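One way to sidestep the drawing problem is to enumerate the combinations instead. Here’s a minimal sketch in Python, assuming a simple low/high split on each dimension. The function name `suggest`, the `well_defined` flag, and the wording of each suggestion are my own illustrative assumptions that paraphrase the heuristics above; this isn’t a validated decision model.

```python
from itertools import product

LEVELS = ("low", "high")  # assumed granularity; low/medium/high would also work


def suggest(complexity, frequency, importance, well_defined=False):
    """Return hedged suggestions for one combination of the three dimensions."""
    ideas = []
    # Importance: low consequences suggest low investment; high stakes suggest
    # automating when the situation is well defined, otherwise lots of training.
    if importance == "low":
        ideas.append("keep investment low")
    else:
        ideas.append("automate" if well_defined else "invest in a lot of training")
    # Frequency: rare tasks are hard to keep in the head, so keep them in the world.
    if frequency == "low":
        ideas.append("put it in the world (performance support)")
    else:
        ideas.append("get people up to speed quickly")
    # Complexity: more complex tasks need more support, and if they're also
    # infrequent, more spacing of practice.
    if complexity == "high":
        ideas.append("add more support")
        if frequency == "low":
            ideas.append("space practice out more")
    return ideas


# Print all eight low/high combinations as a flat list rather than a 3D table.
for combo in product(LEVELS, repeat=3):
    print(combo, "->", "; ".join(suggest(*combo)))
```

The flight-error case (complex, rare, high consequences) comes out with training, support in the world, and spaced practice, which at least matches the intuition.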

It may be that there’s no one answer. Each situation will require an assessment of the mental task. However, some principles will overarch, e.g. put it in the world when you can. Avoiding taxing our mental resources is good. Using our brains for complex pattern matching and decision making is likely better than remembering arbitrary and rote steps. And, of course, thinking of the brain and the world as partners (Intelligence Augmentation) is better than just focusing on one or the other. Still, we need to be aware of, and assessing, the dimensions of difficulty as part of our solution. Am I missing some? Are you aware of any good guides?

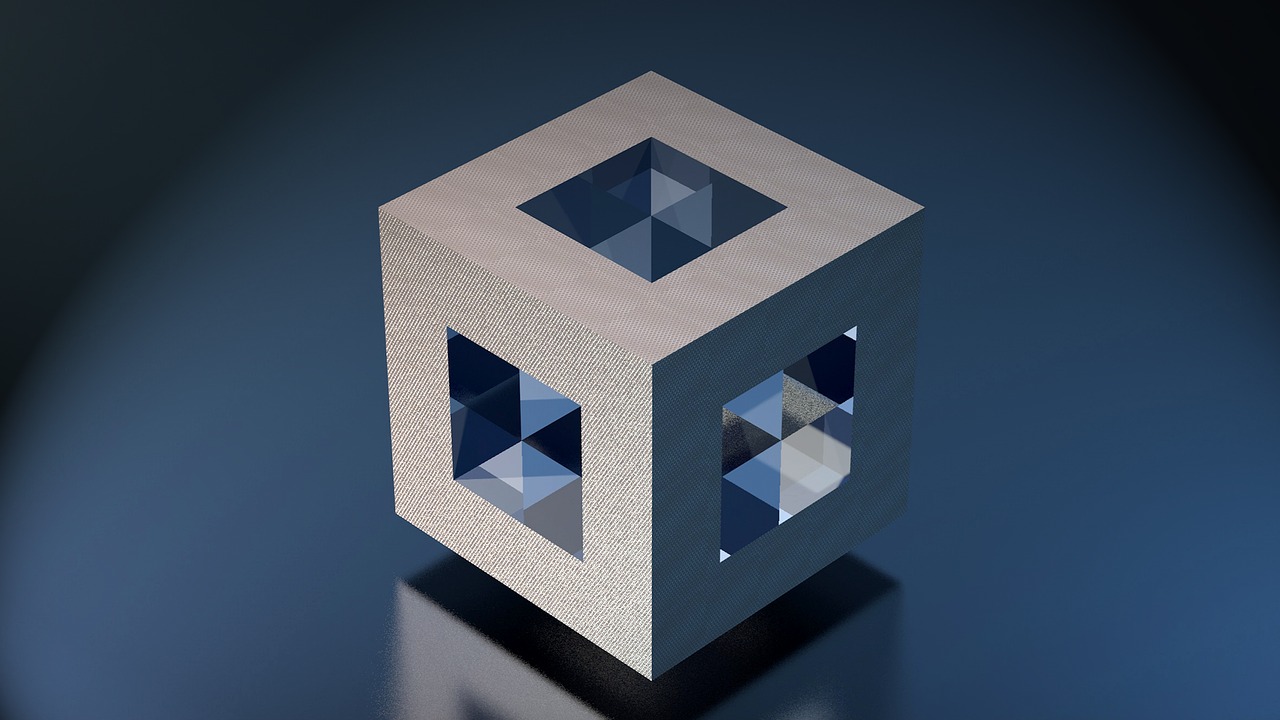

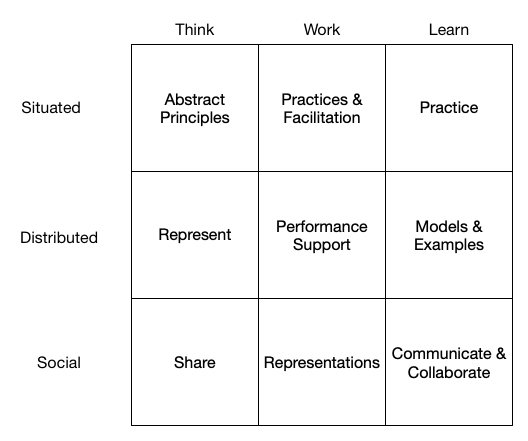

In my past two posts, I first looked at cognitions (situated, distributed, social) by contexts (think, work, and learn), and then the reverse. And, having filled out the matrixes anew, they weren’t quite the same. And that, I think, is the benefit of the exercise, a chance to think anew. So what emerged? Here’s the result of reconciling cognitions and contexts.

So, taking each cell back in the original pass of cognitions by contexts, what results? I took the Think row to, indeed, be Harold Jarche’s Seek > Sense > Share model (ok, my interpretation). We have in Situated, the feeds you’ve set up to see, and then the particular searches you need in the current context. Then, of course, you experiment and represent as ways to externalize thinking for Distributed. Finally, you share Socially.

For Work, not practices but principles (and the associated practices therefrom) as well as facilitation to support Situated Work. Performance support is, indeed, the Distributed support for Work. And Socially, you need to collaborate on specific tasks and cooperate in general.

Finally, for Learning, for a Situated world you need (spread) contextualized practice to support appropriate abstraction of the principles. You want models and examples to support performance in the practice, as Distributed resources. And, finally, for Social Learning, you need to communicate (e.g. discussions) and collaborate (group projects).

What’s changed is that I added search and feeds, and moved experiment, in the Think row. I went to principles from practices to support performance in ambiguity, left performance support untouched, and stayed with collaborations and cooperation instead of just shared representations (they’re part of collaborate). And, finally, I made practice about contexts, went from blended learning to support materials for learning, and interpreted social assignments as communicating and collaborating.

The question is, what does this mean? Does it give us any traction? I’m thinking it does, as it shifts the focus in what we’re doing to support folks. So I think it was interesting and valuable (to my thinking, at least ;) to consider reconciling cognitions and contexts.

So, in my last post, I talked about exploring the links between cognitions on the one hand (situated, distributed, social), and contexts (aligning with how we think, work, & learn). I did it one way, but then I thought to do it another, to instead consider Contexts by Cognitions, to see if I came to the same elements. And they weren’t quite identical! So I thought I should share that thinking, and then come to a reconciliation. Thinking out loud, as it were.

So in this one, I swapped the headings, emptied the matrix, and took a second stab at filling them out, with a relatively clear mind. (I generated the first diagram several days ago and had been iterating on it, but not today. Today I was writing it up and was early in the process, so I came to it relatively free of contamination. And of course, not completely, but this is ‘business significance’, not ‘statistical significance’ ;). The resulting diagram appears similar, but also has some differences.

When we consider Thinking by Situated, we’re talking about coping with emergent situations. I thought being guided by best principles would be the way to cope, abstracted models. I thought representation was key for distributing one’s thinking, and sharing of course for social.

Working Situatedly suggested having in-house practices and facilitation. Of course, Distributed support for Work is performance support. And working socially suggests shared representations.

Finally, learning situated suggests the need for much practice (across contexts, I now think). Distributed support for learning is models and examples. And social learning suggests communicating (e.g. discussions) and collaboration (group projects).

Interestingly, these results differ from my previous post. So, I think I’ll have to reconcile them. The fact that I did get different results, and it sparked some additional thinking, is good. Considering contexts by cognitions improved the outcomes, I think. And that’s worth thinking about!