I’ve been working on a learning design that integrates developing social media skills with developing specific competencies, aligned with real work. It’s an interesting integration, and I drafted a pedagogy that I believe accomplishes the task. It draws heavily on the notion of activity-based learning. For your consideration.

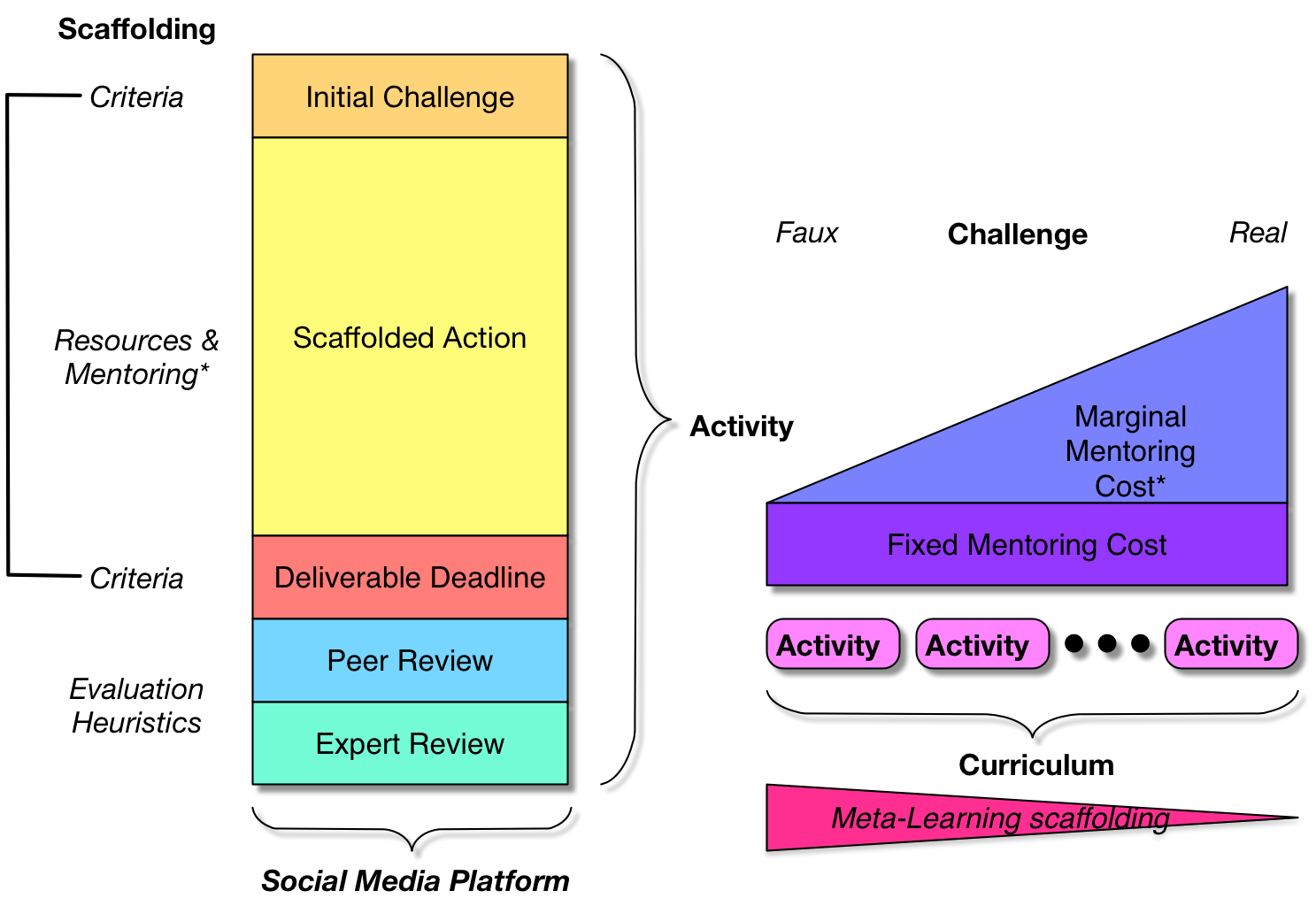

The learning process is broken up into a series of activities. Each activity starts with giving the learning teams a deliverable they have to create, with a deadline an appropriate distance out. There are criteria they have to meet, and the challenge is chosen such that it’s within their reach, but out of their grasp. That is, they’ll have to learn some things to accomplish it.

As they work on the deliverable, they’re supported. They may have resources available to review, ideally curated (and, across the curriculum, their responsibility for curating their own resources is developed as part of handing off the responsibility for learning to learn). There may be people available for questions, and they’re also being actively watched and coached (less so as they progress).

Now, ideally the goal would be a real deliverable that would have an impact on the organization. That, however, takes a fair bit of support to make it a worthwhile investment. Depending on the ability of the learners, you may start with challenges that resemble real ones but aren’t necessarily real, such as evaluating a case study or working on a simulation. The costs of mentoring go up with the consequences of the action, but so do the benefits, so it’s likely that the curriculum will similarly get closer to live tasks as it progresses.

At the deadline, the deliverables are shared for peer review, presumably with other teams. In this instance, there is a deliberate intention to have more than one team, as part of the development of the social capabilities. Reviewing others’ work, initially with evaluation heuristics, is part of internalizing the monitoring criteria, on the path to becoming a self-monitoring and self-improving learner. Similarly, the freedom to share work for evaluation is a valuable move on the path to a learning culture. Expert review will follow, to finalize the learning outcomes.

The intent is also that the conversations and collaborations happen in a social media platform. This is part of helping the teams (and the organization) acquire social media competencies. Sharing, working together, accessing resources, etc. are done in the platform just as they would be for work. And by the end, at least, they are being used for work!

This has emerged as a design that develops both specific work competencies and social competencies in an integrated way. Of course, the proof is when there’s a chance to run it, but in the spirit of working out loud…your thoughts welcome.

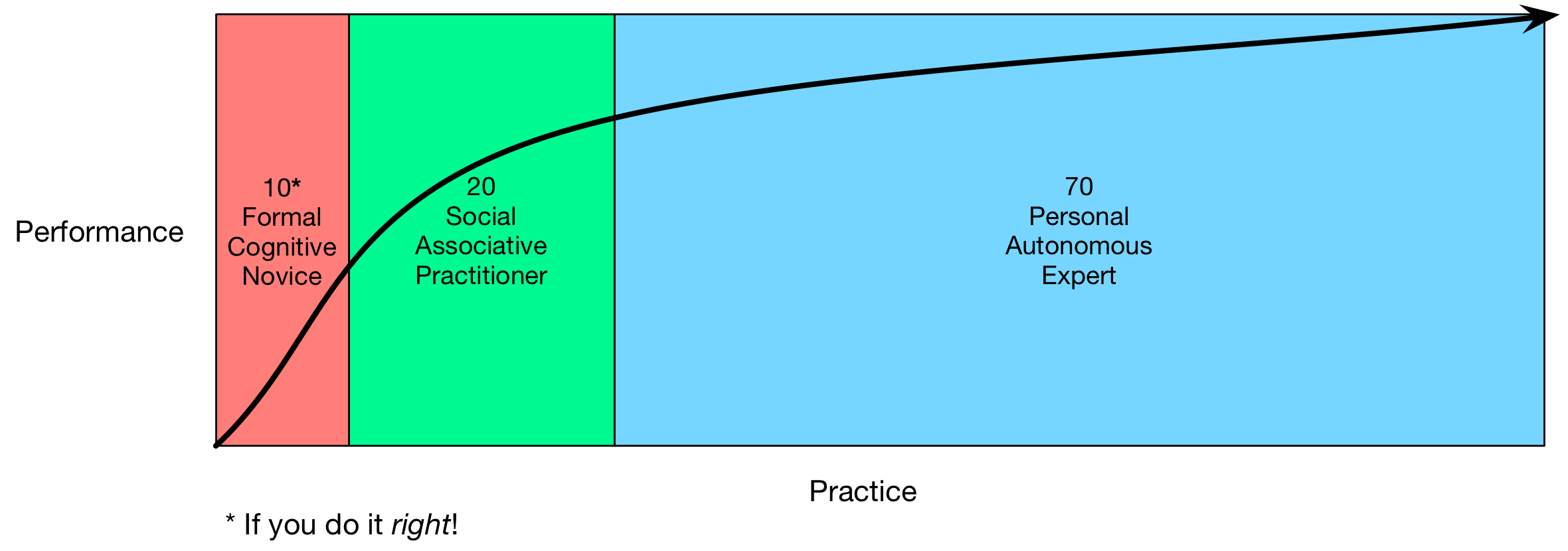

This, to me, maps more closely to 70:20:10, because you can see the formal (10) playing a role to kick off the semantic part of the learning, then coaching and mentoring (the 20) support the integration or association of the skills, and then the 70 (practice, reflection, and