In general, in a learning experience stretching out over days (as spaced learning would suggest), learners want regular feedback about how they’re doing. As a consequence, you want regular cycles of assessment. However, there’s a conflict. In workplace performance we produce complex outputs (RFPs, product specs, sales proposals, strategies, etc.). These still typically require human oversight to evaluate. Yet resources are likely to be limited in most such situations, so we prefer auto-marked solutions (read: multiple choice, fill-in-the-blank, etc.). How do we reconcile meaningful assessment with realistic constraints? This is one of the questions I’ve been thinking about, and I thought I’d share my reflections with you.

In workplace learning, at times we can get by with auto-assessment, particularly if we use coaching beyond the learning event. Yet if it matters, we’d rather they practice the things that matter before they have to use them in real work. And for formal education, we want learners to have at least weekly cycles of performance and assessment. Yet we also don’t want just rote knowledge checks, as they don’t lead to meaningful performance. We need some intermediate steps, and that’s what I’ve been thinking about.

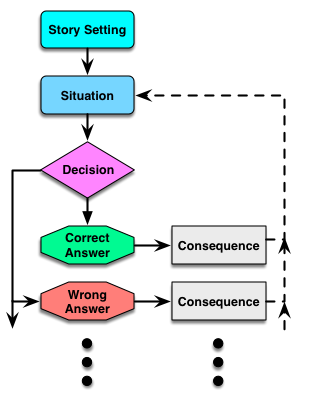

So first, in Engaging Learning, I wrote about what I called ‘mini-scenarios’. These are really just better-written multiple-choice questions. However, such questions don’t ask learners to identify definitions or the like (simple recognition), but instead put learners in contextual situations. Here, the learner chooses between different decisions, which means retrieving the information, mapping it to the context, and then choosing the best answer. Such a question has a story context, a precipitating situation, and then alternative decisions. (And the alternatives are ways learners go wrong, not silly or obviously incorrect choices.) I suggest that your questions should be like this, but is there more?
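For those who build their own question banks, here’s a minimal sketch of how a mini-scenario might be represented. The class names, field names, and the sample content are all my own illustrative assumptions, not a feature of any particular authoring tool. The point is just that each wrong alternative carries the misconception it represents, so the feedback can address it.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Alternative:
    decision: str            # what the learner decides to do
    is_best: bool            # whether this is the best decision
    misconception: str = ""  # the way learners go wrong that this option captures
    feedback: str = ""       # what the learner sees after choosing it


@dataclass
class MiniScenario:
    story_context: str       # who and where the learner is
    situation: str           # the precipitating event that demands a decision
    alternatives: List[Alternative]

    def respond(self, index: int) -> Tuple[bool, str]:
        """Auto-mark a choice and return (correct?, feedback)."""
        chosen = self.alternatives[index]
        return chosen.is_best, chosen.feedback


# Hypothetical example content, invented purely for illustration.
scenario = MiniScenario(
    story_context="You are drafting a sales proposal for a new client.",
    situation="The client asks for a delivery date your team has not confirmed.",
    alternatives=[
        Alternative(
            "Promise the date to keep the deal moving",
            is_best=False,
            misconception="Over-committing to please the client",
            feedback="The team had not confirmed the date, so this creates risk.",
        ),
        Alternative(
            "Offer to confirm the date with your team by tomorrow",
            is_best=True,
            feedback="You keep momentum without committing to the unknown.",
        ),
    ],
)
```

Calling `scenario.respond(0)` returns the mark and the feedback tied to that particular way of going wrong.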

Branching scenarios are another, rich form of practice. Here it’s about tying together the decisions (they do tend to travel in packs) and consequences. When you do so, you can provide an immersive experience. (When designed well, of course.) They’re a pragmatic approximation of a full game experience. Full games are really good when you need lots of practice (or can amortize over a large audience), but they’re an additional level of complexity to develop.

Another one that Tom Reeves presented in an article was intriguing. You not only have to make the right choice, but then you also choose the reason why you made that choice. It’s only an additional step, but it gets at the choice and the thinking. And this is important. It would minimize the likelihood of guessing, and provide a richer basis for diagnosis and feedback. Of course, no one is producing a ‘question type’ like this that I know of, but it’d be a good one.
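Since I don’t know of a ready-made version of this, here’s a rough sketch of how such a choice-plus-reason item could be auto-marked. Everything here (the `ChoiceWithReason` name, the four diagnosis labels, the sample item) is my own assumption for illustration, not Reeves’ formulation. It also assumes one shared list of reasons rather than reasons specific to each choice.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ChoiceWithReason:
    prompt: str
    choices: List[str]
    reasons: List[str]
    best_choice: int   # index into choices
    best_reason: int   # index into reasons

    def diagnose(self, choice: int, reason: int) -> str:
        """Combine the two answers into a simple diagnosis for feedback."""
        right_choice = choice == self.best_choice
        right_reason = reason == self.best_reason
        if right_choice and right_reason:
            return "sound"
        if right_choice:
            return "right choice, wrong reason"   # possibly a guess
        if right_reason:
            return "right reason, wrong choice"   # principle known, misapplied
        return "needs review"


# Hypothetical item, invented for illustration only.
item = ChoiceWithReason(
    prompt="A reporter asks about an issue you cannot discuss yet. What do you do?",
    choices=["Say 'no comment'", "Explain what you can share and when"],
    reasons=["It ends the conversation fastest", "It keeps trust without disclosing"],
    best_choice=1,
    best_reason=1,
)
```

Here `item.diagnose(1, 0)` flags a right choice made for the wrong reason, which is exactly the case a plain multiple-choice question can’t distinguish from real understanding.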

An approach we used in the past was to have learners create a complex answer, but have the learner evaluate it! In this case it was a verbal response to a question (we were working on speaking to the media), and the learner could then hear their own answer and a model one. Of course, you’d want to pair this with an evaluation guide as well. The learner creates a response, and then is presented with their response, a good response, and a rubric about what makes a good answer. Then we ask the learner to self-evaluate against the rubric. This has the additional benefit that learners are evaluating work with guidance, and can internalize the behavior to become a self-improving learner. (This is the basis of ‘reciprocal teaching’, one of the component approaches in Cognitive Apprenticeship.)

Each of these is auto- (or self-) marked, yet provides valuable feedback to the learner and valuable practice of skills. This shouldn’t be at the expense of also having instructor-marked complex work products or performances, but it can supplement them. The goal is to provide the learner with guidance about how their understanding is progressing while keeping marking loads to a minimum. It’s not ideal, but it’s practical. And it doesn’t exclude knowledge tests either, but it’s more applied and therefore likely to be more valuable to the learner and the learning. I’m percolating on this, but I welcome hearing what approaches (and reflections) you have.