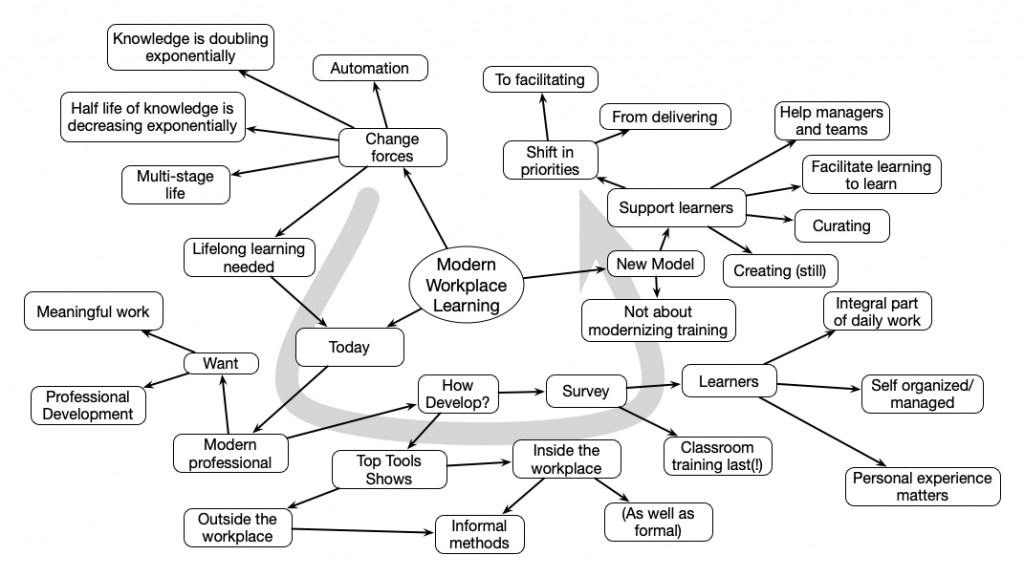

At the LearnTec conference, Jane Hart opened up the Modern Workplace Learning track with a thoughtful presentation about the rationale for MWL. She started by pointing out the changes that are driving the need. Jane identified what people are actually doing in their own learning to motivate the need for L&D change. She then characterized important elements that L&D should consider.

Archives for 2019

Y A (Yet Another) Misleading Mobile Marketing Post

Is this YAMMMP? I suppose I can’t address every one, but I think picking a few here and there is perhaps instructive. And, maybe, a bit fun. So there was a post on 5 mobile learning strategies. I’m a wee bit opinionated on mobile learning, so I thought I’d have a look. And, of course, it seems to be a random selection. I guess there’s a requirement to regularly put out stuff, but it seems they get someone to make stuff up scattershot, for the sake of marketing. And while the advice isn’t bad, it’s just random bits of advice trying to create the appearance of expertise. Worse, it’s really not specific to mobile, and, therefore,…misleading.

- The first recommendation was to do ‘microlearning’. The worst part was their definition: short suggest of learning and performance support. Let’s just throw everything together! Yes, small chunks of content are good. Because they match how our minds work. But this (differentiated) is not unique to mobile, it’s good advice overall! Of course, with nuances about the formal (e.g. not just putting your course through the shredder and streaming out the bits).

- The next recommendation was for ‘gamification’. Er, no. Now to be fair, they do say “gamification for serious learning”, but how do we know whether they mean immersive learning environments, or points, badges, and leaderboards? The former’s good, the latter is, I suggest, not so valuable. But again, this is undifferentiated, so it’s not obviously good advice.

- On to the ubiquitous ‘video’! Yes, video can be valuable, but not generically. It can be overdone, and can intrude in a variety of ways. For instance, the audio might be inappropriate in certain contexts, and hands-free may require a visual focus that can’t be distracted. Moreover, using video appropriately again isn’t unique to mobile.

- And another statement that’s not unique to mobile: look to social learning. Yes, of course, social learning’s good. And, with mobile populations equipped with devices and ‘downtime’, it can be valuable. But it’s valuable regardless of device. When it’s possible, it can add value. The obvious rises again.

- And, finally, personalization. Yes, great. So personalize via the small chunks from microlearning. Again, why unique to mobile? Love the idea, but hate that it’s presented as part of a mobile strategy instead of a learning strategy.

Look, I’m a fan of mobile, obviously. But while mobile’s niche is performance support, what’s unique to mobile is context. Do something because of when and where you are. And this article has entirely missed it. And the other critical element is to think of mobile as a platform. It’s not a device, it’s not an app, it’s a unique delivery channel for many possibilities. Your initial exploration can be either of the microlearning components, but recognize that as soon as you use it, you’ll be expected to do more. And thinking platform is the key strategy here.

I understand that their intention is self-serving; these are things they can do. But pretending these are core strategies is misleading. And that’s the problem I’d like you to learn to detect. Go to the core affordances, and then drill down. I’ve talked about my own five mobile approaches, for instance. Don’t work up from what you can do until you know what that is doing to advance your capabilities as well. That is what’s strategic.

What to evaluate?

In a couple of articles, the notion that we should be measuring our impact on the business is called out. And being one who says just that, I feel obligated to respond. So let’s get clear on what I’m saying and why. It’s about what to evaluate, why, and possibly when.

So, in the original article, my colleague Will Thalheimer calls the claim that we should focus on business impact ‘dangerous’! To be fair (I know Will, and we had a comment exchange), he’s saying that there are important metrics we should be paying attention to about what we do and how we do it. And no argument! Of course we have to be professional in what we do. The claim isn’t that the business measure is all we need to pay attention to. And he acknowledges that later. Further, he does say we need to avoid what he calls ‘vanity metrics’, just how efficient we are. And I think we do need to look at efficiency, but only after we know we’re doing something worthwhile.

The second article is a bit more off kilter. It seems to ignore the value of business metrics altogether. It talks about competencies and audience, but not impacting the business. Again, the author raises the importance of being professional, but still seems to be in the ‘if we do good design, it is good’ camp, without seeming to even check to see if the design is addressing something real.

Why does this matter? Partly because, empirically, what the profession measures are what Will called ‘vanity’ measures. I put it another way: they’re efficiency metrics. How much per seat per hour? How many people are served per L&D employee? And what do we compare these to? Industry benchmarks. And I’m not saying these aren’t important, ultimately. Yes, we should be frugal with our resources. We even should ultimately ensure that the cost to improve isn’t more than the problem costs! But…

The big problem is that we’ve no idea if that butt in that seat for that hour is doing any good for the org. We don’t know if the competency is a gap that means the org isn’t succeeding! I’m saying we need to focus on the business imperatives because we aren’t!

And then, yes, let’s focus on whether our learning interventions are good. Do we have the best practice, the least amount of content (and good content at that), etc.? Then we can ask if we’re efficient. But if we only measure efficiency, we end up taking PDFs and PPTs and throwing them up on the screen. If we’re lucky, with a quiz. And this is not going to have an impact.

So I’m advocating the focus on business metrics because that’s part of a performance consulting process to create meaningful impacts. Not in lieu of the stuff Will and the other author are advocating, but in addition. It’s all too easy to worry about good design, and miss that there’s no meaningful impact.

Our business partners will not be impressed if we’re designing efficient, and even effective learning, if it isn’t doing anything. Our solutions need to be targeted at a real problem and address it. That’s why I’ll continue to say things like “As a discipline, we must look at the metrics that really matter… not to us but to the business we serve.” Then we also need to be professional. Will’s right that we don’t do enough to assure our effectiveness, and only focus on efficiency. But it takes it all, impact + effectiveness + efficiency, and I think it’s dangerous to say otherwise. So what say you?

Redesigning Learning Design

Of late, a lot of my work has been designing learning design. Helping orgs transition their existing design processes to ones that will actually have an impact. That is, someone’s got a learning design process, but they want to improve it. One idea, of course, is to replace it with some validated design process. Another approach, much less disruptive, is to find opportunities to fine tune the design. The idea is to find the minimal set of changes that will yield the maximal benefit. So what are the likely inflection points? Where am I finding those spots for redesigning? It’s about good learning.

Starting at the top, one place where organizations go wrong right off the bat is the initial analysis for a course. There’s the ‘give us a course on this’, but even if there’s a decent analysis the process can go awry. Side-stepping the big issue of performance consulting (do a reality check: is this truly a case for a course), we get into working to create the objectives. It’s about how you work with SMEs. Understanding what they can, and can’t, do well means you have the opportunity to ensure that you get the right objectives to design to.

From there, the most meaningful and valuable step is to focus on the practice. What are you having learners do, and how can you change that? Helping your designers switch to good assessment writing is going to be useful. It’s nuanced, so the questions don’t seem that different from typical ones, but they’re much more focused for success.

Of course, to support good application of the content to develop abilities, you need the right content! Again, getting designers to understand the nuances that separate useful examples from just stories isn’t hard, but it’s rarely done. Similarly, knowing why you want models and not just presentations about the concept isn’t fully realized.

Of course, making it an emotionally compelling experience has learning impact as well. Yet too often we see the elements just juxtaposed instead of integrated. There are systematic ways to align the engagement and the learning, but they’re not understood.

A final note is knowing when to have someone work alone, and when some collaboration will help. It’s not a lot, but if it happens at the right time (or happens at all), it can make a valuable contribution to the quality of the outcome.

I’ve provided many resources about better learning design, from my 7 step program white paper to my deeper elearning series for Learnnovators. And I’ve a white paper about redesigning as well. And, of course, if you’re interested in doing this organizationally, I’d welcome hearing from you!

One other resource will be my upcoming workshop at the Learning Solutions conference on March 25 in Orlando, where we’ll spend a day working on learning experience design, integrating engagement and learning science. Of course, you’ll be responsible for taking the learnings back to your learning process, but you’ll have the ammunition for redesigning. I’d welcome seeing you there!

Locus of learning: community, AI, or org?

A recent article caused me to think. Always a great thing! It led to some reflections that I want to share. The article is about a (hypothetical) learning journey, and talks about how learning objects are part of that learning process. My issue is with the locus of the curation of those objects; should it be the organization, an AI, or the community? I think it’s worth exploring.

The first sentence that stood out for me made a strong statement. “Choice is most productive when it is scaffolded by an organizationally-curated framework.” Curation of resources for quality and relevance is a good thing, but is the organization the best arbiter? I’ve argued that the community of practice should determine the curriculum to be a member of that community. Similarly, the resources to support progression in the community should come from the community, both within and outside the organization.

Relatedly, the sentence before this one states “learner choice can be a dangerous thing if left unchecked”. And this really strikes me as the wrong model. It’s inherently saying we don’t trust our learners to be good at learning. I don’t expect learners (or SMEs for that matter) to know learning. But then, we shouldn’t leave that to chance. We should be facilitating the development of learning to learn skills explicitly, having L&D model and guide it, and more. It’s rather an old school approach to think that the org (through the agency of L&D) needs to control the learning.

A second line that caught my eye was that the protagonist “and his colleagues create and share additional AI-curated briefings with each other.” Is that AI curation, or community curation? And note that there’s ‘creation’, not just sharing. I’m thinking that the human agency is more critical than the AI curation. AI curation has gotten good, but when a community is working, the collective intelligence is better. Or, if we’re talking IA (and we should be), we should explicitly be looking to couple AI and community curation.

Another line is also curious. “However, learning leaders must balance the popularity of informal learning with the formal, centralized needs of the organization. This can be achieved using AI-curated real-time briefings.” Count me skeptical. I believe that if you address the important issues – purpose via meaningful work and autonomy to pursue, communities of practice, and learning to learn skills – you can trust informal learning more than AI or a central view of what learning can and should be.

Most of the article was quite good, even if things like “psychological safety” are being attributed to McKenzie instead of Amy Edmondson. I like folks looking to the future, and I understand that aligning with the status quo is a good business move. It’s just that when you get disconnects such as these, it’s an opportunity to reflect. And wondering about the locus of responsibility for learning is a valuable exercise. Can the locus be the individual and community, not the org or AI? Of course, better yet if we get the synergy between them. But let’s think seriously about how to empower learners and community, ok?

Bringing Transformation to Life

I’m going to be delivering a mobile learning course for a university this spring. Consequently, I’m currently beginning the design. I need to practice what I preach in the sense of good learning design, so I’m working through the usual decisions. The real question I have is whether I can make it transformative. There are limitations, but one of my mantras is about design having many possible development forms. So…I’ve got to make a good stab at it. Here’s my preliminary thinking on bringing the course to life.

I’ve actually been working a lot on the design of university learning experiences. There have been several instances in the past year or two that have really pushed my thinking in this space. This naturally includes application-based instruction, as well as meaningful (and minimal) content, and good assessment design. It’s been handy for this!

Naturally, Designing mLearning will be the text (The Mobile Academy is focused on formal education, and these students are focused on the workplace). As it turns out, the book isn’t written in the order I want to deliver the course. So while I’ll have specific readings each week, I’ll also recommend that the students read it in one go (it’s not a long book), since two different cuts through the material will be a better learning experience.

I’m also thinking about the assignments: having them do meaningful things. E.g. designing solutions. Also, in the right order, to facilitate useful processing. I suppose I should worry about whether the workload will be too much. However, as it’s a compressed course the expectations per week are higher. And I will argue that I’m having them do more than consume, so the workload’s ok.

The important thing, to me, is getting the emotional trajectory right. I reckon I need an aspirational goal up front, and then ensure I deliver. It needs to work on a week-by-week basis and overall. I’m making sure they’re doing the right things, and then I’ll fine-tune. I like the chance to integrate my thinking and put a stake in the ground. It’ll be interesting to see how it goes.

As a side note, mobile seems (to me) to be resurrecting. I would think it’s now mainstream, but some folks are still getting started. Hey, it makes sense, so better late than never! Where are you in mobile?

A foolish inconsistency

Here, a foolish inconsistency is the hobgoblin of my little mind. While there are some learnings in here (for me and others), it’s really just getting stuff off my chest. Feel free to move along. This is just a lack of consistency that I suggest is unnecessary and ill-conceived.

I’ve hinted at this before, but I don’t think I’ve gone into detail. I like LinkedIn. It’s a useful augment for business networking. However, what drives me nuts is the inconsistency between the device app and the web interface. One instance is sufficient: messaging someone you’ve just connected to.

So, on the device, if you link to someone, you immediately get a notice and a link to send them a message. And I like that, since I like to send a quick followup to everyone I link to (a trick I learned from a colleague). On the device, it goes straight to the messaging interface. Perfect. Now, for invitations on the app that I want to query (e.g. it’s not clear why they’ve linked), or where I want to explain why I won’t link (I generally don’t link to orgs, for instance), I can’t do that, but that’s ok; it can wait ’til I’m on my laptop using the (richer) web app.

On the web version, when I accept a link, I’m also offered the chance to message them, but here’s the trick: it’s not a message, it’s an InMail! And, of course, those are limited. I don’t want to use my InMails on messaging someone I’m already linked to. (I don’t use them in general, but that’s a separate issue.) WHY can’t they go to messages like the app? That’d be consistent, and this is a worse default than using messages. I get that the app would have more limited functionality in return for being an app (there’re benefits, like notifications), but why would the full web version do things that are contrary to your interests and intentions?!?!

Good design says consistency is a good thing, generally; certainly aligning with user expectations and best interests. It’s bad design to do something that’s unnecessarily wasteful. There are lots of such irritations: web forms that only tell you the expected format after you get it wrong, instead of making it easy to point to the answer or giving you a clue, and sites with mismatched security (overly complex for unessential data or vice-versa) are just two examples. This one, however, continues to be in my face regularly.

This inconsistency is instead a hobgoblin of a sensible mind. Has this irritated you, or what other silly designs bedevil you?

PSA SPF

We interrupt your regularly scheduled blog series for this important public service announcement:

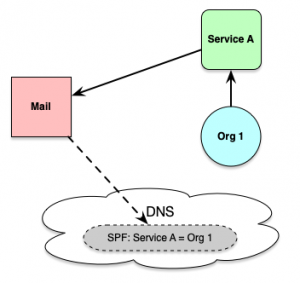

A number of times now, I’ve discovered that there was email being sent to me that I was not getting. Fortunately, my ISP is also a colleague, mentor, and friend and a real expert in cybersecurity, so I asked him. And he explained it to me (and then again when I’d forgotten and it happened again; sorry Sky!). So I’ll document it here so I can point to it in further instances. And it’s about domains and SPF, so it’s a wee bit geeky (and at the edge of my capability). Yet it’s also important for reducing spam, and I’m all for that. So here we go.

This started with an organization where I had been conversing with individuals. And eventually it became clear that they had sent me a form letter, as part of a bigger mailing, and assumed I had it while I was still asking about details in said form letter. Debugging this is how I found out what happened.

Now, when an org sends you email directly, your mail system tracks the path it takes to get to you. If it traces back to the server of the org the mail says it’s from, all’s good. For certain types of mails (e.g. event-related or service-related), however, those mails are sent via a service. A good mail server should check whether mail sent by the service really is from the org it claims to be from. Otherwise, you could have a lot of people sending things pretending to be from one place but … can you say ‘spam’? Right.

So, what the org needs to do is create a really simple one-line bit of text in something called a Sender Policy Framework (SPF) record that says “they mail on my behalf”. That is, the record lets the org publish a list of IP addresses or subnets that are authorized to send email on its behalf. And, seriously, this is simple enough that I can do it.
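
To make that concrete, here’s a minimal sketch in Python of what such a record says and how a receiving server might read it. Everything specific here is an assumption for illustration: the record text, the addresses (taken from ranges reserved for documentation), and the function names are all made up. It also only handles the `ip4:`/`ip6:` parts of a record; real records often use `include:` to point at the mail service’s own record instead, and a real mail server does a good deal more than this.

```python
import ipaddress

# Hypothetical SPF record, as it might appear in a DNS TXT entry for the org's domain.
# The addresses come from documentation-only ranges, not from any real mail service.
EXAMPLE_SPF = "v=spf1 ip4:192.0.2.0/24 ip4:198.51.100.25 -all"


def authorized_networks(spf_record):
    """Return the networks that the record's ip4:/ip6: terms authorize to send mail."""
    terms = spf_record.split()
    if not terms or terms[0].lower() != "v=spf1":
        raise ValueError("not an SPF record")
    networks = []
    for term in terms[1:]:
        # A leading +, -, ~ or ? is a qualifier; no qualifier means "+" (pass).
        qualifier = term[0] if term[0] in "+-~?" else "+"
        mechanism = term.lstrip("+-~?")
        if qualifier != "+":
            continue  # e.g. "-all" says "reject everyone else", so it authorizes nobody
        name, _, value = mechanism.partition(":")
        # Simplification: include:, a, mx and other mechanisms are ignored in this sketch.
        if name.lower() in ("ip4", "ip6") and value:
            networks.append(ipaddress.ip_network(value, strict=False))
    return networks


def sender_is_listed(spf_record, sender_ip):
    """True if sender_ip falls inside one of the networks the record authorizes."""
    ip = ipaddress.ip_address(sender_ip)
    return any(ip in network for network in authorized_networks(spf_record))
```

With this made-up record, `sender_is_listed(EXAMPLE_SPF, "192.0.2.17")` is true because that address sits inside the listed subnet, while an address the org never listed, such as `203.0.113.9`, comes back false. That second case is the mail I end up not getting.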

Yet somehow, some orgs don’t do this. Now, some mailers don’t check, but they should! Checking the org’s DNS entry to see if there’s an SPF record covering the service will help reduce spam. So my ISP checks rigorously. And then I miss mail when people haven’t done the right thing in their tech setup. When I have this type of problem, it’s pretty much one of these.

Please, please, do check that your orgs get this right if they do use a service. That would be orgs doing mailing lists through external providers (e.g. small firms without the resources to purchase bulk mail systems). And you can ignore this if it doesn’t apply to you, but if you do have the symptoms, feel free to point people here to help them understand what to fix. I certainly will!

We now return you to your regularly scheduled blog, already in progress.