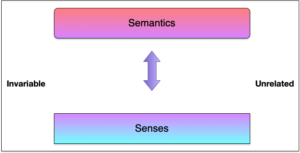

Once again facing folks who aren’t using styles, I was prompted to think more deeply about the underlying principle. That is, to separate content from description. It’s a step forward in what we can do to build more powerful, human-aligned systems.

And, as always, here’s the text, in case you (like me) prefer to read ;).

I’ve ranted before about styles, but I want to make a slightly different pitch today. It’s not just about styles, it’s about the thinking behind them. The point is to separate content from description.

So, the point about styles is that they’re a definition of formatting. You have elements of documents like headings at various levels, and body text, and special paragraphs like quotes, and so on. Then you have features, like font size, bolding and italics, color, etc. And what you see, too often, is people hand-formatting documents, choosing to do headers by increasing the font size, bolding, etc. And, importantly, having to go through and change them all manually if there’s a desire for a change in look.

The point of styles is instead merely to say this is a heading 1, this is a figure, this is a caption, and so on. Then, you separately say: heading 1s will be font size 16 bold and left-justified. Figures and captions will be centered, in font size 12. And so on. Then, should someone want to change how the document’s formatted, you just change the definition of heading 1, and all the heading ones change.

It goes further. You can define that all heading ones have a page break before (e.g. a new chapter in a book). And you can define new styles, like for a callout box (e.g. colored background), etc. You can have different heading ones for a book than for a white paper. And some styles can be based on others. So your headings can use the same font as your body text, and if you want it all to change, you change the source and the rest will change.
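For those who like to see it concretely, here’s a minimal sketch of the idea in Python. It isn’t how any particular word processor implements styles; the `Style` class, the `resolve` function, and the font names are all made up for illustration. The document only records the role of each paragraph, and the look is worked out separately from the style definitions, including styles based on other styles.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Style:
    """A named bundle of formatting, optionally based on another style (hypothetical)."""
    name: str
    based_on: Optional[str] = None
    props: dict = field(default_factory=dict)


def resolve(styles, name):
    """Return the full formatting for a style: inherited properties first, own overrides last."""
    style = styles[name]
    inherited = resolve(styles, style.based_on) if style.based_on else {}
    return {**inherited, **style.props}


# The description: what each role looks like. The font is an assumed example.
styles = {
    "body": Style("body", props={"font": "Georgia", "size": 12, "bold": False}),
    "heading 1": Style(
        "heading 1",
        based_on="body",
        props={"size": 16, "bold": True, "align": "left", "page_break_before": True},
    ),
    "caption": Style("caption", based_on="body", props={"align": "center"}),
}

# The content: each paragraph only says what it is, never how it looks.
document = [
    ("heading 1", "Why styles matter"),
    ("body", "Some body text."),
    ("caption", "Figure 1"),
]

for role, text in document:
    print(role, resolve(styles, role), text)
```

Change the font in `body` and every style based on it picks up the change the next time it’s resolved, with no paragraph touched by hand.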

Which is wonderful for writing, but the concept behind this is what’s really important to get your head around. That is, separating out role from description. That’s what’s led me to be keen on content systems. The notion of pulling up content by description instead of hardwiring together content into an experience is the dream.

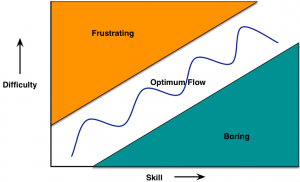

It’s all about beginning to use semantics, that is, the meaning of things, as a manipulable tool. Many years ago, I led a project creating an adaptive learning system. We were going to have content objects defined by topic, and learning role, and tagged in terms of media, difficulty, and more. So you could say: “pull a video on an example of diversity set in a sales office”. Our goal, with a suite of rules about when to move up or back in difficulty, was to specify what learning content the learner should see next, etc.
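To make that concrete, here’s a rough sketch in Python of pulling content by description. It is not the system we built; the `ContentObject` fields, the `find` and `next_difficulty` functions, the sample titles, and the score thresholds are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentObject:
    """A piece of learning content described by tags (hypothetical schema)."""
    title: str
    topic: str
    role: str        # learning role, e.g. "concept", "example", "practice"
    media: str       # e.g. "video", "text"
    setting: str
    difficulty: int  # 1 = easiest


def find(library, **wanted):
    """Return every content object whose tags match all the requested values."""
    return [
        item for item in library
        if all(getattr(item, tag) == value for tag, value in wanted.items())
    ]


def next_difficulty(current, last_score, lowest=1, highest=3):
    """One simple rule: move up after a strong result, back after a weak one.

    The 0.8 and 0.5 thresholds are arbitrary assumptions.
    """
    if last_score >= 0.8:
        return min(current + 1, highest)
    if last_score < 0.5:
        return max(current - 1, lowest)
    return current


# Made-up sample library.
library = [
    ContentObject("Team meeting scenario", "diversity", "example", "video", "sales office", 1),
    ContentObject("What diversity means", "diversity", "concept", "text", "general", 1),
    ContentObject("Hiring panel scenario", "diversity", "example", "video", "factory floor", 2),
]

# "Pull a video on an example of diversity set in a sales office."
matches = find(library, topic="diversity", role="example", media="video", setting="sales office")
print([item.title for item in matches])
```

Nothing in the request names a specific file. It names a description, and the system finds whatever fits.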

This is how adaptive platforms work. When Amazon or Netflix make a recommendation for you, there’s not someone watching your behavior; instead, it’s a set of rules matching your particular actions to content recommendations. If you’ve ordered a lot of British mysteries, and you haven’t seen a particular series that lots of other people like, it’ll be likely to be offered to you.
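A toy version of such a rule might look like the following Python sketch. To be clear, this is not how Amazon or Netflix actually do it; the `recommend` function, the data shapes, and the titles are invented purely to show the matching idea.

```python
from collections import Counter


def recommend(history, catalog, limit=3):
    """Suggest unseen items from the viewer's most frequent genre, most liked first.

    history: list of (title, genre) pairs the person has already chosen.
    catalog: list of dicts with "title", "genre", and "likes" keys.
    """
    if not history:
        return []
    favorite_genre, _ = Counter(genre for _, genre in history).most_common(1)[0]
    seen = {title for title, _ in history}
    candidates = [
        item for item in catalog
        if item["genre"] == favorite_genre and item["title"] not in seen
    ]
    candidates.sort(key=lambda item: item["likes"], reverse=True)
    return [item["title"] for item in candidates[:limit]]


# Made-up data: someone who has ordered mostly British mysteries.
history = [
    ("Mystery A", "british mystery"),
    ("Mystery B", "british mystery"),
    ("Space Saga", "sci-fi"),
]
catalog = [
    {"title": "Mystery A", "genre": "british mystery", "likes": 900},
    {"title": "Mystery C", "genre": "british mystery", "likes": 500},
    {"title": "Mystery D", "genre": "british mystery", "likes": 700},
    {"title": "Robot Wars", "genre": "sci-fi", "likes": 999},
]

print(recommend(history, catalog))
```

No one is watching; the rule just matches tagged behavior to tagged content.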

This is the opportunity of the future. We can start doing this with learning (and coaching)! We can start pulling together your learning goals, job role, current progress, current location (in time and space), etc., and offer you particular things that are appropriate for you. And, like our learning system, it might be recommendations of content, but you can choose others, or ignore, or… As Wayne Hodgins used to say, present the ‘right stuff’: the right content to the right person at the right time in the right way….

The point being, just like styles, if we stop hardwiring things together, hand-formatting learning experiences, we can start offering personalized and even adaptive learning. Yes, there are technical backend issues, and more rigor in development, but this is the direction we can, and should, go. At least, that’s my proposal. What say you?