Another reflection, triggered by my visit to DevLearn. One of the things that matters, and that we don’t discuss enough, is analysis: starting up front by determining what we actually need! There are nuances here, and I’m not a total expert (paging Dawn Snyder), but certain things are obvious. So let’s take some time analyzing analysis.

Analysis is the first part of the process. Yes, there are the organizing and managing bits, but the process starts with analysis, whether it’s ADDIE, SAM, LLAMA, or any other acronym. You need to determine what’s going on, what the need is, and what the appropriate remedy is.

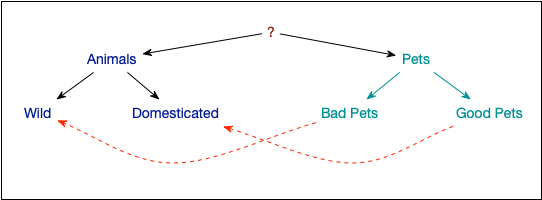

One of the first things to note is that not everything that comes to L&D is a fit for a course. As is widely noted (e.g., here), there are lots of reasons courses aren’t the only answer. The real trigger should be a need. That is, there’s a new skill set required for something we’ve identified as necessary. Or, there’s something we’re already doing, but badly. At core, there are two states: where we need to be, and where we are. The gap between them is what we want to remedy.

Then, it’s a matter of determining why we’re not where we want to be. The reason is that different problems call for different interventions, as Guy Wallace talks about in his tome. It might be a lack of resources, or people might be rewarded for doing X even though it’s Y they’re supposed to be doing. These, by the way, aren’t things we deal with! That’s why you do analysis: so you don’t build a solution where the real solution lies elsewhere.

When it is a situation where knowledge in the world, or in the head, will help, then we can jump into action. Of course, we need a clear definition of what it is people need to be able to do, under what conditions, etc. BTW, what we need are performance objectives, not ‘learning’ objectives. That is, it’s about doing. Which is why, if the circumstances support it, we should be providing job aids, not courses! You’ll usually find that job aids are cheaper to develop than courses. If the task isn’t performed very frequently, or is performed too frequently, memory will play a role, and external memory is valuable in many such circumstances.

When you’ve determined that a course is needed, you can develop one. HOWEVER, you need certain things from the analysis phase here too. In short, you need to understand the actual performance. That includes what the performance should be, and how you can tell. Essentially, you need to know the decisions people must make to deliver the required outcomes. That involves knowing the models that describe how the world works in this particular area, the ways people go wrong and why, and why people should care. This is where you need your subject matter experts (SMEs). Then you can build practice activities that align, the models and examples, then the hook and closing, and…

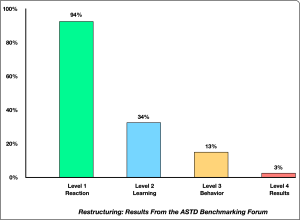

Whatever it is, ideally there’s a metric that says what’s needed. You design to that metric, and then test until you achieve it. If you’re using up resources faster than you’re closing in on it, you can stop and evaluate consciously. Is the lower level OK? Can we get more resources? Should we abandon ship? Doing so consciously is better than just going ’til you run out of time and/or money.

Analysis is a necessary first step. What isn’t is simply acquiescing to a ‘we need a course on X’ request. Do you trust them to know that a course on X solves their problem? (Not the way to bet.) You can, and should, say, “yes, and…let’s dig in and make sure we’re solving the right problem”. Analysis is, properly, the way to start looking at problems. You understand what the gap is, then the root cause, and then align an intervention, or interventions, to address it. By analyzing analysis, we can figure out what we have to do, and why.

And, yes, I just gave a talk on designing in the real world, and you may have to infer much of the above from whatever limited resources you have, but at least you know what you need to come away with.