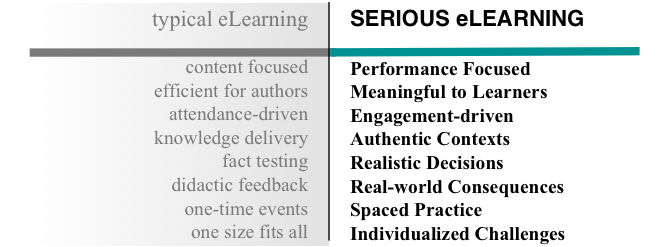

…and what’s most important to fix? I was a co-conspirator on the Serious eLearning Manifesto, and we identified 8 values that separated typical elearning from serious elearning. However, I suspect that not all are equally important, nor equally hard to fix. And, thinking about what my unique contribution could and should be, I wondered where best to target my efforts to address where we’re going most wrong. I have some thoughts, but…

First, I’d like to ask you two questions:

- What are the best inflection points to improve learning?

- Which would you most want to have help in addressing?

Note that they might be two completely different things.

Now, it could be a number of things. Any one of the eight could be problematic. And it might be another that’s where you most would like help.

Is it getting the right objectives in the first place? We might fail to do the proper performance consulting. Thus, we’d be developing learning solutions that aren’t going to meet the need.

Another possibility is that we’re not providing the right support. We might be delivering a content dump of whatever’s to hand instead of useful models and examples.

We might not be helping learners understand why they should care. Are we missing out on developing motivation? Making it meaningful?

Another problem might be giving them abstract concepts instead of concrete practice. Are we asking them to do things in situations they recognize?

Also, we could be asking them to recite knowledge back to us instead of applying it. Are we asking them to make the decisions we need them to make after the learning experience?

And we might be giving them simple feedback like “right” and “wrong” instead of providing them first with the consequences of their actions. And, we could be ensuring that the alternatives represent some real ways people go wrong, and providing feedback that addresses those specific misconceptions.

There’s also the possibility (probability?) that we’re not spacing out the learning. We could still be using the ‘event’ model, not reactivating the knowledge as appropriate.

And, of course, we might not be individualizing the challenges. We could be adapting to demonstrated learner capability. Are we?

Not only might one or more of these be the biggest contributor to a lack of learning impact, but some might be more challenging than others to address. And, of course, which ones should I be focusing on? I do address all in a variety of ways (cf. the learning science 101 session I’ll be doing for the Learning Development Conference), but I’m thinking of focusing in.

And I have an idea where we may be going most wrong. But first, I’d like to hear your ideas. I’ll weigh in next week. And, of course, I could be wrong. So let me know!