In the process of looking at ways to improve the design of courses, the starting point is good objectives. And as a consequence, I've been enthused about the notion of competencies, as a way to put the focus on what people do, not what they know. So how do we do this, systematically, reliably, and repeatably?

Let's be clear, there are times we need knowledge-level objectives. In medicine or any other field where responses need to be quick and accurate, we need a very constrained vocabulary. So drilling in the exact meanings of words is valuable, as an example. Though ideally, that's coupled with using that language to set context or make decisions. So "we know it's the right medial collateral ligament, prep for the surgery" could serve as a context, or we could have a choice to operate on the left or right atrial ventricle as a decision point. As Van Merriënboer's 4 Component Instructional Design talks about, we need to separate out the knowledge from the complex problems we apply it to. Still, I suggest that what's likely to make a difference to individuals and organizations is the ability to make better decisions, not recite rote knowledge.

So how do we get competencies when we want them? The problem, as I've talked about before, is that SMEs don't have access to 70% of what they actually do; it's compiled away. We need good processes, then, so I've talked to a couple of educational institutions doing competencies, to see what could be learned. And it's clear that while there's no turnkey approach, what's emerging is a process with some specific elements.

One thing is that if you're trying to cover a whole college-level course, you've got to break it up. Break down the top level into a handful of competencies. Then you continue to take each of those apart, and perhaps go down another level, 'til you have a reasonable scope. This is heuristic, of course, but with a focus on 'do', you have a good likelihood of getting there.

One of the things I've heard across various entities trying to get meaningful objectives is working with more than one SME. If you can get several, you have a better chance of triangulating on the right outcomes and objectives. They may well disagree about the knowledge, but if you manage the process right (emphasize 'do', lather, rinse, repeat), you should be able to get them to converge. It may take some education, and you may have to let them get the

Not just any SMEs will do. Two things are really valuable: on-the-ground experience to know what needs to be done (and what doesn't), and the ability to identify and articulate the models that guide the performance. Some instructors, for instance, can teach to a text but aren't truly masters of the content, nor are they experienced practitioners. Having multiple SMEs helps, but the better the SME, the better the outcome.

I believe you want to ensure that you're getting both the right things, and all the things. I've recommended that a client triangulate not just with SMEs, but with practitioners (or, rather, the managers of the roles the learners will be engaged in), and any other reliable stakeholders. The point is to get input from the practice as well as the theory, identifying the models that support proper behavior, and the misconceptions that underpin where learners go wrong.

Once you have a clear idea of the things people need to be able to do, you can then identify the language for the competencies. I'm not a fan of Bloom's (unwieldy, hard to reliably apply), but I am a fan of Mager-style definitions (action, context, metric).
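To make the three parts concrete, here is a minimal sketch of a Mager-style definition as a small template. The class name `Competency`, the sentence pattern, and the example wording are all my own assumptions for illustration, not a standard format, and the 9-of-10 threshold is made up.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Competency:
    """Hypothetical template for a Mager-style definition."""
    action: str   # what the learner does (a 'do', not a 'know')
    context: str  # the conditions under which they do it
    metric: str   # how we judge that it was done well enough

    def statement(self) -> str:
        # One possible sentence pattern; adjust to taste.
        return f"{self.context}, the learner will {self.action}, {self.metric}."


# Illustrative wording only; the threshold is invented for the example.
example = Competency(
    action="choose whether to operate",
    context="Given a patient history and imaging results",
    metric="matching the expert panel's decision in at least 9 of 10 cases",
)

print(example.statement())
```

Forcing all three fields to be filled in is the point: if you can't state the context or the metric, the competency probably isn't scoped yet.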

After this is done, you can identify the knowledge needed, and perhaps create objectives for that, but to me the focus is on the 'do', the competencies. This is very much aligned with an activity-based learning model, whereby you immediately design the activities that align with the competencies before you decide the content.

So, this is what I'm inferring. There are good tools and templates you could design to go with this, identifying competencies and misconceptions, and at the same time gathering stories and motivations. (An exercise left for the reader. ;) The overall goal, however, of getting meaningful objectives is key to getting good learning design. Any nuances I'm missing?

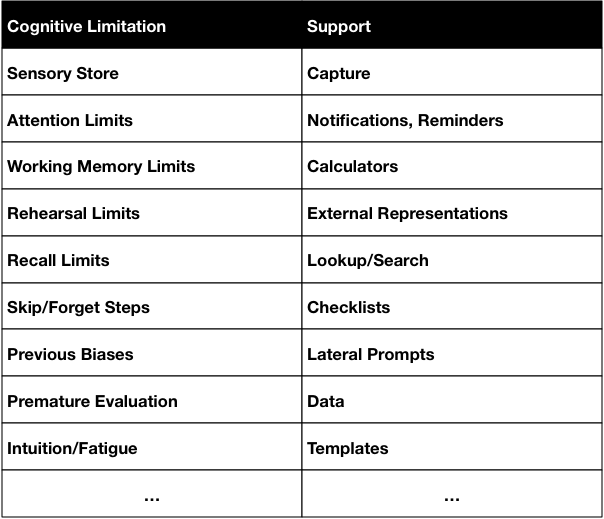

So, for instance, our senses capture incoming signals in a sensory store, which has the interesting property of almost unlimited capacity, but only for a very short time. There is no way all of it can get into our working memory, so what we attend to is what we have access to. As a result, we can’t accurately recall everything we perceive. However, technology (camera, microphone, sensors) can recall it all perfectly. So making capture capabilities available is a powerful support.
