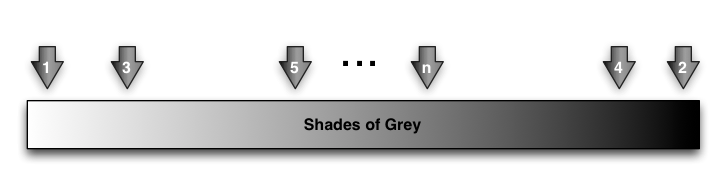

In looking across several instances of training in official procedures, I regularly see that, despite bunches of regulations and guidelines, things are not black and white; there are myriad shades of grey. And I think that there is probably a very reasonable way to deal with it. (Surely you didn’t think I was talking about a book!)

In these situations, there are typically cases that are very white, others that are very black, but most end up somewhere in the middle, with a fair degree of ambiguity. And the concerns of the governing body are various. In one instance, the body was more concerned that you’d done due diligence and could show a trail of the thinking that led to the decision. If you did that, you were ok, even if you ended up making the wrong decision. In another case, the concern was more about consistency and repeatability. You didn’t want to show bias.

However, the training doesn’t really reflect that. In many cases, they point out the law (in the official verbiage), you work through some examples, and you’re quizzed on the knowledge. You might even workshop a few examples. Typically, you are to get the ‘right answer’.

I’d suggest that a better approach would be to give the learners a series of examples that are first workshopped by small groups, with their work brought back to the class. The important things are how the discussion is facilitated and supported, and the choice of problems. First, I think they’re given the problems and the associated requirements, guidelines, or regulations. Period. No presentation beforehand, nothing except reactivating the relevance of this material to their real work.

I’m suggesting that the first problem they face be, essentially, ‘white’, and the second is ‘black’ (or vice versa). The point is for them to see what the situation looks like when it’s very clear, and for them to get used to using the materials to make a determination. (This is likely what they’re going to be doing in real practice anyway!) At this point, the discussion facilitation is focused on helping them understand how the rules play out in the clear cases.

Then they start getting greyer cases, ones where there’s more ambiguity. Here, the focus of discussion facilitation is to start emphasizing the subtext: either ‘document your work’, or ‘be consistent’, or whatever. The number of these will depend on how much practice they need. If the decisions are complex, relatively infrequent, or really important, they’ll need more practice.

This way, the learners are a) getting comfortable with the decisions, b) getting used to using the materials to make the decisions, and c) recognizing what’s really important.

I suspect that this may be problematic for some of the SMEs, who may prefer to argue for right/wrong answers, but I think it reflects the reality when you unpack the thinking behind the way it plays out in practice. And I think that’s more important for the learners, and the training organization, to recognize.

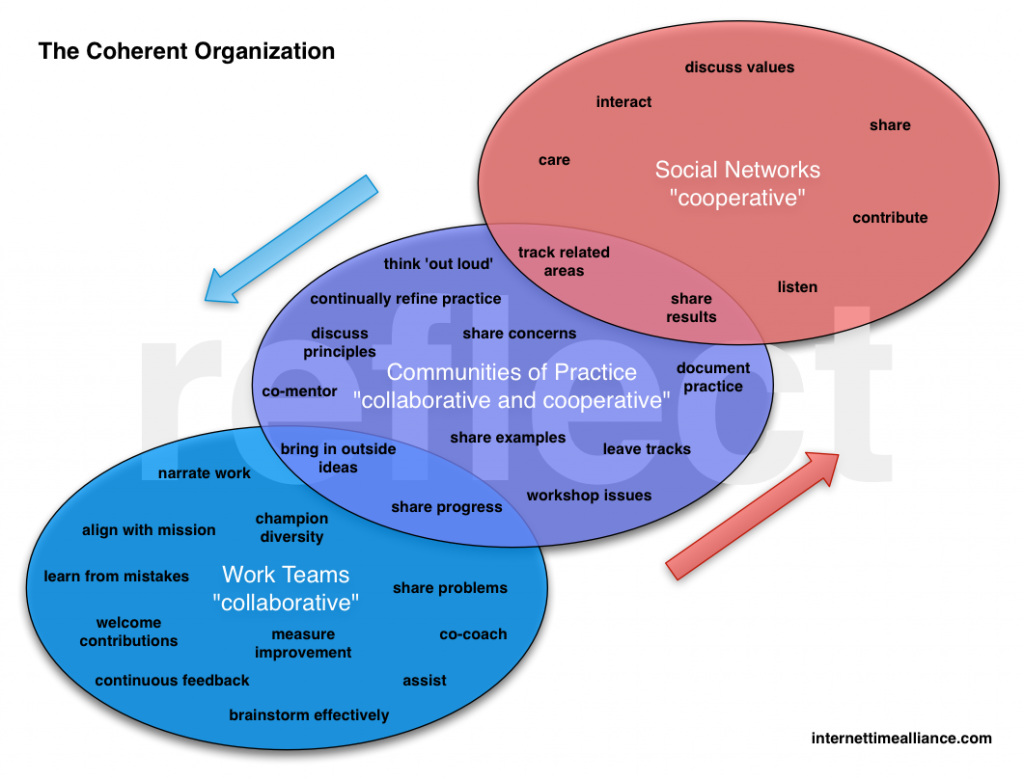

Of course, as they work in groups, the most valuable way to support them may be for them to have the coordinates of other members of their group to call on when they face really tough decisions. That sort of collaboration may trump formal instruction anyway ;).