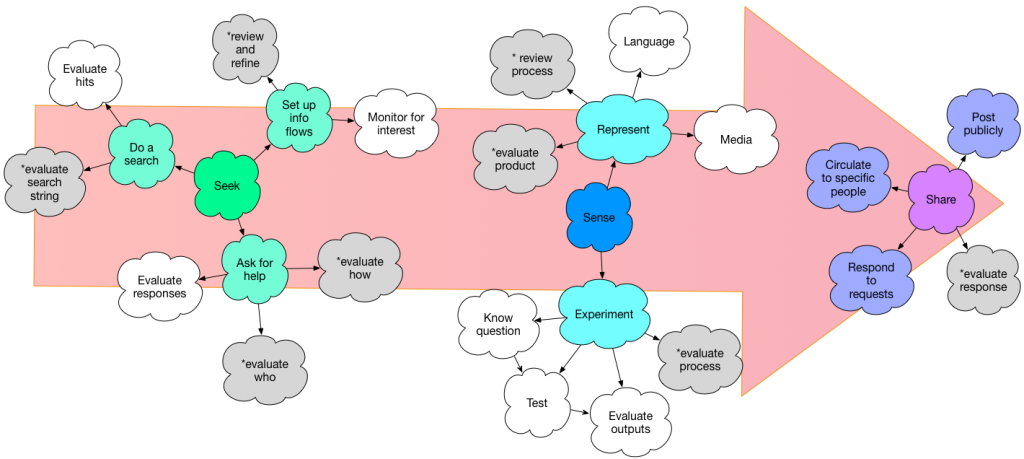

A number of years ago, I took a stab at an innovation process. I was reminded of it while thinking about personal learning, and looked at it again. And it doesn’t seem to have aged well. So I thought I’d revisit the model, and see what emerged. So here’s a mindmap of personal learning, and the associated thinking.

The earlier 5R model was based on Harold Jarche’s Seek-Sense-Share model, a deep model that has many rich aspects. I had reservations about the labels, and I think it’s sparse at either end. (And, I worked too hard to try to keep it to ‘R’s, and Reify just doesn’t work for me. ;)

In this new approach, I have a richer representation at either end. My notion of ‘seek’ (yes, I’m still using Harold’s framework, more at the end) has three different aspects. First is ‘info flows’. This is setting up the streams you will monitor. They’re filters on the overwhelming overload of info available. They’re your antenna for resonating with interesting new bits. You can also search for information, using DuckDuckGo or Google, or going straight to Wikipedia or other appropriate information resources you know. And, of course, you can ask, using your network, or Quora, or on any social media platform like LinkedIn or Facebook. And there are different details in each.

To make sense of the information, you can do either or both of representing your understanding and experimenting. Representing is a valuable way to process what you’re hearing, to make it concrete. Experimenting is putting it to the test. And you naturally do both; for instance read a web page telling you how to do something that’s new, and you put it into practice and see if it works. Both require reflection, but getting concrete in trying it out or rendering it is valuable. Again, representing and experimenting break down into further details.

What you learn can (and often should) be shared. At whatever stage you’re at, there’s probably someone who would benefit from what you’ve learned. You can post it publicly (like this blog), or circulate it to a well-selected set of individuals (and that can range from one other person to a small group or some channel that’s limited). Or you can merely have it in readiness so that if someone asks, you can point them to your thoughts. Which is different than pointing them to some other resource, which is useful, but not necessarily learning. The point is to have others providing feedback on where you’re at.

I looked at Harold’s model more deeply after I did this exercise (a meta-learning on its own; take your own stab and then see what others have done). I realize mine is done on sort of a first-principles basis from a cognitive perspective, while his is richer, being grounded in others’ frameworks. Harold’s is also more tested, having been used extensively in his well-regarded workshop.

I note that part of the meta-learning here is the ongoing monitoring of your own processes (the starred grey clouds). This is a key part of Harold’s workshop, by the way: looking at your processes and evaluating them. An early exercise where you evaluate your own network systematically, for instance, struck me as really insightful. I’m grateful he was willing to share his materials with me.

So, this has been my sensing and sharing, and I hope you’ll take the opportunity to provide feedback! What am I missing?