I recently came across an article ostensibly about branching scenarios, but somehow the discussion largely missed the point. Ok, so I can be a stickler for conceptual clarity, but I think it’s important to distinguish between different types of scenarios and their relative strengths and weaknesses.

So in my book Engaging Learning, I was looking to talk about how to make engaging learning experiences. I was pushing games (and still do) and how to design them, but I also wanted to acknowledge the various approximations thereto. So in it, I characterized the differences between what I called mini-scenarios, linear scenarios, and contingent scenarios (the latter are what are traditionally called branching scenarios). These are all approximations to full games, with various tradeoffs.

At core, let me be clear, is the need to put learners in situations where they need to make decisions. The goal is to have those decisions closely mimic the decisions they need to make after the learning experience. There’s a context (aka the story setting), and then a specific situation triggers the need to make a decision. And we can deliver this in a number of ways. The ideal is a simulation-driven (aka model-driven or engine-driven) experience. There’s a model of the world underneath that calculates the outcomes of your actions and determines whether you’ve yet achieved success (or failure), or generates a new opportunity to act. We can (and should) tune this into a serious game. This gives us deep experience, but the model-building is challenging and there are shortcuts.
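To make the "model underneath" idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the state variables (`risk`, `goodwill`), the two actions, and the success and failure thresholds are hypothetical, not taken from the book or from any particular engine. The point is only that outcomes are calculated from rules and variables rather than written out in advance.

```python
from dataclasses import dataclass


@dataclass
class World:
    # Hypothetical state variables; a real model would come from the domain.
    risk: int = 5
    goodwill: int = 5


# Hypothetical actions: each one is a rule that computes a new world state.
ACTIONS = {
    "act_cautiously": lambda w: World(w.risk - 3, w.goodwill - 2),
    "act_boldly": lambda w: World(w.risk + 2, w.goodwill + 1),
}


def step(world, action):
    """Apply one action and report 'success', 'failure', or 'continue'."""
    new_world = ACTIONS[action](world)
    # Assumed thresholds: too much risk or no goodwill left ends the game.
    if new_world.risk >= 9 or new_world.goodwill <= 0:
        return new_world, "failure"
    if new_world.risk <= 0:
        return new_world, "success"
    # Otherwise the model generates a new opportunity to act.
    return new_world, "continue"
```

Because the consequences live in the rules, the same few lines cover every sequence of actions a learner might try, which is where the replayability comes from. The cost is exactly the one noted above: someone has to build and tune the model.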

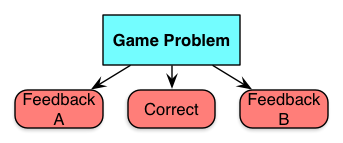

In mini-scenarios, you put the learner in a setting with a situation that precipitates a decision. Just one, and then there’s feedback. You could use video, a graphic novel format, or just prose, but the game problem is a setting and a situation, leading to choices. Similarly, you could have them respond by selecting option A, B, or C, or pointing to the right answer, or whatever. It stops there. Which is the weakness, because in the real world the consequences are typically more complex than this, and it’s nice if the learning experience reflects that reality. Still, it’s better than a knowledge test. Really, these are just better-written multiple choice questions, but that’s at least a start!

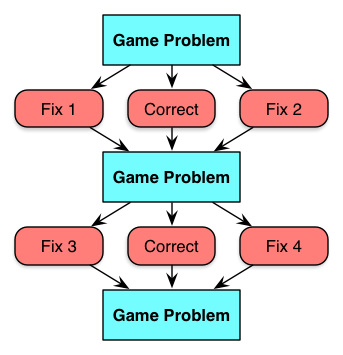

Linear scenarios are a bit more complex. There is a series of game problems in the same context, but whatever the player chooses, the right decision is ultimately made, leading to the next problem. You use some sort of sleight of hand, such as “a supervisor catches the mistake and rectifies it, informing you…” to make it all ok. Or the scenario can terminate on a wrong decision at any point, so the player has to restart. These are a step up in terms of showing the more complex consequences, but are a bit unrealistic. There’s some learning power here, but not as much as is possible. I have used them as sort of multiple mini-scenarios with content in between, where the same story is used for the next choice, which at least made a nice flow. Cathy Moore suggests these are valuable for novices, and I think they’re also useful if everyone needs to receive the same ‘test’ in some accreditation environment to be fair and balanced (though in a competency-based world they’d be better off with the full game).

Then there’s the full branching scenario (which I called contingent scenarios in the book, because the consequences and even new decisions are contingent on your choices). That is, you see different opportunities depending on your choice. If you make one decision, the subsequent ones are different. If you don’t shut down the network right away, for instance, the consequences are different (perhaps a breach) than if you do (you get the VP mad). This, of course, is much more like the real world. The only difference between this and a serious game is that the contingencies in the world are hard-wired in the branches, not captured in a separate model (rules and variables). This is easier, but it gets tough to track if you have too many branches. And the lack of an engine limits the replay and ability to have randomness. Of course, you can make several of these.
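As a rough sketch of what "hard-wired in the branches" can look like, the network example can be written as a plain lookup table in Python. The node names, the wording of each situation, and the follow-up choices after the first decision are all hypothetical and added only for illustration; only the first fork (shut down and anger the VP, or wait and risk a breach) comes from the example above.

```python
# Every consequence is written out by hand as a node; there are no rules
# or variables computing outcomes. All names and text are hypothetical.
SCENARIO = {
    "start": {
        "text": "You spot suspicious traffic on the network.",
        "choices": {"shut_down": "vp_angry", "wait": "breach"},
    },
    "vp_angry": {
        "text": "The network is safe, but the VP is mad about the outage.",
        "choices": {"explain": "end_ok", "apologize": "end_ok"},
    },
    "breach": {
        "text": "Attackers got in while you waited.",
        "choices": {"shut_down_now": "end_damage", "investigate": "end_damage"},
    },
    "end_ok": {"text": "The VP accepts the outcome.", "choices": {}},
    "end_damage": {"text": "Data was lost before containment.", "choices": {}},
}


def play(scenario, decisions, start="start"):
    """Follow a list of decisions through the hard-wired branches.

    Returns the names of the nodes visited, in order.
    """
    node = start
    path = [node]
    for decision in decisions:
        choices = scenario[node]["choices"]
        if decision not in choices:
            raise ValueError(f"{decision!r} is not offered at {node!r}")
        node = choices[decision]
        path.append(node)
    return path
```

Calling `play(SCENARIO, ["wait", "investigate"])` walks a different set of situations than `play(SCENARIO, ["shut_down", "explain"])`, which is the contingency at work. Note that every added decision point means more hand-written nodes, which is why the branches get tough to track, and that nothing here can vary between plays without an engine behind it.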

So the problem I had with the article that triggered this post is that their generic model looked like a mini-scenario, and nowhere did they show the full concept of a real branching scenario. Further, their example was really a linear scenario, not a branching scenario. And I realize this may seem like an ‘angels dancing on the head of a pin’ argument, but I think it’s important to make distinctions when they affect the learning outcome, so you can more clearly make a choice that reflects the goal you are trying to achieve.

To their credit, that they were pushing for contextualized decision making at all is a major win, so I don’t want to quibble too much. Moving our learning practice/assessment/activity to more contextualized performance is a good thing. Still, I hope this elaboration is useful to get more nuanced solutions. Learning design really can’t be treated as a paint-by-numbers exercise; you really should know what you’re doing!

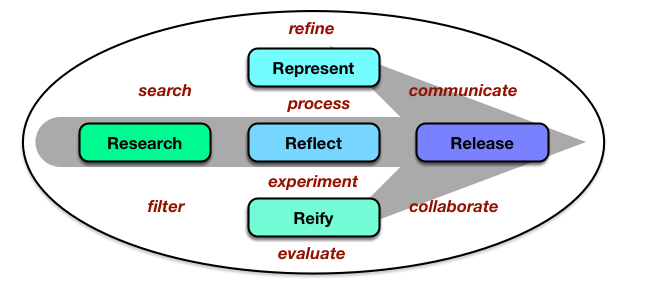

The core is the 5 R’s: Researching the opportunities, processing your explorations by either Representing them or putting them into practice (Reify) and Reflecting on those, and then Releasing them. And of course it’s recursive: this is a release of my representation of some ideas I’ve been researching, right? This is very much based on Harold Jarche’s

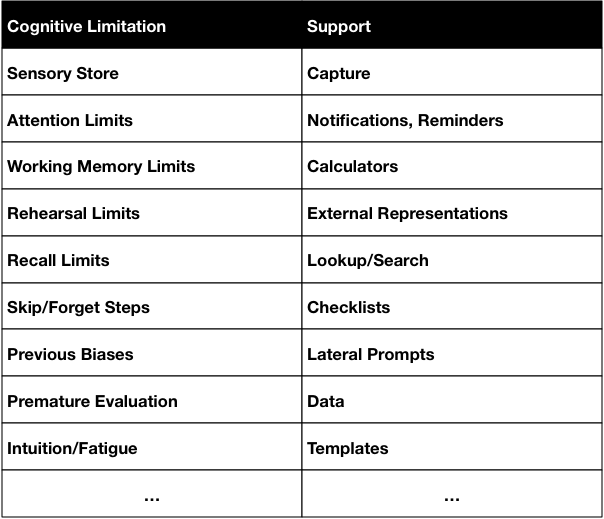

So, for instance, our senses capture incoming signals in a sensory store. This store has the interesting property of almost unlimited capacity, but only for a very short time. And there is no way all of it can get into our working memory, so what we attend to is what we have access to. So we can’t accurately recall everything we perceive. However, technology (camera, microphone, sensors) can recall it all perfectly. So making capture capabilities available is a powerful support.

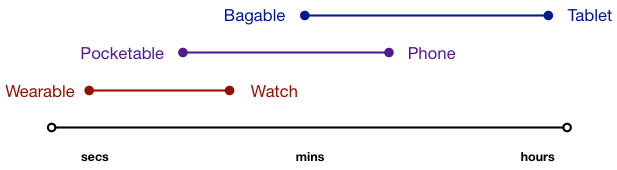

This is similar to the way I’d seen Palm talk about the