I was reflecting on a few things about terminology, buzzwords and branding in particular. And, as usual, learning out loud, here are my reflections.

So I’ve been known to take a bit of a blade to buzzwords (cf. microlearning). And, I reckon there’s a distinction between vocabulary and hype. Further, I get the need for branding (and have been slack on my own part). So, here I talk about buzzwords and branding.

First, vocabulary is important. I’m a stickler (I’m sure some would say pedantic ;) about conceptual clarity. We need to have clear language to distinguish between different concepts. (You shouldn’t say ‘cat’ when you mean ‘dog’, someone’s likely to get a wee bit confused!)

And, to be clear, there’s internal and external vocabulary. For instance, other people don’t really care about objectives; they just want outcomes. Internal vocabulary can serve as shortcuts, helping us minimize what we need to say and still communicate. Brevity is the soul of wit, after all.

And then there’s hype. The distinction, I reckon, is when we start tossing in buzzwords that are new, drawn from elsewhere, and promise great things. Adaptive and neuro- are two examples of buzzphrases that are open to interpretation but sound intriguing. Yet they require careful examination.

Then, there’s branding. You attach a label to something to identify it specifically. Harold Jarche’s Personal Knowledge Mastery (PKM), for instance, is a brand for a framework. So, too, would be Michael Allen’s SAM (Successive Approximation Model) and CCAF (Context-Challenge-Activity-Feedback). They’re ways to package up good ideas. And of course, to take ownership.

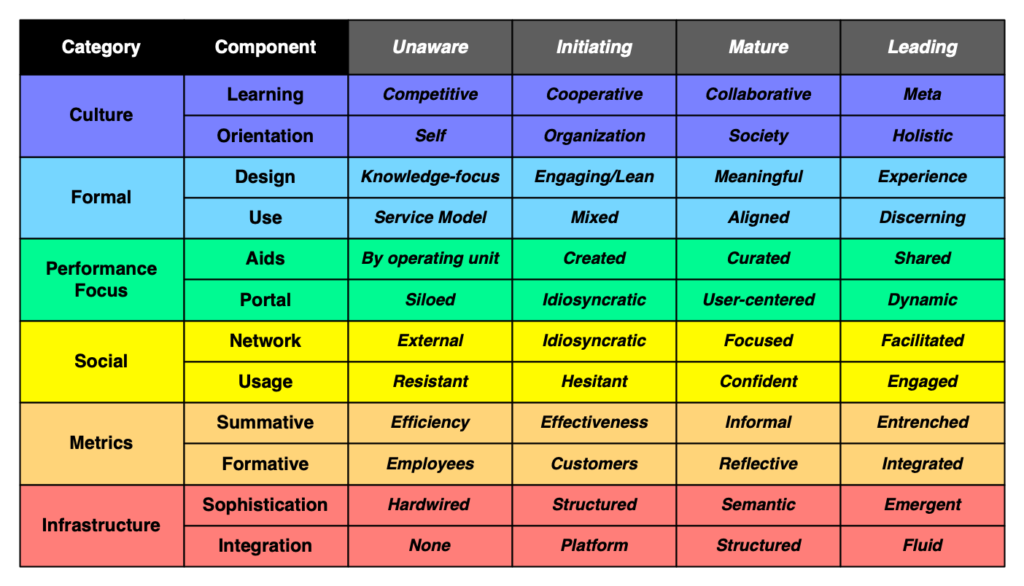

This latter step, I confess, I’ve failed on. The alignment in Engaging Learning and the different categories of mobile are two places I dropped the ball. I recently tried a brief attempt to remedy another, when I released the Performance Ecosystem Maturity Model.

I do have the 4C’s of Mobile, but while that turns out to be useful, it’s not the most important characterization. In a conversation the other day, someone asked what I called the mobile framework I’d mentioned, which he’d found useful. And I didn’t have an answer. I’ve talked about it before, but I didn’t label it. And yet it’s kind of the most important way to look at mobile! I use it as the organizing framework when I talk about mobile (really, the performance ecosystem):

- Augmenting formal learning

- Performance support (mobile’s natural niche)

- Social (more the informal)

- Contextual (mobile’s unique opportunity)

I wasn’t sure what to brand this, so for the moment it’s the Four Modes of mLearning (4M? 4MM?).

And for games, that alignment I mentioned I briefly termed the EEA: Effectiveness-Engagement Alignment. The point is that the elements that lead to effective education practice, and the ones that lead to engaging experiences, have a perfect alignment. It’s been a good basis for design for me. But, again, that labeling came more than a decade after the book first came out.

Ok, so I was counting on the ‘Quinnovation’ branding. And that’s worked, but it’s not quite enough to hang products on. So…I’m working on it. (And it may be that having ‘Learnlets’ separate from Quinnovation is another self-inflicted impediment!)

Still, I think it’s important to distinguish between buzzwords and branding. And they shouldn’t be the same (trademarking ‘microlearning’, anyone ;). Again, vocabulary is important, for clarity, not hype. And branding is good for attribution. But they’re not the same thing. Those are my thoughts, what are yours?