How do you know what’s dubious research? There are lots of signals, more than I can cover in one post. However, a recent discovery illustrates some useful ones. I was trying to recall a paper I’d read recently, which suggested that reading is better than video for comprehending issues. Whether that’s true or not isn’t the point. The point is that in my search, I came across an article that violated a number of principles. So, as I am wont to do, let’s briefly talk about bad research.

The title of the article (paraphrasing) was “Research confirms that video is superior to text”. Sure, that could be the case! (Actually, the results say, not surprisingly, that one medium is better for some things and another is better for others. BTW, one of our great translators of research to practice, Patti Shank, has a series of articles on video that’s worth paying attention to.) Still, this article claimed a definitive result based on at least one study. However, when I looked at that study, there were several problems.

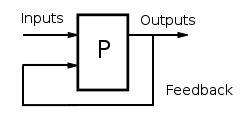

First, the study was a survey asking instructors what they thought of video. That’s not the same as an experimental study! A good study would choose some appropriate content, create equivalent versions in text and video, and then give learners a comprehension test. (BTW, these experiments have been done.) Asking opinions, even of experts, isn’t nearly as good. And these weren’t experts; they were just a collection of instructors. They might have valid opinions, but their expertise wasn’t a sound basis for settling the question.

Worse, the folks conducting the study had. a. video. platform. Sorry, that’s not an unbiased observer. They have a vested interest in the outcome. What we want is an impartial evaluation, and this simply couldn’t be one. Not least, the author was the CEO of the platform.

It got worse. The article also cited the unjustified claim that images are processed 60K times faster than text, yet no original source for that claim has ever been found! It cited learning styles, too! Citing unjustified claims isn’t sound research practice. (For instance, when reviewing articles, I used to recommend rejecting them if they talked about learning styles.) Yes, it wasn’t a research article in its own right, but…I think misleading folks isn’t justified in any article (unless it’s illustrative and you then correct the situation).

Look, you can find valuable insights in lots of unexpected places, and in lots of unexpected ways. (I’ve argued that ‘business significance’ can be as useful as statistical significance.) However, an author with a vested interest, using an inappropriate method, to make claims propped up by debunked myths, isn’t one of them. Please, be careful out there!